A point set registration problem takes two shapes, each represented as a set of points, and estimates which point on one shape corresponds to which point on the other. Here, a "shape" means something like a human body or face: similar to another body or face, yet morphologically diverse. Taking the face as an example, the position of the center of the pupil differs between individuals, but it can be regarded as corresponding to the pupil center of another person. Such correspondences can be estimated by gradually deforming one shape until it can be superimposed on the other. Estimating, for each point on one shape, the corresponding point on the other is the point set registration problem. Because a single shape can consist of millions of points, the correspondences must be computed by a computer. Until now, however, even the fastest conventional method took a long time to register shapes of about 100,000 points, so algorithms that find a solution far faster without sacrificing accuracy have been sought. Conventional methods also required a preliminary registration before automated estimation, so algorithms that do not need this step are desirable as well.

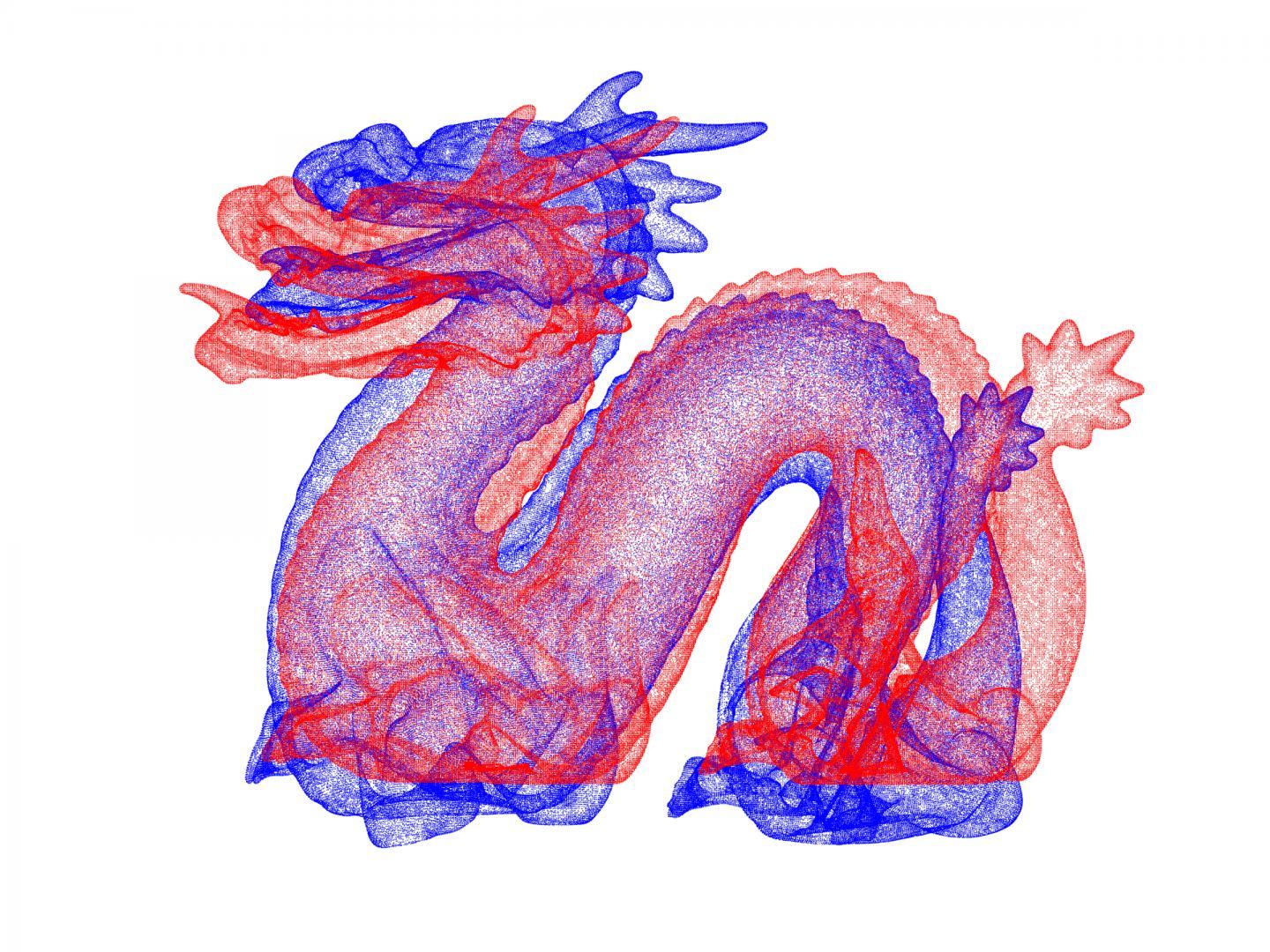

Prof. Osamu Hirose, a young scientist at Kanazawa University, has been working on this problem. His study takes a completely new approach: point set registration is formulated as the maximization of a posterior probability in Bayesian statistics, with the smoothness of the displacement field defined as the prior probability. This formulation led to a new algorithm that can solve typical point set registration problems even without sufficient preliminary registration. In addition, by replacing some of the algorithm's calculations with approximations, point set registration problems can be solved drastically faster than with conventional methods. For example, for two point sets of about 100,000 points each (leftmost in Figure 1), the new method completed a highly accurate registration within 2 min, whereas the fastest publicly available method took about three hours. As shown in Figure 2, the method also successfully registered the "dragon" dataset, in which both point sets consist of 437,645 points each, in roughly 20 min. Although the high-speed calculation relies on approximations, numerical experiments show that registration accuracy is not noticeably reduced.
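To make the idea of "a posterior probability with a smoothness prior" more concrete, here is a minimal NumPy sketch of a closely related conventional method, the non-rigid Coherent Point Drift (CPD) iteration of Myronenko and Song. It is not Prof. Hirose's algorithm. The source points act as the centers of a Gaussian mixture (the likelihood), a Gaussian kernel of width `beta` encodes the smoothness prior on the displacement field, and `lam` sets how strongly that prior is weighted. The function name, parameter names and default values are illustrative assumptions. Because it builds dense M × N matrices, this sketch is only practical for small point sets.

```python
import numpy as np


def gaussian_kernel(Y, beta):
    # G[i, j] = exp(-||y_i - y_j||^2 / (2 beta^2)); encodes the smoothness prior
    sq = np.sum((Y[:, None, :] - Y[None, :, :]) ** 2, axis=-1)
    return np.exp(-sq / (2.0 * beta ** 2))


def register_nonrigid(X, Y, beta=2.0, lam=2.0, w=0.0, n_iter=50):
    """Deform source Y (M x D) toward target X (N x D); return deformed Y.

    Hypothetical helper in the style of non-rigid CPD. w in [0, 1) is the
    assumed weight of a uniform outlier component.
    """
    N, D = X.shape
    M = Y.shape[0]
    G = gaussian_kernel(Y, beta)
    T = Y.copy()
    # Initial isotropic variance from all source-target squared distances
    sigma2 = np.sum((X[None, :, :] - Y[:, None, :]) ** 2) / (D * M * N)
    for _ in range(n_iter):
        # E-step: posterior probability that target point n came from source point m
        d2 = np.sum((X[None, :, :] - T[:, None, :]) ** 2, axis=-1)  # M x N
        K = np.exp(-d2 / (2.0 * sigma2))
        c = (2.0 * np.pi * sigma2) ** (D / 2.0) * w / (1.0 - w) * M / N
        P = K / (K.sum(axis=0, keepdims=True) + c + 1e-300)
        P1 = P.sum(axis=1)   # total responsibility of each source point (M,)
        Pt1 = P.sum(axis=0)  # total responsibility of each target point (N,)
        Np = P1.sum()
        PX = P @ X           # M x D
        # M-step: maximize likelihood x smoothness prior over the coefficients W,
        # i.e. solve (diag(P1) G + lam * sigma2 * I) W = P X - diag(P1) Y
        A = P1[:, None] * G + lam * sigma2 * np.eye(M)
        W = np.linalg.solve(A, PX - P1[:, None] * Y)
        T = Y + G @ W        # smooth displacement field applied to the source
        # Update the mixture variance
        sigma2 = (np.sum(Pt1 * np.sum(X * X, axis=1))
                  - 2.0 * np.sum(PX * T)
                  + np.sum(P1 * np.sum(T * T, axis=1))) / (Np * D)
        sigma2 = max(sigma2, 1e-8)  # guard against collapse / round-off
    return T
```

As a usage example, one can take random 2D points `Y`, build a target `X` by applying a smooth warp to them, and check that the mean distance between corresponding points shrinks after `register_nonrigid(X, Y)`. Larger `beta` or `lam` favors smoother, more rigid-looking deformations.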

The algorithm can also be used to create new CG characters automatically, saving labor for CG designers. Figure 3 shows an example application. Source shape (a) and target shape (b) were obtained from a public database and used as inputs to the algorithm. Shape (c), the result of the first registration, shows that the source shape has become similar to the target shape while retaining its own characteristics. Shape (d), the result of the second registration, shows the source shape deformed even closer to the target shape.

Point set registration matters because of its wide range of applications in computer graphics (CG) and computer vision. Face-recognition authentication on smartphones can be interpreted as an application of point set registration. Blending the 3D shapes of two people, known as "morphing," can also be performed through point set registration. In addition, a well-known study restored a 3D face model of the late Audrey Hepburn from a single photograph using a technique that can be interpreted as point set registration. Because point set registration, with this wide variety of applications, can now be performed at very high speed and with high accuracy, the method established in this study is expected to become a core technology in the field.
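Morphing shows why the correspondence matters. Assuming registration has already produced a one-to-one correspondence, so that row i of both arrays describes the same anatomical point (an assumption made for this illustration), the simplest morph is a pointwise linear blend. The `morph` function below is a hypothetical sketch, not part of the published method.

```python
import numpy as np


def morph(source, target, t):
    """Blend two shapes whose rows are already in correspondence (hypothetical helper).

    t = 0 returns the source shape, t = 1 the target, values in between blend them.
    """
    if source.shape != target.shape:
        raise ValueError("shapes must have the same number of corresponding points")
    if not 0.0 <= t <= 1.0:
        raise ValueError("t must lie in [0, 1]")
    # Each point moves along a straight line toward its corresponding point
    return (1.0 - t) * source + t * target
```

Sweeping `t` from 0 to 1 yields a sequence of intermediate shapes; without a correct correspondence, the blend would mix unrelated points and produce a distorted result.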

The method still has room for improvement. Although it is remarkably faster than conventional methods, calculation speed may become a problem when a point set contains millions of points. Prof. Hirose is now developing methods that would solve such large point set registration problems within several minutes, and preliminary results are promising.
