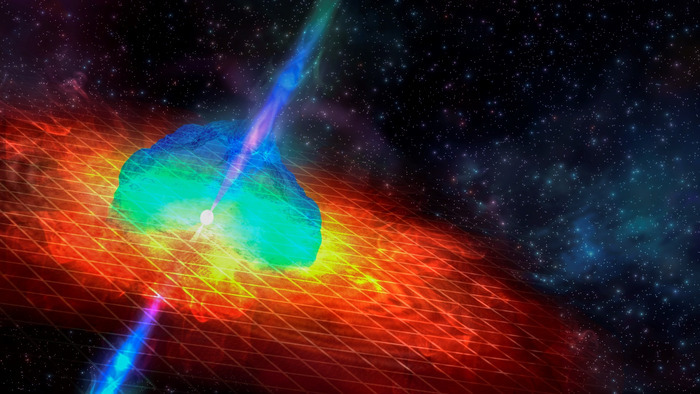

A highly unusual blast of high-energy light from a nearby galaxy has been linked by scientists to a neutron star merger.

The event, detected in December 2021 by NASA’s Neil Gehrels Swift Observatory and the Fermi Gamma-ray Space Telescope, was a gamma-ray burst – an immensely energetic explosion that can last from a few milliseconds to several hours.

This gamma-ray burst, identified as GRB 211211A, lasted about a minute – a relatively lengthy explosion, which would usually signal the collapse of a massive star into a supernova. But this event contained an excess of infrared light and was much fainter and faster-fading than a classical supernova, hinting that something different was going on.

In a new study, an international team of scientists showed that the infrared light detected in the burst came from a kilonova. This is a rare event, thought to be generated when two neutron stars, or a neutron star and a black hole, collide, producing heavy elements such as gold and platinum. Thus far, kilonovae have only been associated with gamma-ray bursts lasting less than two seconds.

The work was led by Jillian Rastinejad at Northwestern University in the US along with physicists from the University of Birmingham and the University of Leicester in the UK, and Radboud University in The Netherlands.

Dr. Matt Nicholl, an Associate Professor at the University of Birmingham, modeled the kilonova emission. “We found that this one event produced about 1,000 times the mass of the Earth in very heavy elements. This supports the idea that these kilonovae are the main factories of gold in the Universe,” he said.

Although up to 10 percent of long gamma-ray bursts are suspected to be caused by the merger of two neutron stars, or of a neutron star and a black hole, no firm evidence – in the form of a kilonova – had previously been identified.

Dr. Gavin Lamb, a post-doctoral researcher at the University of Leicester, explained: “A gamma-ray burst is followed by an afterglow that can last several days. These afterglows behave in a very characteristic manner, and by modeling them we can expose any extra emission components, such as a supernova or a kilonova.”

The kilonova generated by GRB 211211A is the closest to have been discovered without gravitational waves, and has exciting implications for the upcoming gravitational wave observation run, starting in 2023. Its location in a neighboring galaxy only 1 billion light years away allowed scientists to study the properties of the merger in unprecedented detail.

A related paper from the same collaboration in Nature Astronomy, led by Dr. Benjamin Gompertz, Assistant Professor at the University of Birmingham, describes some of these properties.

In particular, the team identified how the jet of high-energy electrons, traveling at almost the speed of light and causing the gamma-ray burst, changed with time. The cooling down of this jet was shown to be responsible for the long-lasting GRB emission.

In the paper, the team also described how close observation of GRB 211211A can offer fascinating insights into other previously unexplained gamma-ray bursts which have appeared not to fit with standard interpretations.

Dr. Gompertz said: “This was a remarkable GRB. We don’t expect mergers to last more than about two seconds. Somehow, this one powered a jet for almost a full minute. It’s possible the behavior could be explained by a long-lasting neutron star, but we can’t rule out that what we saw was a neutron star being ripped apart by a black hole.

“Studying more of these events will help us determine which is the right answer and the detailed information we gained from GRB 211211A will be invaluable for this interpretation.”

The work was funded by the European Research Council under the KilonovaRank project, which harnesses the power of Big Data in investigating large cosmic events.
