A proposed method of profoundly enhancing the energy efficiency of wireless networks unfortunately also suffers from being amongst computationally complex problems to solve. But a computer scientist has for the first time demonstrated that the quantum search algorithm can solve this problem faster than a classical supercomputer.

A novel wireless communications technique that turns the on-off status of parts of the telecommunications medium itself—such as antennas and subcarriers—could deliver super-charged energy efficiency gains, but its optimization suffers from being a problem that counts among the computationally hard class of problems to solve. However, a Yokohama National University located in Yokohama, Japan computer scientist has for the first time demonstrated that the quantum search algorithm can solve this problem faster than a classical supercomputer in terms of query complexity.

The arrival of 5G wireless networks offers a great boost to bandwidth and higher data rates, and potentially enables a wide range of new mobile data applications such as self-driving cars and the internet of things (IoT). At the same time, this explosion in traffic necessitates an enhancement of techniques for improving the efficiency of use of the radio spectrum carrier of all this information and the energy required to power the system.

One innovative method for improving energy efficiency and that has been attracting a great deal of attention in wireless communications circles in recent years is what is known as index modulation.

The technique’s name echoes the terms frequency modulation (FM) or amplitude modulation (AM) used to describe how information such as voices or music was transmitted through space via radio waves for much of the 20th Century. At the sending end, either the frequency or the amplitude of the ‘carrier’ radio wave was instantaneously modified (‘modulated’) by the transmitter to impress information on that wave, similar to how telegraph operators in the 19th Century impressed information in the form of Morse code upon an electric current running through telegraph wires. At the receiving end, the decoding, or ‘demodulation’ of that carrier wave extracted the information embedded in its form, producing sounds that could then be heard by human ears. A third way to modulate a carrier wave beyond altering a radio wave’s frequency or amplitude is by altering its phase.

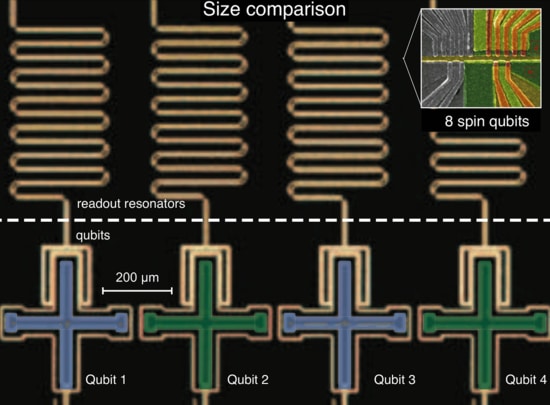

Index modulation (IM) offers a fourth way, or one could say the fourth dimension, of impressing information, but this time via exploitation of the on or off status of its indices. The word index in this case is simply a catch-all term for the infrastructural and operational building blocks of the communications system, such as the transmission antennas, subcarriers, time slots, radiofrequency mirrors, LEDs, and even relays and spreading codes. By switching these various elements on or off, potentially adds another layer of information to transmission, this time in the form of binary digits or bits.

And by turning parts of the system off even as they are conveying information, the sparseness of the transmitted sequence of symbols simplifies the calculation complexity. This also substantially reduces the energy required for a given amount of data that is transferred.

“It’s a very elegant concept, using the activation pattern of the building blocks of the communications system itself to impart information, and leading to a reduction in the complexity of the hardware,” said Associate Professor Naoki Ishikawa, the author of the paper.

But this radical improvement comes with an additional—and substantial—challenge.

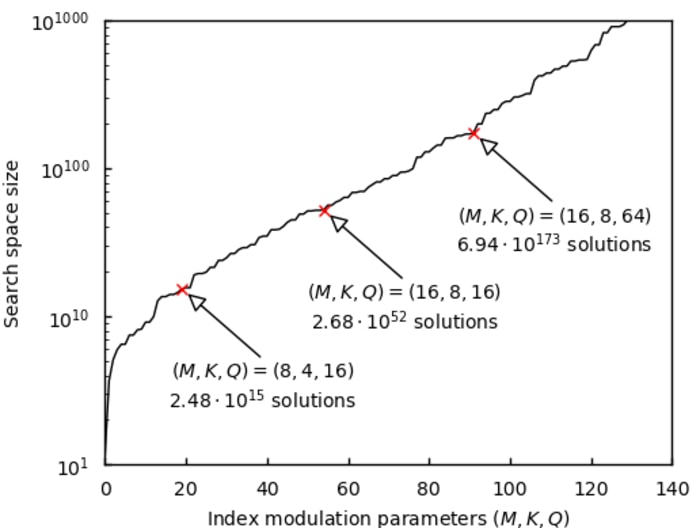

IM requires optimization to determine which indices should be used and when to convey this binary information, and this particular type of optimization happens to be computationally very difficult.

“This optimization problem is what computational complexity theorists term ‘NP-hard’, one of the very hard classes of a problem there is. It leads to what we call a combinatorial explosion,” he added. “So I’ve named this monster of a mathematical challenge the ‘index selection problem’.”

To address the index selection problem, Ishikawa used an algorithm for quantum computing called Grover Adaptive Search (GAS), also known as the quantum search algorithm. Quantum computing may one day offer the ability to perform many types of computations much faster than classical computers.

In the paper, Ishikawa demonstrated for the first time that in principle GAS can solve the index selection problem faster than a classical computer in terms of query complexity.

“This shows that index modulation is compatible with quantum computers because it represents information on and off, resulting in binary variables typically used in quantum computation,” he said.

The use of GAS to solve the index modulation problem remains something of a proof of concept, as fault-tolerant, large-scale quantum supercomputers are years away from being realized. There remain many challenges for industrial applications of existing quantum computers due to their non-negligible noise drowning out many signals. In addition, GAS can provide a quadratic speedup, but the problem of exponential complexity is still unresolved and requires long-term study.

Quadratic speedup occurs when a quantum computer solves a problem through N queries where a classical computer would need to take, for example, N * N = N^2 queries. Exponential speedup occurs where a quantum computer solves a problem through N queries where a classical computer would take 2^ N queries. So if N is a large value, then the difference in terms of query complexity would become larger too.

Nevertheless, the demonstration of quantum speedup achieved by GAS has the potential to solve many other problems in society, not just the index selection problem.

How to resolve AdBlock issue?

How to resolve AdBlock issue?