Supercomputer simulations conducted by astrophysicists at Tohoku University in Japan have led to a new theory for the origin of supermassive black holes. In this theory, the precursors of supermassive black holes grow by swallowing up not only interstellar gas but also smaller stars. This helps to explain the large number of supermassive black holes observed today.

Almost every galaxy in the modern Universe has a supermassive black hole at its center. Their masses can sometimes reach up to 10 billion times the mass of the Sun. However, their origin is still one of the great mysteries of astronomy. A popular theory is the direct collapse model, in which primordial clouds of interstellar gas collapse under self-gravity to form supermassive stars, which then evolve into supermassive black holes. But previous studies have shown that direct collapse works only with pristine gas consisting solely of hydrogen and helium. Heavier elements such as carbon and oxygen change the gas dynamics, causing the collapsing gas to fragment into many smaller clouds which form small stars of their own, rather than a few supermassive stars. Direct collapse from pristine gas alone can't explain the large number of supermassive black holes seen today.

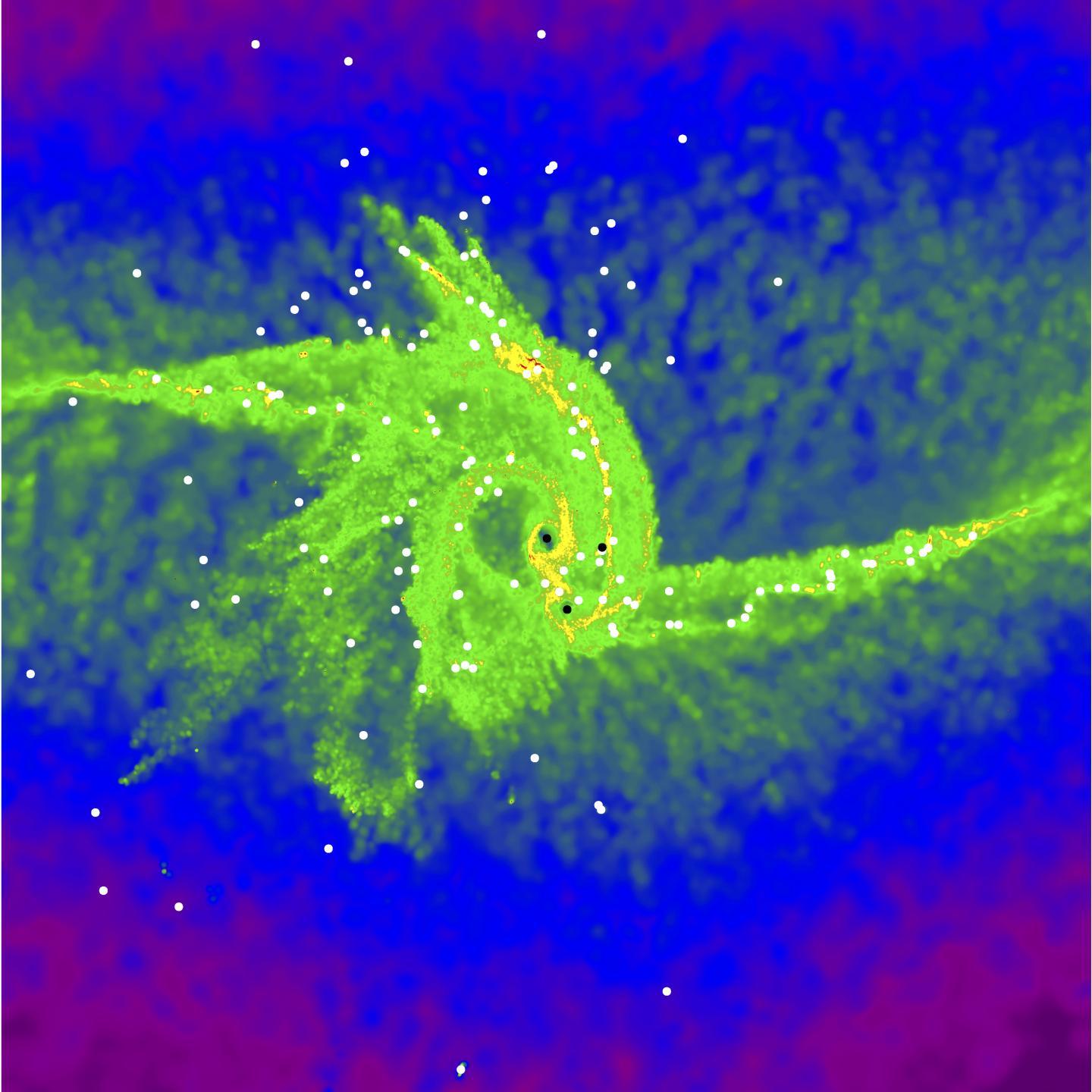

Sunmyon Chon, a postdoctoral fellow at the Japan Society for the Promotion of Science and Tohoku University, and his team used the National Astronomical Observatory of Japan's supercomputer "ATERUI II" to perform long-term, high-resolution 3D simulations to test the possibility that supermassive stars could form even in heavy-element-enriched gas. Star formation in gas clouds that include heavy elements has been difficult to simulate because of the computational cost of following the violent splitting of the gas, but advances in supercomputing power, specifically the high calculation speed of "ATERUI II", commissioned in 2018, allowed the team to overcome this challenge. These new simulations make it possible to study the formation of stars from gas clouds in more detail.

Contrary to previous predictions, the research team found that supermassive stars can still form from heavy-element-enriched gas clouds. As expected, the gas cloud breaks up violently and many smaller stars form. However, there is a strong gas flow towards the center of the cloud; the smaller stars are dragged along by this flow and are swallowed up by the massive stars in the center. The simulations resulted in the formation of a massive star 10,000 times more massive than the Sun. "This is the first time that we have shown the formation of such a large black hole precursor in clouds enriched in heavy elements. We believe that the giant star thus formed will continue to grow and evolve into a giant black hole," says Chon.

This new model shows that not only primordial gas but also gas containing heavy elements can form giant stars, which are the seeds of black holes. "Our new model is able to explain the origin of more black holes than the previous studies, and this result leads to a unified understanding of the origin of supermassive black holes," says Kazuyuki Omukai, a professor at Tohoku University.
