Launching in August 2022 and arriving at the asteroid belt in 2026, NASA’s Psyche spacecraft will orbit a world we can barely pinpoint from Earth and have never visited.

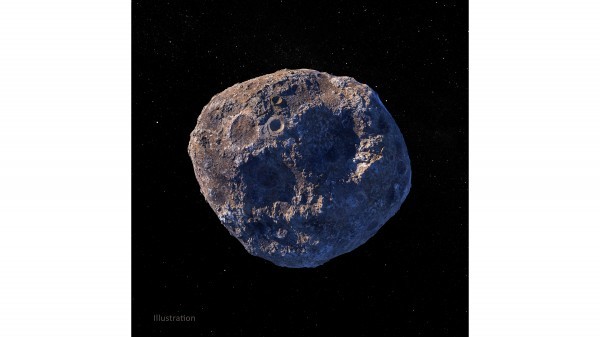

The target of NASA’s Psyche mission – a metal-rich asteroid, also called Psyche, in the main belt between Mars and Jupiter – is an uncharted world in outer space. From Earth- and space-based telescopes, the asteroid appears as a fuzzy blur. What scientists do know, from radar data, is that it’s shaped somewhat like a potato and that it spins on its side.

By analyzing light reflected off the asteroid, scientists hypothesize that asteroid Psyche is unusually rich in metal. One possible explanation is that it formed early in our solar system, either as a core of a planetesimal – a piece of a planet – or as primordial material that never melted. The mission aims to find out which, and in the process, scientists expect to help answer fundamental questions about the formation of our solar system.

“If it turns out to be part of a metal core, it would be part of the very first generation of early cores in our solar system,” said Arizona State University’s Lindy Elkins-Tanton, who as principal investigator leads the Psyche mission. “But we don’t really know, and we won’t know anything for sure until we get there. We wanted to ask primary questions about the material that built planets. We’re filled with questions and not a lot of answers. This is real exploration.”

Elkins-Tanton led the group that proposed Psyche as a NASA Discovery-class mission; it was selected in 2017. A huge challenge, she said, was choosing the mission’s science instruments: How do you make sure you’ll get the data you need when you’re not sure of what, specifically, you’ll be measuring?

For example, to determine what exactly the asteroid is made of and whether it’s part of a planetesimal core, scientists needed instruments that could account for a range of possibilities: nickel, iron, different kinds of rock, or rock and metal mixed.

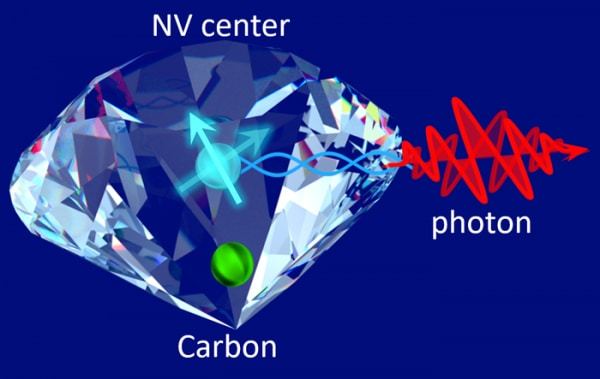

They selected a payload suite that includes a magnetometer to measure any magnetic field; imagers to photograph and map the surface; and spectrometers to indicate what the surface is made of by measuring the gamma rays and neutrons emitted from it. Scientists continue to hypothesize about what Psyche is made of, but “no one’s been able to come up with a Psyche that we can’t handle with the science instruments we have,” Elkins-Tanton said.

How to Tour an Unknown World

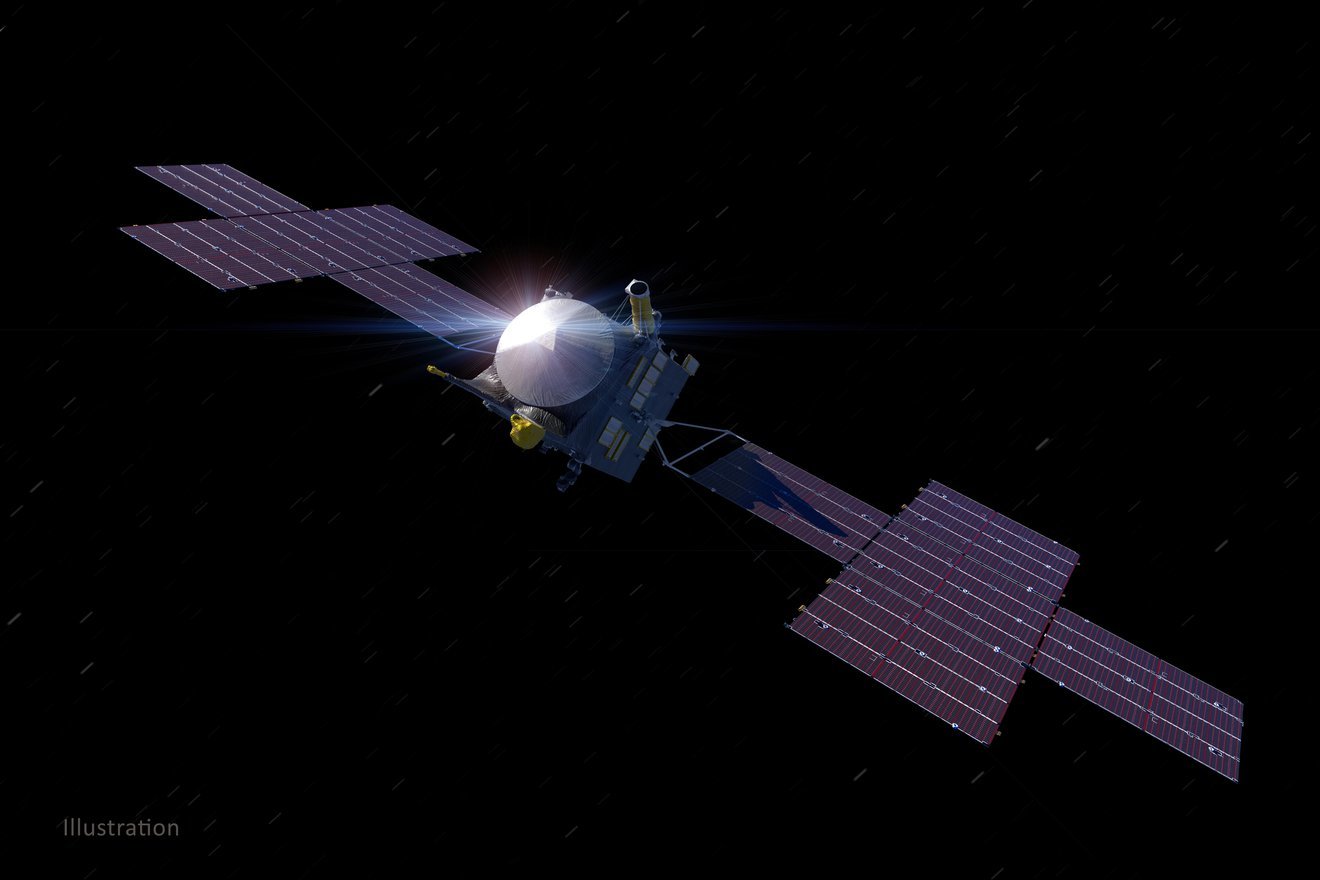

But before scientists can put those instruments to work, they’ll need to reach the asteroid and get into orbit. After launching from NASA’s Kennedy Space Center in August 2022, Psyche will sail past Mars nine months later, using the planet’s gravitational force to slingshot itself toward the asteroid. It’s a total journey of about 1.5 billion miles (2.4 billion kilometers).

The spacecraft will begin its final approach to the asteroid in late 2025. As the spacecraft gets closer to its target, the mission team will turn its cameras on, and the view of asteroid Psyche will sharpen from the fuzzy blob we know now into high-definition imagery, revealing surface features of this strange world for the first time. The imagery also will help engineers get their bearings as they prepare to slip into orbit in January 2026. The spacecraft’s initial orbit is designed to be at a high, safe altitude – about 435 miles (700 kilometers) above the asteroid’s surface.

During this first orbit, Psyche’s mission design and navigation team will be laser-focused on measuring the asteroid’s gravity field, the force that will keep the spacecraft in orbit. With an understanding of the gravity field, the team can then safely navigate the spacecraft closer and closer to the surface as the science mission is carried out in just under two years.

Psyche appears to be lumpy, wider across (173 miles, or 280 kilometers, at its widest point) than it is from top to bottom, with an uneven distribution of mass. Some parts may be less dense, like a sponge, and some may be more tightly packed and more massive. The parts of Psyche with more mass will have stronger gravity, exerting a greater pull on the spacecraft.

To solve the gravity-field mystery, the mission team will use the spacecraft’s telecommunications system. By measuring subtle changes in the X-band radio waves bouncing back and forth between the spacecraft and the large Deep Space Network antennas around Earth, engineers can precisely determine the asteroid’s mass, gravity field, rotation, orientation, and wobble.
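The idea behind this kind of radio tracking can be illustrated with the standard two-way Doppler approximation: a small change in the spacecraft's line-of-sight speed shows up as a small change in the frequency of the returned signal. The sketch below is only an illustration. The carrier frequency and the velocity change are assumed round numbers, not mission figures, and the function name is hypothetical.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0  # exact, by definition of the metre


def two_way_doppler_shift_hz(carrier_hz: float, radial_velocity_m_s: float) -> float:
    """Magnitude of the two-way Doppler shift for speeds far below light speed.

    The signal travels Earth -> spacecraft -> Earth, so the shift is roughly
    twice the one-way value: delta_f ~= 2 * f * v / c.
    """
    return 2.0 * carrier_hz * abs(radial_velocity_m_s) / SPEED_OF_LIGHT_M_S


# Assumed illustrative values (not taken from the article):
ASSUMED_X_BAND_HZ = 8.4e9         # a typical X-band frequency
ASSUMED_VELOCITY_CHANGE = 1.0e-3  # 1 millimetre per second along the line of sight

shift = two_way_doppler_shift_hz(ASSUMED_X_BAND_HZ, ASSUMED_VELOCITY_CHANGE)
print(f"Frequency shift: {shift:.3f} Hz")
```

Under these assumptions, a velocity change of a millimetre per second corresponds to a shift of only a small fraction of a hertz on a carrier of billions of hertz, which gives a sense of why the changes are described as subtle.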

The team has been working up scenarios and has devised thousands of “possible Psyches” – simulating variations in the asteroid’s density and mass, and orientation of its spin axis – to lay the groundwork for the orbital plan. They can test their models in supercomputer simulations, but there’s no way to know for sure until the spacecraft gets there.

Over the following 20 months, the spacecraft will use its gentle electric propulsion system to dip into lower and lower orbits. Measurements of the gravity field will grow more precise as the spacecraft gets closer, and images of the surface will become higher resolution, allowing the team to improve their understanding of the body. Eventually, the spacecraft will establish a final orbit about 53 miles (85 kilometers) above the surface.
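To get a rough feel for what the high and low orbits mean, Kepler's third law gives the period of a circular orbit around a body of known mass. The sketch below applies it to the 700-kilometer and 85-kilometer altitudes mentioned in the article. It treats the asteroid as a uniform sphere, which the article makes clear it is not, and the mass and mean radius are placeholder assumptions chosen for illustration, not values from the article or the mission team.

```python
import math

GRAVITATIONAL_CONSTANT = 6.674e-11  # m^3 kg^-1 s^-2


def circular_orbit_period_hours(
    altitude_km: float, body_mass_kg: float, body_radius_km: float
) -> float:
    """Period of a circular orbit around a spherical body, from Kepler's third law.

    T = 2 * pi * sqrt(a^3 / (G * M)), where a is the distance from the body's center.
    """
    orbit_radius_m = (body_radius_km + altitude_km) * 1000.0
    period_s = 2.0 * math.pi * math.sqrt(
        orbit_radius_m**3 / (GRAVITATIONAL_CONSTANT * body_mass_kg)
    )
    return period_s / 3600.0


# Placeholder assumptions (hypothetical, for illustration only):
ASSUMED_MASS_KG = 2.3e19       # assumed asteroid mass
ASSUMED_MEAN_RADIUS_KM = 110.0  # assumed mean radius of an equivalent sphere

for altitude_km in (700.0, 85.0):  # altitudes quoted in the article
    hours = circular_orbit_period_hours(
        altitude_km, ASSUMED_MASS_KG, ASSUMED_MEAN_RADIUS_KM
    )
    print(f"{altitude_km:.0f} km altitude: about {hours:.1f} hours per orbit")
```

Because the real asteroid is lumpy and its mass is unevenly distributed, actual orbits would deviate from this idealized picture, which is exactly why the team measures the gravity field before descending.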

It’s all to solve the riddles of this unique asteroid: Where did Psyche come from, what is it made of, and what does it tell us about the formation of our solar system?

“Humans have always been explorers,” Elkins-Tanton said. “We’ve always set out from where we are to find out what is over that hill. We always want to go farther; we always want to imagine. It’s inherent in us. We don’t know what we’re going to find, and I’m expecting us to be entirely surprised.”
