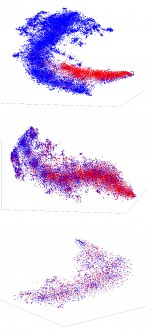

Breast cancer is among the most common cancers and has one of the highest mortality rates. Swift detection and diagnosis diminish the impact of the disease. However, classifying breast cancer from histopathology images (tissues and cells examined under a microscope) is challenging because the data are biased and large annotated datasets are scarce. Automatic detection of breast cancer using convolutional neural networks (CNNs), a machine learning technique, has shown promise, but it carries a high risk of false positives and false negatives.

Without any measure of confidence, such false predictions by a CNN could lead to catastrophic outcomes. But a new machine learning model developed by Michigan Technological University researchers can evaluate the uncertainty in its predictions as it classifies benign and malignant tumors, helping reduce this risk.

In their study, mechanical engineering graduate students Ponkrshnan Thiagarajan and Pushkar Khairnar, together with Susanta Ghosh, assistant professor of mechanical engineering and machine learning expert, outline their novel probabilistic machine learning model, which outperforms similar models.

“Any machine learning algorithm that has been developed so far will have some uncertainty in its prediction,” Thiagarajan said. “There is little way to quantify those uncertainties. Even if an algorithm tells us a person has cancer, we do not know the level of confidence in that prediction.”

From Experience Comes Confidence

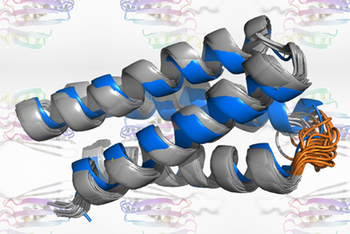

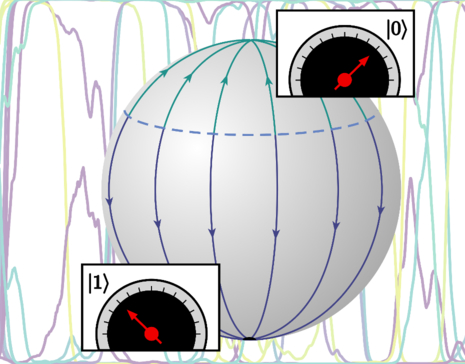

In the medical context, not knowing how confident an algorithm is has made it difficult to rely on computer-generated predictions. The present model is an extension of the Bayesian neural network, a machine learning model that can evaluate an image and produce an output. The parameters of this model are treated as random variables rather than fixed values, which makes it possible to quantify the uncertainty in each prediction.

The Michigan Tech model differentiates between negative and positive classes by analyzing the images, which at their most basic level are collections of pixels. In addition to this classification, the model can measure the uncertainty in its predictions.
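To make the idea of random-variable parameters concrete, here is a minimal sketch in Python. It is not the researchers' model: it assumes a toy logistic classifier over three made-up image features, with independent Gaussian distributions over its weights, and every name in it (`predict_with_uncertainty`, `weight_mean`, `weight_std`) is hypothetical. The point is only that sampling the weights many times yields a spread of predictions, and that spread can be read as uncertainty.

```python
import numpy as np

def predict_with_uncertainty(x, weight_mean, weight_std, n_samples=200, seed=0):
    """Toy Bayesian logistic classifier (illustrative assumption, not the study's model).

    Weights are drawn from independent Gaussians; one forward pass is run per
    draw, and the spread of the resulting probabilities is summarized.
    """
    rng = np.random.default_rng(seed)
    # Each row is one plausible set of weights drawn from the assumed distribution.
    weights = rng.normal(weight_mean, weight_std, size=(n_samples, weight_mean.size))
    logits = weights @ x
    probs = 1.0 / (1.0 + np.exp(-logits))  # P(malignant) under each weight draw
    mean_prob = float(probs.mean())
    # Binary entropy of the averaged prediction, in bits (0 = certain, 1 = maximally unsure).
    p = np.clip(mean_prob, 1e-12, 1 - 1e-12)
    entropy = float(-(p * np.log2(p) + (1 - p) * np.log2(1 - p)))
    return mean_prob, float(probs.std()), entropy

# Hypothetical feature vector and weight means, for illustration only.
x = np.array([1.0, -0.5, 2.0])
weight_mean = np.array([0.8, 0.1, 1.2])

narrow = predict_with_uncertainty(x, weight_mean, weight_std=0.05)  # weights well determined
wide = predict_with_uncertainty(x, weight_mean, weight_std=2.0)     # weights poorly determined
print("narrow:", narrow)
print("wide:  ", wide)
```

When the weights are tightly determined, the sampled predictions agree and the reported spread is small; when the weights are poorly determined, the same image produces scattered predictions and a higher entropy.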

In a medical laboratory, such a model promises time savings by classifying images faster than a lab tech. And, because the model can evaluate its level of certainty, it can refer the images to a human expert when it is less confident.
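The referral step described above could look something like the following sketch. It assumes the model reports, for each image, a probability of malignancy and an uncertainty score between 0 and 1; the function name `triage` and the 0.5 threshold are hypothetical choices for illustration, not values from the study.

```python
def triage(prob_malignant, uncertainty, uncertainty_threshold=0.5):
    """Return a label when the model is confident, otherwise defer to a human.

    The threshold is an illustrative assumption; in practice it would be
    chosen with clinicians and validated on held-out data.
    """
    if uncertainty > uncertainty_threshold:
        return "refer to expert"
    return "malignant" if prob_malignant >= 0.5 else "benign"

# Hypothetical (probability, uncertainty) pairs for three images.
cases = [(0.97, 0.10), (0.03, 0.10), (0.55, 0.90)]
decisions = [triage(p, u) for p, u in cases]
print(decisions)
```

A lower threshold sends more images to the expert and fewer to automatic classification, so the setting trades lab time against the risk of acting on an unsure prediction.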

But why is a mechanical engineer creating algorithms for the medical community? Thiagarajan’s idea was kindled when he started using machine learning to reduce the computational time needed for mechanical engineering problems. Whether a computation evaluates the deformation of building materials or determines whether someone has breast cancer, it’s important to know the uncertainty of that computation. The key ideas remain the same.

“Breast cancer is one of the cancers that have the highest mortality and highest incidence,” Thiagarajan said. “We believe that this is an exciting problem wherein better algorithms can make an impact on people’s lives directly.”

Next Steps

Now that their study has been published, the researchers will extend the model for multiclass classification of breast cancer. They will aim to detect cancer subtypes in addition to classifying benign and malignant tissues. And the model, though developed using breast cancer histopathology images, can also be extended for other medical diagnoses.

“Despite the promise of machine learning-based classification models, their predictions suffer from uncertainties due to the inherent randomness and the bias in the data and the scarcity of large datasets,” Ghosh said. “Our work attempts to address these issues and quantifies, uses, and explains the uncertainty.”

Ultimately, the model developed by Thiagarajan, Khairnar, and Ghosh, which can evaluate whether images have high or low measures of uncertainty and identify when images need the eyes of a medical expert, represents a next step for machine learning.
