Researchers at Delft University of Technology in the Netherlands have demonstrated for the first time that spin waves on a microchip can be controlled and manipulated using superconductors. Spin waves could serve as an energy-efficient alternative to electronics, with possible applications in energy-efficient information technology and quantum computing. In the study, a superconducting electrode acts as a mirror for the spin wave: it reflects the wave's magnetic field back to the wave, which allows the wave to be manipulated with precision.

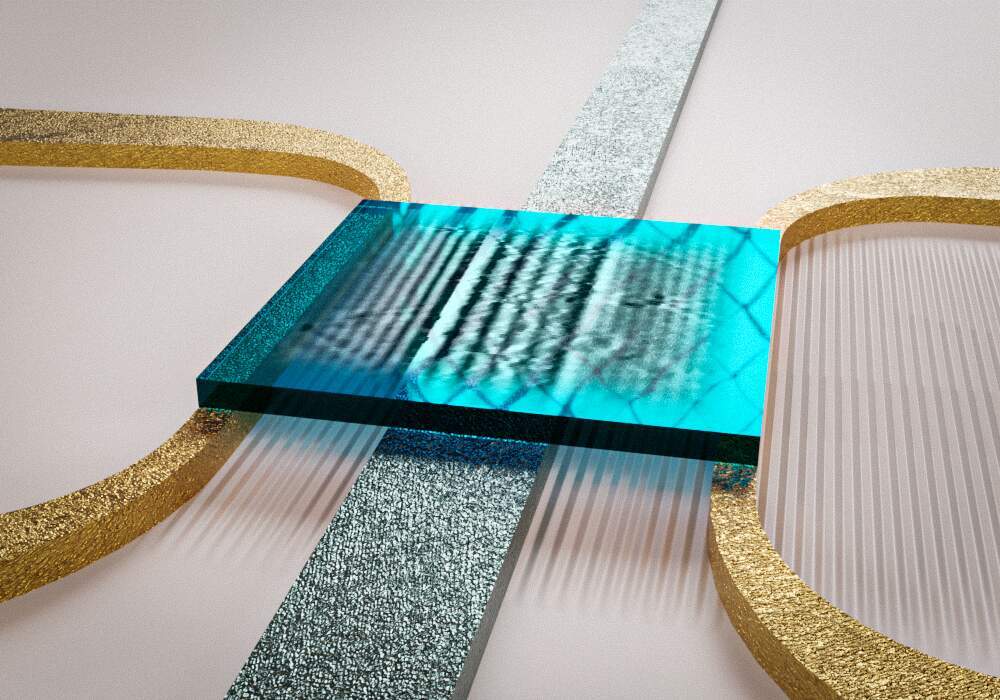

Spin waves are magnetic waves that can be used to transfer information, says Michael Borst, who led the experiment. They are a promising alternative to electronics, and scientists have been trying to control and manipulate them for years. Metal electrodes were predicted to offer a way of controlling spin waves, but until now physicists had not been able to observe such effects in experiments.

The research team has shown that spin waves can be controlled with a superconducting electrode. A spin wave generates a magnetic field, which in turn induces a supercurrent in the superconductor. That supercurrent acts as a mirror for the spin wave. The superconducting mirror slows the up-and-down motion of the spin waves, making them easier to control. The wavelength of the spin waves changes completely when they pass under the superconducting electrode, and by varying the temperature of the electrode slightly, the magnitude of the change can be precisely adjusted.

The experiment used a thin magnetic layer of yttrium iron garnet (YIG), which is known as the best magnet on Earth. On top of this layer the team placed a superconducting electrode, along with a second electrode to induce the spin waves. Cooled to -268 degrees Celsius, the electrode entered a superconducting state, which made it possible to manipulate the spin waves.

The researchers used a unique sensor to image the spin waves. This was essential to the experiment. They used electrons in diamonds as sensors for the magnetic fields of the spin waves. Their lab is pioneering that technique. The cool thing about it is that they can look through the opaque superconductor at the spin waves underneath, just like an MRI scanner can look through the skin into someone's body.

According to Borst, spin-wave technology is still in its infancy. Making energy-efficient computers with this technology will first require building small circuits that can perform calculations. Because superconducting electrodes can now be used to control spin waves, the discovery opens the door to countless new, energy-efficient spin-wave circuits.

Van der Sar added that they can now design devices based on spin waves and superconductors that produce little heat and sound waves. Examples include spintronic versions of frequency filters or resonators, components found in the electronic circuits of cell phones, as well as circuits that can serve as transistors or as connectors between qubits in a quantum computer.
