The researchers, from the University of Cambridge, found that it may be possible to detect and measure dark energy by studying Andromeda, our galactic next-door neighbour that is on a slow-motion collision course with the Milky Way.

Since dark energy was first identified in the late 1990s, scientists have used very distant galaxies to study it but have yet to detect it directly. However, the Cambridge researchers found that by studying how Andromeda and the Milky Way are moving toward each other given their combined mass, they could place an upper limit on the value of the cosmological constant, the simplest model of dark energy. The upper limit they found is five times higher than the value of the cosmological constant that can be detected from the early universe.

Although the technique is still early in its development, the researchers say that it could be possible to detect dark energy by studying our cosmic neighbourhood.

Everything we can see in our world and the skies – from tiny insects to massive galaxies – makes up just 5% of the observable universe. The rest is dark: scientists believe that about 27% of the universe is made of dark matter, which holds objects together, while 68% is dark energy, which pushes objects apart.

“Dark energy is a general name for a family of models you could add to Einstein’s theory of gravity,” said first author Dr David Benisty from the Department of Applied Mathematics and Theoretical Physics. “The simplest version of this is known as the cosmological constant: a constant energy density that pushes galaxies away from each other.”

Einstein temporarily added the cosmological constant to his theory of general relativity. From the 1930s to the 1990s, the cosmological constant was set at zero, until it was discovered that an unknown force – dark energy – was causing the expansion of the universe to accelerate. There are at least two big problems with dark energy, however: we don’t know exactly what it is, and we haven’t directly detected it.

Since dark energy was first identified, astronomers have developed various methods to detect it, most of which involve studying objects from the early universe and measuring how quickly they are moving away from us. Unpacking the effects of dark energy from billions of years ago is not easy: because it acts only weakly between galaxies, dark energy is easily overcome by the much stronger forces inside galaxies.

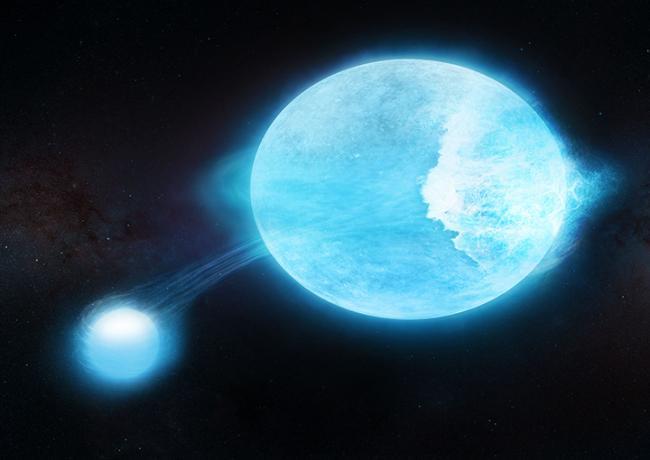

However, there is one region of the universe that is surprisingly sensitive to dark energy, and it’s in our cosmic backyard. The Andromeda galaxy is the closest to our own Milky Way, and the two galaxies are on a collision course. As they draw closer, the two galaxies will start to orbit each other – very slowly. A single orbit will take 20 billion years. However, due to the massive gravitational forces, well before a single orbit is complete, about five billion years from now, the two galaxies will start merging and falling into each other.

“Andromeda is the only galaxy that isn’t running away from us, so by studying its mass and movement, we may be able to make some determinations about the cosmological constant and dark energy,” said Benisty, who is also a Research Associate at Queens’ College.

Using supercomputer simulations based on the best available estimates of the mass of both galaxies, Benisty and his co-authors – Professor Anne Davis from DAMTP and Professor Wyn Evans from the Institute of Astronomy – found that dark energy is affecting how Andromeda and the Milky Way are orbiting each other.

“Dark energy affects every pair of galaxies: gravity wants to pull galaxies together, while dark energy pushes them apart,” said Benisty. “In our model, if we change the value of the cosmological constant, we can see how that changes the orbit of the two galaxies. Based on their mass, we can place an upper bound on the cosmological constant, which is about five times higher than we can measure from the rest of the universe.”
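The tug-of-war Benisty describes can be illustrated with a textbook two-body approximation. The sketch below is not the researchers' supercomputer simulation. It assumes the two galaxies act as point masses on a radial path, with relative acceleration a(r) = -GM/r² + (Λc²/3)·r, where M is the combined mass and Λ the cosmological constant. The combined mass and separation are round illustrative values, and the function names are hypothetical.

```python
# Physical constants (SI units)
G = 6.674e-11      # gravitational constant, m^3 kg^-1 s^-2
C = 2.998e8        # speed of light, m/s
M_SUN = 1.989e30   # solar mass, kg
KPC = 3.086e19     # one kiloparsec, m


def relative_acceleration(r_m, total_mass_kg, lam):
    """Radial acceleration between two point masses (negative = inward).

    Newtonian attraction plus the repulsive term from a cosmological
    constant `lam` (in m^-2). This is a simplified toy model.
    """
    gravity = -G * total_mass_kg / r_m**2
    dark_energy = lam * C**2 * r_m / 3.0
    return gravity + dark_energy


def balance_radius(total_mass_kg, lam):
    """Separation at which the pull and the push cancel exactly."""
    return (3.0 * G * total_mass_kg / (lam * C**2)) ** (1.0 / 3.0)


# Illustrative assumptions, not values taken from the study:
total_mass = 4e12 * M_SUN   # assumed combined mass of the two galaxies
separation = 770 * KPC      # approximate present-day distance to Andromeda
lam_cosmo = 1.1e-52         # cosmological constant from cosmology, m^-2

# Vary the cosmological constant and watch the net pull weaken.
for factor in (0, 1, 5):
    a = relative_acceleration(separation, total_mass, factor * lam_cosmo)
    print(f"Lambda x {factor}: net acceleration = {a:.3e} m/s^2")

r0 = balance_radius(total_mass, lam_cosmo)
print(f"Balance radius: {r0 / KPC:.0f} kpc")
```

Under these assumptions, a larger cosmological constant weakens the net inward pull at a fixed separation. That is the qualitative reason the observed motion of the pair, together with its mass, can cap how large the constant can be.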

The researchers say that while the technique could prove immensely valuable, it is not yet a direct detection of dark energy. Data from the James Webb Space Telescope (JWST) will provide far more accurate measurements of Andromeda’s mass and motion, which could help lower the upper bound on the cosmological constant.

In addition, by studying other pairs of galaxies, it could be possible to further refine the technique and determine how dark energy affects our universe. “Dark energy is one of the biggest puzzles in cosmology,” said Benisty. “It could be that its effects vary over distance and time, but we hope this technique could help unravel the mystery.”

The Cambridge work offers a new way to constrain dark energy using nearby galaxies rather than the distant universe. It is not yet a direct detection, but with more precise measurements of Andromeda and studies of other galaxy pairs, the method could tighten the limit on the cosmological constant.
