The new model can envision universes with unique parameters, such as extra dark matter, even without receiving training data in which those parameters varied

For the first time, astrophysicists have used artificial intelligence techniques to generate complex 3D supercomputer simulations of the universe. The results are so fast, accurate and robust that even the creators aren't sure how it all works.

"We can run these simulations in a few milliseconds, while other 'fast' simulations take a couple of minutes," says study co-author Shirley Ho, a group leader at the Flatiron Institute's Center for Computational Astrophysics in New York City and an adjunct professor at Carnegie Mellon University. "Not only that, but we're much more accurate."

The speed and accuracy of the project, called the Deep Density Displacement Model, or D3M for short, wasn't the biggest surprise to the researchers. The real shock was that D3M could accurately simulate how the universe would look if certain parameters were tweaked, such as how much of the cosmos is dark matter, even though the model had never received any training data where those parameters varied.

"It's like teaching image recognition software with lots of pictures of cats and dogs, but then it's able to recognize elephants," Ho explains. "Nobody knows how it does this, and it's a great mystery to be solved."

Ho and her colleagues present D3M June 24 in the Proceedings of the National Academy of Sciences. The study was led by Siyu He, a Flatiron Institute research analyst.

Ho and He worked in collaboration with Yin Li of the Berkeley Center for Cosmological Physics at the University of California, Berkeley, and the Kavli Institute for the Physics and Mathematics of the Universe near Tokyo; Yu Feng of the Berkeley Center for Cosmological Physics; Wei Chen of the Flatiron Institute; Siamak Ravanbakhsh of the University of British Columbia in Vancouver; and Barnabás Póczos of Carnegie Mellon University.

Computer simulations like those made by D3M have become essential to theoretical astrophysics. Scientists want to know how the cosmos might evolve under various scenarios, such as if the dark energy pulling the universe apart varied over time. Such studies require running thousands of simulations, making a lightning-fast and highly accurate computer model one of the major objectives of modern astrophysics.

D3M models how gravity shapes the universe. The researchers opted to focus on gravity alone because it is by far the most important force when it comes to the large-scale evolution of the cosmos.

The most accurate universe simulations calculate how gravity shifts each of billions of individual particles over the entire age of the universe. That level of accuracy takes time, requiring around 300 computation hours for one simulation. Faster methods can finish the same simulations in about two minutes, but the shortcuts required result in lower accuracy.

Ho, He and their colleagues honed the deep neural network that powers D3M by feeding it 8,000 different simulations from one of the highest-accuracy models available. Neural networks take training data and run calculations on the information; researchers then compare the resulting outcome with the expected outcome. With further training, neural networks adapt over time to yield faster and more accurate results.
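
The predict, compare and adjust cycle described above can be illustrated with a deliberately tiny example. This is a minimal sketch, not D3M's architecture or training code: the one-weight model, the toy data, the learning rate and the name `train` are all assumptions made for illustration.

```python
# Toy illustration of the predict / compare / adjust training cycle.
# Hypothetical example: a single weight w is fitted so that w * x matches y.
# This is NOT the D3M network; it only shows the general idea.

def train(xs, ys, learning_rate=0.01, steps=200):
    w = 0.0  # start with an uninformed guess
    n = len(xs)
    for _ in range(steps):
        # Run the calculation: predictions from the current weight.
        predictions = [w * x for x in xs]
        # Compare with the expected outcome: gradient of the mean squared error.
        gradient = sum(2 * (p - y) * x for p, y, x in zip(predictions, ys, xs)) / n
        # Adjust the weight to reduce the error.
        w -= learning_rate * gradient
    return w

# Toy training data generated by the rule y = 3 * x.
inputs = [1.0, 2.0, 3.0, 4.0]
targets = [3.0, 6.0, 9.0, 12.0]

learned_w = train(inputs, targets)
print(round(learned_w, 3))
```

With enough steps the learned weight settles close to 3, the value used to generate the toy data. A real deep network repeats the same cycle over millions of weights rather than one.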

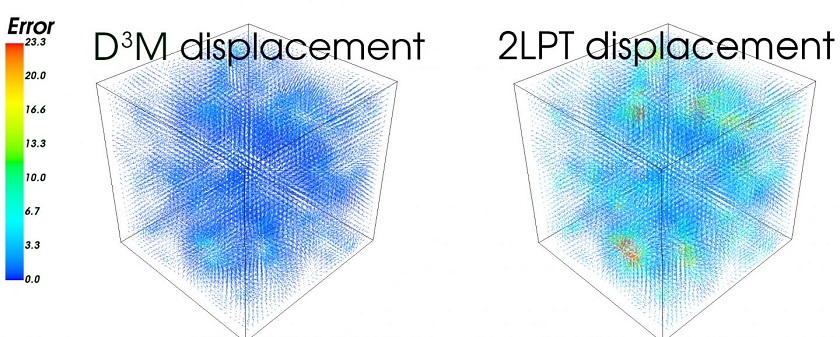

After training D3M, the researchers ran simulations of a box-shaped universe 600 million light-years across and compared the results to those of the slow and fast models. Whereas the slow-but-accurate approach took hundreds of hours of computation time per simulation and the existing fast method took a couple of minutes, D3M could complete a simulation in just 30 milliseconds.
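
To put those timings side by side, the arithmetic can be sketched as follows. The figures are the rounded ones quoted in this article (about 300 computation hours, about two minutes, 30 milliseconds); the constant and function names are illustrative, and computation hours may not correspond exactly to elapsed time, so the ratios are rough.

```python
# Rough speed comparison using the rounded timings quoted in the article.
# All durations are expressed in whole milliseconds to keep the arithmetic exact.

SLOW_MS = 300 * 60 * 60 * 1000  # about 300 computation hours
FAST_MS = 2 * 60 * 1000         # about two minutes
D3M_MS = 30                     # 30 milliseconds

def speedup(baseline_ms: int, new_ms: int) -> int:
    """Return how many times shorter new_ms is than baseline_ms."""
    return baseline_ms // new_ms

print(speedup(SLOW_MS, D3M_MS))  # versus the slow-but-accurate approach
print(speedup(FAST_MS, D3M_MS))  # versus the existing fast method
```

On these rounded numbers, D3M comes out about 4,000 times faster than the existing fast method and tens of millions of times faster than the high-accuracy approach.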

D3M also churned out accurate results. When compared with the high-accuracy model, D3M had a relative error of 2.8 percent. Using the same comparison, the existing fast model had a relative error of 9.3 percent.
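
For readers unfamiliar with the term, a relative error expresses the size of a model's mistake as a fraction of the quantity it is trying to reproduce. The sketch below uses one common definition (the length of the difference divided by the length of the reference); the exact metric used in the study may differ, and the sample numbers are made up for illustration.

```python
import math

def relative_error(predicted, reference):
    """Norm of (predicted - reference) divided by the norm of reference.

    One common definition of relative error; assumed here for illustration,
    not taken from the study.
    """
    diff_norm = math.sqrt(sum((p - r) ** 2 for p, r in zip(predicted, reference)))
    ref_norm = math.sqrt(sum(r ** 2 for r in reference))
    return diff_norm / ref_norm

# Made-up values: a reference result and a prediction that is slightly off.
reference = [3.0, 4.0]
predicted = [3.0, 4.5]

print(f"{relative_error(predicted, reference):.1%}")
```

Under this definition, a result of 2.8 percent means the prediction differs from the high-accuracy reference by less than three hundredths of the reference's overall size.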

D3M's remarkable ability to handle parameter variations not found in its training data makes it an especially useful and flexible tool, Ho says. In addition to modeling other forces, such as hydrodynamics, Ho's team hopes to learn more about how the model works under the hood. Doing so could yield benefits for the advancement of artificial intelligence and machine learning, Ho says.

"We can be an interesting playground for a machine learner to use to see why this model extrapolates so well, why it extrapolates to elephants instead of just recognizing cats and dogs," she says. "It's a two-way street between science and deep learning."
