With the help of machine-learning techniques, a team of astronomers has discovered a dozen quasars that have been warped by a naturally occurring cosmic "lens" and split into four similar images. Quasars are extremely luminous cores of distant galaxies that are powered by supermassive black holes.

Over the past four decades, astronomers had found about 50 of these "quadruply imaged quasars," or quads for short, which occur when the gravity of a massive galaxy that happens to sit in front of a quasar splits its single image into four. The latest study, which spanned only a year and a half, increases the number of known quads by about 25 percent and demonstrates the power of machine learning to assist astronomers in their search for these cosmic oddities.

"The quads are gold mines for all sorts of questions. They can help determine the expansion rate of the universe, and help address other mysteries, such as dark matter and quasar 'central engines,'" says Daniel Stern, lead author of the new study and a research scientist at the Jet Propulsion Laboratory, which is managed by Caltech for NASA. "They are not just needles in a haystack but Swiss Army knives because they have so many uses."

The findings, to be published in The Astrophysical Journal, were made by combining machine-learning tools with data from several ground- and space-based telescopes, including the European Space Agency's Gaia mission; NASA's Wide-field Infrared Survey Explorer (or WISE); the W. M. Keck Observatory on Maunakea, Hawaii; Caltech's Palomar Observatory; the European Southern Observatory's New Technology Telescope in Chile; and the Gemini South telescope in Chile.

Cosmological Dilemma

In recent years, a discrepancy has emerged over the precise value of the universe's expansion rate, also known as Hubble's constant. Two primary means can be used to determine this number: one relies on measurements of the distance and speed of objects in our local universe, and the other extrapolates the rate from models based on distant radiation left over from the birth of our universe, called the cosmic microwave background. The problem is that the numbers do not match.
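As a rough illustration of the first, local approach, the expansion rate can be read off as the slope of the relation between recession velocity and distance (velocity = Hubble's constant × distance). The sketch below fits that slope by least squares. The function name and the data points are invented for illustration and are not measurements from the study; real determinations require careful distance calibration that is not shown here.

```python
def fit_hubble_constant(distances_mpc, velocities_km_s):
    """Least-squares slope through the origin for v = H0 * d.

    Returns H0 in km/s/Mpc. Hypothetical helper, not from the study.
    """
    numerator = sum(d * v for d, v in zip(distances_mpc, velocities_km_s))
    denominator = sum(d * d for d in distances_mpc)
    return numerator / denominator


# Made-up, noise-free example data (distance in Mpc, velocity in km/s).
distances = [10.0, 50.0, 100.0, 200.0]
velocities = [700.0, 3500.0, 7000.0, 14000.0]

h0 = fit_hubble_constant(distances, velocities)
print(f"Fitted expansion rate: {h0:.1f} km/s/Mpc")
```

The second approach does not fit a slope like this; it infers the rate from a cosmological model constrained by the cosmic microwave background, which is why the two results can disagree.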

"There are potentially systematic errors in the measurements, but that is looking less and less likely," says Stern. "More enticingly, the discrepancy in the values could mean that something about our model of the universe is wrong and there is new physics to discover."

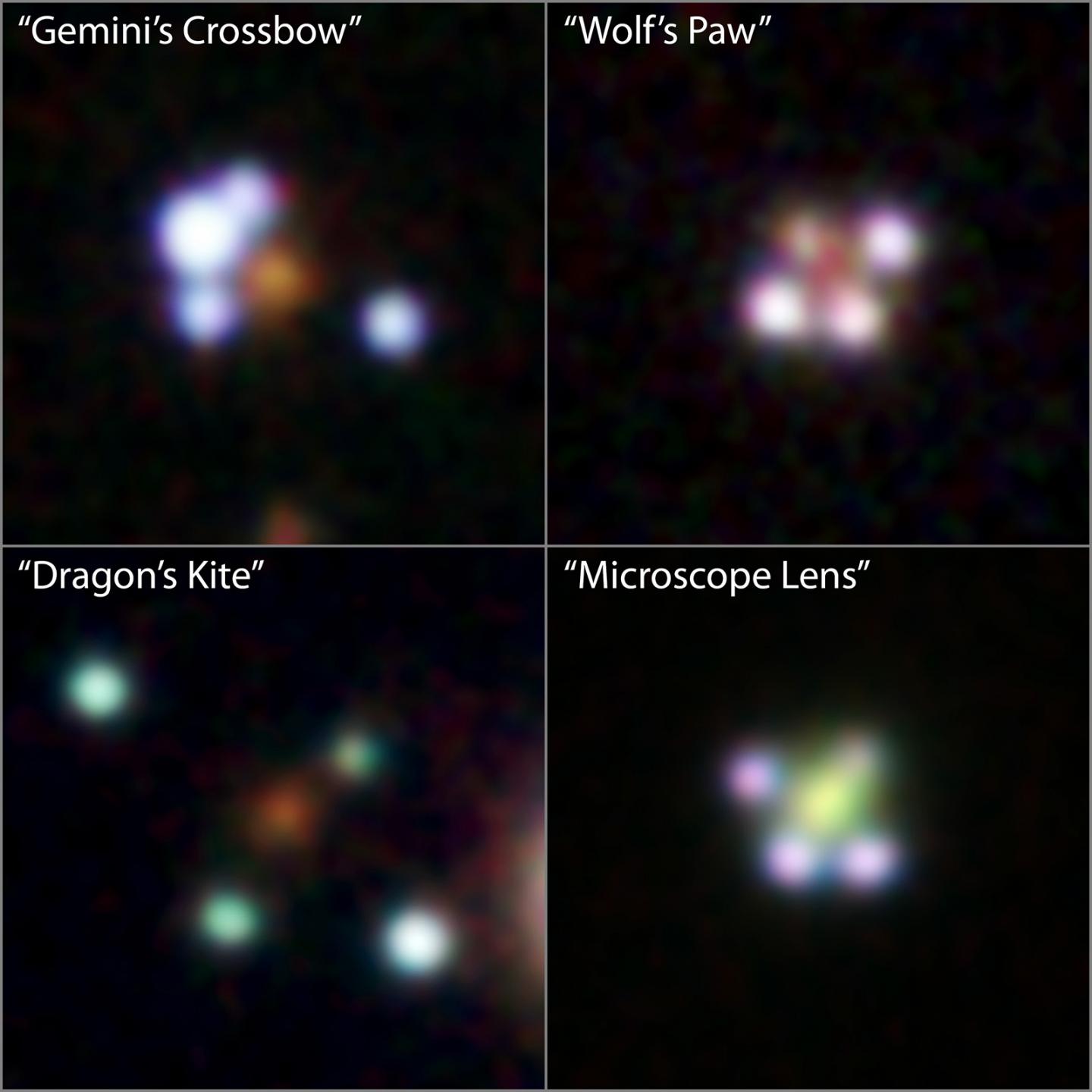

The new quasar quads, which the team gave nicknames such as Wolf's Paw and Dragon Kite, will help in future calculations of Hubble's constant and may illuminate why the two primary measurements are not in alignment. The quasars lie in between the local and distant targets used for the previous calculations, so they give astronomers a way to probe the intermediate range of the universe. A quasar-based determination of Hubble's constant could indicate which of the two values is correct, or, perhaps more interestingly, could show that the constant lies somewhere between the locally determined and distant values, a possible sign of previously unknown physics.

Gravitational Illusions

The multiplication of quasar images and other objects in the cosmos occurs when the gravity of a foreground object, such as a galaxy, bends and magnifies the light of objects behind it. The phenomenon, called gravitational lensing, has been seen many times before. Sometimes quasars are lensed into two similar images; less commonly, they are lensed into four.

"Quads are better than the doubly imaged quasars for cosmology studies, such as measuring the distance to objects, because they can be exquisitely well modeled," says co-author George Djorgovski, professor of astronomy and data science at Caltech. "They are relatively clean laboratories for making these cosmological measurements."

In the new study, the researchers used data from WISE, which has relatively coarse resolution, to find likely quasars, and then used the sharp resolution of Gaia to identify which of the WISE quasars were associated with possible quadruply imaged quasars. The researchers then applied machine-learning tools to pick out which candidates were most likely multiply imaged sources and not just different stars sitting close to each other in the sky. Follow-up observations by Keck, Palomar, the New Technology Telescope, and Gemini South confirmed which of the objects were indeed quadruply imaged quasars lying billions of light-years away.
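The first two steps of that search can be sketched as a simple positional cross-match: for each coarse-resolution quasar candidate, count how many sharp-resolution detections fall within a small radius. This is only a minimal illustration, not the team's pipeline. The function names, the 6-arcsecond radius, the three-source threshold, and the coordinates are all assumptions made for the example, and the machine-learning classification step is not shown.

```python
import math


def separation_arcsec(ra1, dec1, ra2, dec2):
    """Approximate small-angle separation in arcseconds (inputs in degrees).

    Flat-sky approximation; does not handle RA wrap-around at 0/360 degrees.
    """
    dra = (ra1 - ra2) * math.cos(math.radians(0.5 * (dec1 + dec2)))
    ddec = dec1 - dec2
    return math.hypot(dra, ddec) * 3600.0


def find_multiplet_candidates(coarse, sharp, radius_arcsec=6.0, min_sources=3):
    """Return (name, count) for coarse candidates with several sharp detections.

    coarse: list of (name, ra_deg, dec_deg) from the coarse survey.
    sharp:  list of (ra_deg, dec_deg) from the sharp survey.
    radius_arcsec and min_sources are hypothetical thresholds.
    """
    candidates = []
    for name, ra, dec in coarse:
        nearby = [
            s for s in sharp
            if separation_arcsec(ra, dec, s[0], s[1]) <= radius_arcsec
        ]
        if len(nearby) >= min_sources:
            candidates.append((name, len(nearby)))
    return candidates


# Invented coordinates: candidate "A" has four sharp detections about one
# arcsecond away, while candidate "B" has only one.
coarse = [("A", 150.0, 2.0), ("B", 200.0, -10.0)]
sharp = [
    (150.0002, 2.0002), (149.9998, 2.0002),
    (150.0002, 1.9998), (149.9998, 1.9998),
    (200.0001, -10.0),
]

print(find_multiplet_candidates(coarse, sharp))
```

A count alone cannot distinguish a lensed quasar from unrelated stars that happen to sit close together, which is the ambiguity the machine-learning step and the follow-up observations were used to resolve.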

Humans and Machines Working Together

The first quad found with the help of machine learning, nicknamed Centaurus' Victory, was confirmed during an all-nighter the team spent at Caltech, with collaborators from Belgium, France, and Germany, while using a dedicated supercomputer in Brazil, recalls co-author Alberto Krone-Martins of UC Irvine. The team had been remotely observing their objects using the Keck Observatory.

"Machine learning was key to our study but it is not meant to replace human decisions," explains Krone-Martins. "We continuously train and update the models in an ongoing learning loop, such that humans and the human expertise are an essential part of the loop. When we talk about 'AI' in reference to machine-learning tools like these, it stands for Augmented Intelligence, not Artificial Intelligence."

"Alberto not only initially came up with the clever machine-learning algorithms for this project, but it was his idea to use the Gaia data, something that had not been done before for this type of project," says Djorgovski.

"This story is not just about finding interesting gravitational lenses," he says, "but also about how a combination of big data and machine learning can lead to new discoveries."
