A new approach to information technology: The use of spin waves as data storage may one day allow supercomputers to compute more efficiently and reliably. This is the conclusion reached by a team of researchers from Martin Luther University Halle-Wittenberg (MLU) and Lanzhou University in China, based on simulations of spin waves in structured materials. The study was published in the academic journal "npj Computational Materials".

Logic operations, including addition, multiplication, and subtraction, form the basis of all computing applications in devices such as smartphones and computers. "Without these operations, we would be unable to make use of the stored data," explains Professor Jamal Berakdar from the Institute of Physics at MLU. Until now, logic operations have been carried out with the help of charge currents. However, this technology has some disadvantages: "Moving charges react sensitively to external electric or magnetic fields. There is also an increase in energy dissipation - the smaller the devices become, the more the material heats up," says Berakdar. Researchers have therefore been looking for alternative approaches. One of them, magnonics, attempts to make use of so-called magnons. Magnons are waves that can be generated in magnetic materials and are therefore also called spin waves. They are created by the oscillation of the magnetization and can be used as signals in data processing. Unlike logic operations driven by charge currents, magnon-based information processing does not require electrons to travel, so less energy is consumed.

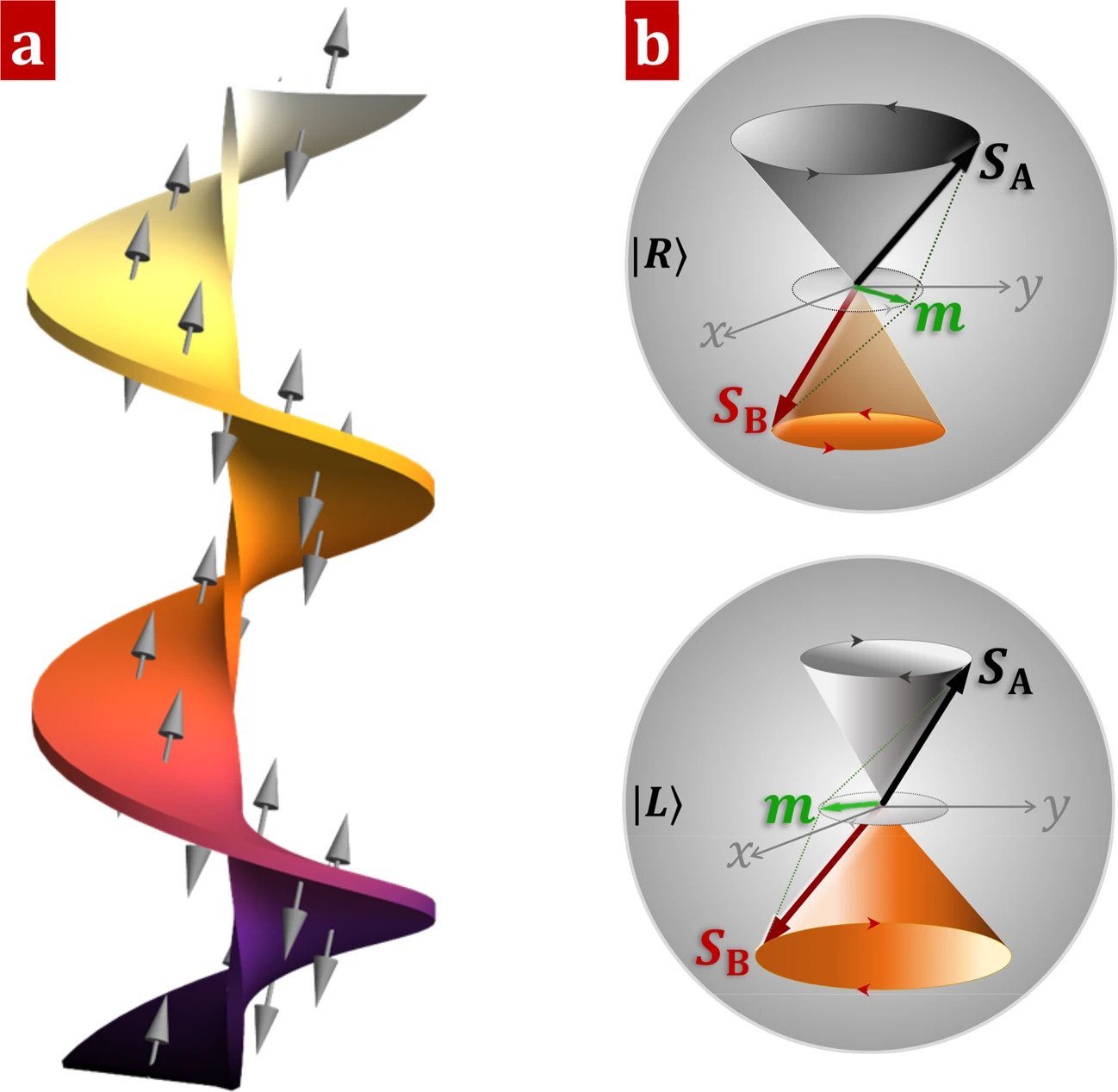

"Until now, research on magnons and spintronics has generally focused on conventional ferromagnets," says Berakdar. However, the team from Germany and China found that nanostructured antiferromagnetic wires are particularly well suited for logic operations. "Antiferromagnets differ fundamentally from ferromagnets. At the microscopic level, they are magnetically ordered, but in such a way that the material as a whole has no magnetization. Therefore, antiferromagnets respond only weakly to electric or magnetic fields," explains Berakdar. The physicists' simulations show that nanostructured antiferromagnetic wires host special magnons that can be used to perform logic operations quickly, reliably, and simultaneously without consuming a lot of energy.
