Limit global warming to below 1.5°C to keep sea surface temperatures beyond past records from becoming the new normal

In the past decade, the marginal seas of Japan have frequently experienced extremely high sea surface temperatures (SSTs). A new study led by researchers at the National Institute for Environmental Studies (NIES) has revealed that the increased frequency of extreme ocean warming events since the 2000s is attributable to global warming caused by industrialization.

In August 2020, the southern area of Japan and the northwestern Pacific Ocean experienced unprecedentedly high SSTs, according to the Japan Meteorological Agency (JMA). A study published in January 2021 found that the record-high northwestern Pacific SST observed in August 2020 would not have been expected to occur without human-induced climate change. Since then, the JMA has again reported record-high SSTs near Japan in July and October 2021 and from June to August 2022, but it has remained unclear to what extent climate change has altered the likelihood of these regional extreme warming events.

“The impacts of global warming are not uniform; rather, they show regional and seasonal differences,” said co-author Hideo Shiogama, the head of the Earth System Risk Assessment Section at Earth System Division, NIES. “A comprehensive analysis of regional SSTs over a long period may provide a quantitative understanding of how much ocean conditions near Japan have been and will be affected by global warming. This will better inform policymakers as they plan climate change mitigation and adaptation strategies.”

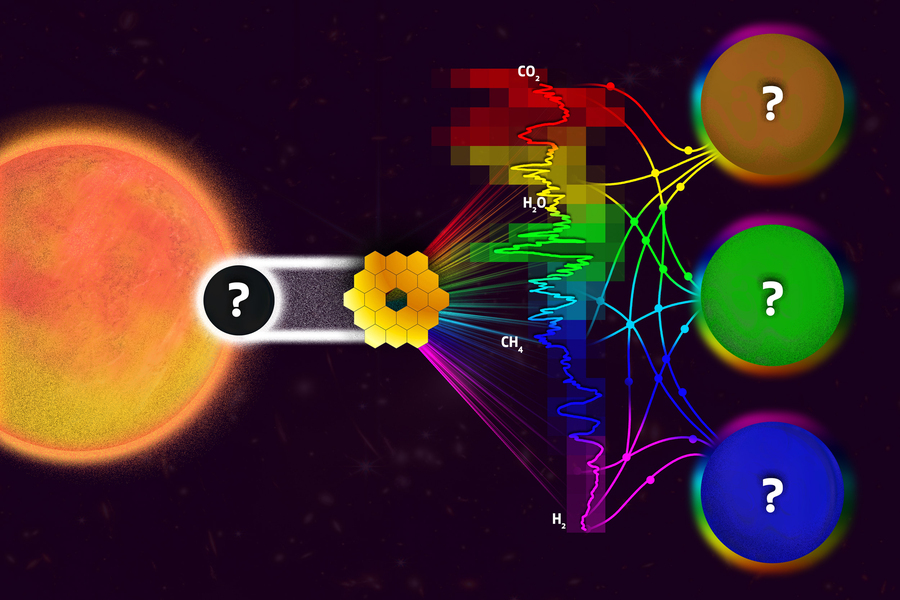

The paper, published today in Geophysical Research Letters, quantifies the contribution of global warming to individual monthly extreme ocean warming events in Japan’s marginal seas: events that would have occurred less than once every 20 years in the preindustrial era. A climate research group at NIES focused on ten monitoring areas used operationally by the JMA, including the Japan Sea, East China Sea, Okinawa Islands, east of Taiwan, and the Pacific coasts of Japan. The scientists confirmed that observed SST changes from 1982 to 2021 were well reproduced by 24 climate models participating in the sixth phase of the Coupled Model Intercomparison Project (CMIP6), except in the region east of Hokkaido. They then identified extreme ocean warming events in nine monitoring areas and quantified the contribution of climate change to each.
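
The event criterion, and the "doubled" and "tenfold" figures reported below, can be made concrete with the probability ratio commonly used in event attribution. The notation here is illustrative and not taken from the paper; we assume the article's "occurrence probability" corresponds to the per-year chance that a given calendar month in a given monitoring area is at least as warm as the observed value:

```latex
% x_obs: observed monthly SST in one area and calendar month (illustrative notation)
P_0 = \Pr\left(X_{\mathrm{pre}} \ge x_{\mathrm{obs}}\right) < \tfrac{1}{20}, \qquad
P_1 = \Pr\left(X_{\mathrm{now}} \ge x_{\mathrm{obs}}\right), \qquad
\mathrm{PR} = \frac{P_1}{P_0}, \qquad T = \frac{1}{P}

% Worked example for an event at the 1-in-20-year boundary (P_0 = 1/20, T_0 = 20 years):
\mathrm{PR} = 2 \;\Rightarrow\; P_1 = \tfrac{1}{10},\; T_1 = 10 \text{ years}; \qquad
\mathrm{PR} = 10 \;\Rightarrow\; P_1 = \tfrac{1}{2},\; T_1 = 2 \text{ years}
```

In words, a doubling halves the expected waiting time between such events, and a tenfold increase turns a once-in-20-years month into one expected roughly every other year.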

Extreme ocean warming and climate change

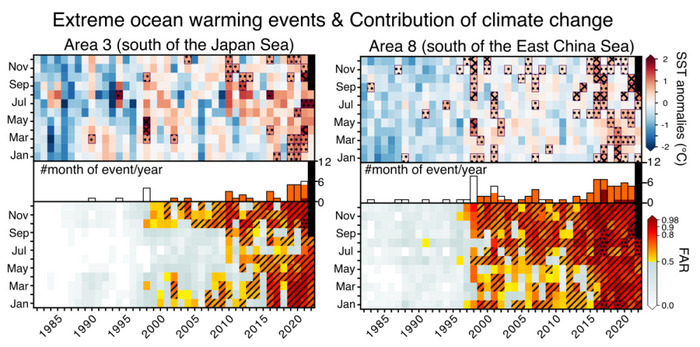

“In the present climate, every extreme ocean warming event is linked to global warming,” said corresponding lead author Michiya Hayashi, a research associate at NIES. Using the CMIP6 climate models, the scientists estimated the occurrence frequency of each event from January 1982 through July 2022 under present and preindustrial climate conditions. “We found that the occurrence probability of almost all the extreme ocean warming events has at least doubled relative to the preindustrial era since the 2000s. In a sizeable number of cases since the mid-2010s, it has increased more than tenfold, especially in southern Japan.”
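
As a rough illustration of how such frequencies could be estimated, the sketch below computes empirical exceedance probabilities and their ratio from two sets of simulated monthly SSTs, one for each climate condition. It assumes each condition is represented by a plain list of samples for a single area and calendar month; the function names and the synthetic Gaussian data in the demo are hypothetical, and the study's actual statistical procedure (for example, how it combines the 24 CMIP6 models) is not reproduced here.

```python
import random
from typing import Sequence


def exceedance_probability(samples: Sequence[float], threshold: float) -> float:
    """Empirical probability that a sample is at or above `threshold`."""
    if not samples:
        raise ValueError("samples must be non-empty")
    return sum(1 for x in samples if x >= threshold) / len(samples)


def probability_ratio(preindustrial: Sequence[float],
                      present: Sequence[float],
                      observed: float) -> float:
    """PR = P1 / P0: how many times more likely an SST at least as high as
    `observed` is in the present-climate samples than in the preindustrial ones."""
    p0 = exceedance_probability(preindustrial, observed)
    p1 = exceedance_probability(present, observed)
    if p0 == 0.0:
        # The event never occurs in the preindustrial sample, so the ratio is unbounded.
        return float("inf")
    return p1 / p0


def return_period(p: float) -> float:
    """Expected waiting time (in years) for an event with per-year probability p."""
    return float("inf") if p == 0.0 else 1.0 / p


if __name__ == "__main__":
    rng = random.Random(0)
    # Hypothetical standardized SST anomalies: the present climate is shifted
    # one standard deviation warmer than the preindustrial one.
    pre = [rng.gauss(0.0, 1.0) for _ in range(100_000)]
    now = [rng.gauss(1.0, 1.0) for _ in range(100_000)]
    observed = 1.7  # slightly rarer than a 1-in-20-year value in the preindustrial sample
    p0 = exceedance_probability(pre, observed)
    p1 = exceedance_probability(now, observed)
    print(f"P0={p0:.3f} (about 1 in {return_period(p0):.0f} years)")
    print(f"P1={p1:.3f} (about 1 in {return_period(p1):.0f} years)")
    print(f"PR={probability_ratio(pre, now, observed):.1f}")
```

With these made-up distributions the estimated ratio should land near the analytic value of about 5.4 (P0 ≈ 0.045, P1 ≈ 0.24); real attribution studies typically also quantify the sampling uncertainty of such ratios.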

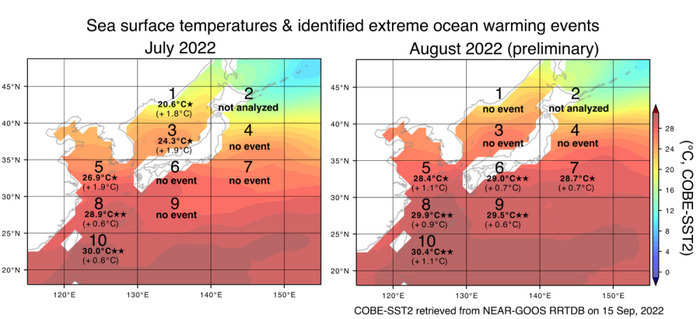

For instance, in July 2022, anomalously high SSTs observed in five monitoring areas, including the Japan Sea (Areas 1, 3), East China Sea (Areas 5, 8), and south of Okinawa near Taiwan (Area 10), were identified as extreme ocean warming events. Updated results based on preliminary data retrieved from the NEAR-GOOS RRTDB website on 15 September 2022 (not included in the published paper) show that, in August 2022, events were also identified in six monitoring areas south of 35°N: the East China Sea (Areas 5, 8), south and east of Okinawa (Areas 10, 9), southeastern Kanto (Area 7), and the seas off Shikoku and Tokai (Area 6). “We estimate that, for all of the events identified in July and August 2022, climate change has at least doubled the occurrence frequency, and has increased it more than tenfold for those south of 35°N, except in the northern East China Sea,” stated Hayashi.

“Climate change impacts on extreme ocean warming events in northern Japan began to emerge relatively late compared to southern Japan,” noted Shiogama. Global aerosol emissions, which increased until the 1980s, tend to cool the Earth’s surface, and this cooling is more substantial in the North Pacific, especially near northern Japan, through changes in the large-scale atmospheric circulation. In addition, the year-to-year natural variability of SST is large in northern Japan, so the global warming signal was less detectable there than in southern Japan. As global aerosol emissions have been reduced in recent decades, their cooling effect has become less dominant relative to human-induced greenhouse gas warming. “Our study indicates,” continued Shiogama, “that the contribution of climate change to SST extremes is already discernible beyond natural variability, even in northern Japan, under present climate conditions.”

What about the ocean conditions expected in the future? The researchers further compared the probabilities of exceeding the monthly record-high SSTs around Japan at global warming levels from 0°C to 2°C, using outputs from the 24 CMIP6 climate models for 1901 to 2100. “Once global warming reaches 2°C, all nine monitoring areas are expected to experience SSTs warmer than their past highest levels at least every two years,” said Tomoo Ogura, a co-author and the head of the Climate Modeling and Analysis Section at Earth System Division, NIES. He added, “Limiting global warming to below 1.5°C is necessary to keep record-warm conditions in Japan's marginal seas from becoming the new normal.”

The quantitative analysis of SSTs around Japan implies that climate change has already become the major factor for most of the record-high SSTs in recent years. “In the future, dynamics of each extreme warming event need to be examined by taking the long-term climate change and year-to-year natural variability into account,” noted Hayashi. “Nevertheless, we expect that our statistical results based on the latest climate models will help to implement adaptation and mitigation measures for climate change.”

How to resolve AdBlock issue?

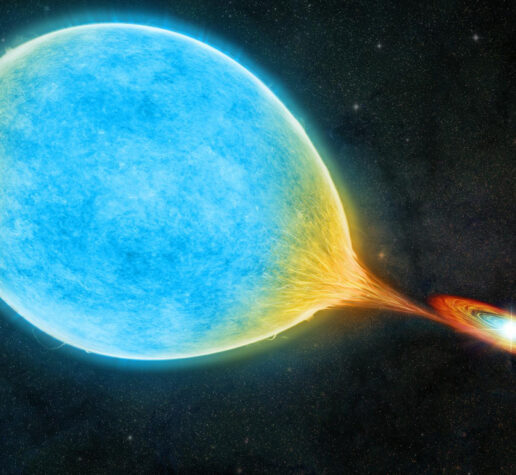

How to resolve AdBlock issue?  The stars circle each other every 51 minutes, confirming a decades-old prediction.

The stars circle each other every 51 minutes, confirming a decades-old prediction.