For decades now, the world has become increasingly reliant on computers and sensors to do just about everything, and the technologies themselves are getting smaller, faster, and more efficient. Take your smartphone as an example: a pocket-sized piece of mostly aluminum, iron, and lithium that is millions of times more powerful than the computers that guided the Apollo 11 moon landing in 1969.

Advancements in quantum technologies, which harness the properties of quantum physics, promise to go a step further and revolutionize virtually all of industry and daily life. The result could be more powerful and energy-efficient devices. But getting there requires that physicists get creative about how they exploit the weird ways atoms interact with each other.

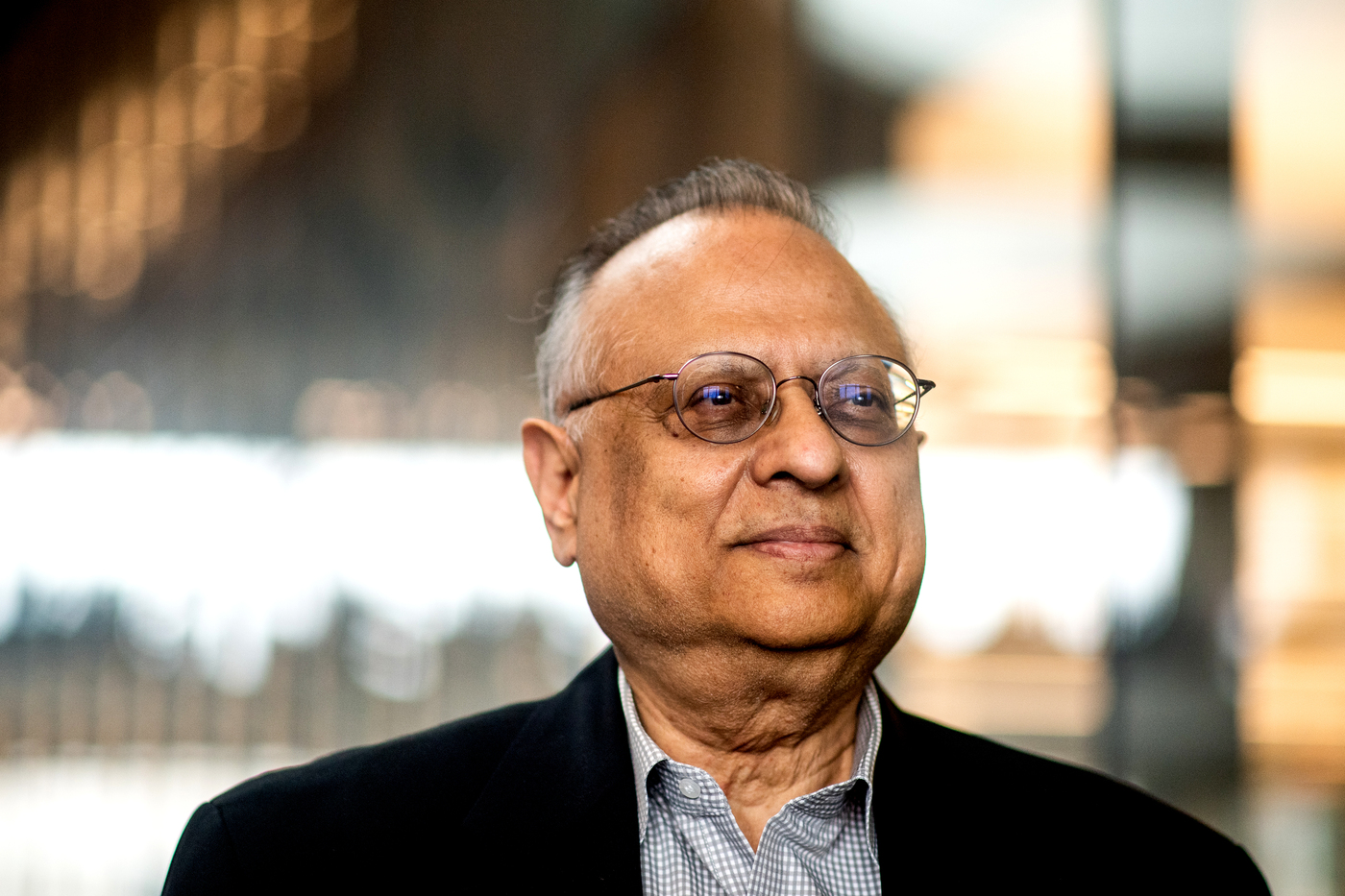

It turns out that atomic defects in certain solid crystals may be key to unleashing the potential of the quantum revolution, according to discoveries by Northeastern researchers. The defects are essentially irregularities in the way that atoms are arranged to form crystalline structures. Those irregularities could provide the physical conditions to host something called a quantum bit, or qubit for short—a foundational building block for quantum technologies, says Arun Bansil, university distinguished professor in the Department of Physics at Northeastern.

Qubits are fundamentally different from classical computer bits, which are the most basic units of information in computing. But because both are built from incredibly small physical components, they are subject to the forces operating in the enigmatic and elusive world of the nanoscale.

Bansil and colleagues found that defects in a certain class of materials, specifically two-dimensional transition metal dichalcogenides, contained the atomic properties conducive to making qubits. Bansil says the findings amount to something of a breakthrough, particularly in quantum sensing, and may help accelerate the pace of technological change.

“If we can learn how to create qubits in this two-dimensional matrix, that is a big, big deal,” Bansil says.

Transition metal dichalcogenides have a diverse range of quantum properties, making them especially attractive for scientific investigation, Bansil says. Researchers in the field have said that the unique materials have “virtually unlimited potential in various fields, including electronic, optoelectronic, sensing, and energy storage applications.”

Using advanced computations, Bansil and his colleagues sifted through hundreds of different material combinations to find those capable of hosting a qubit.

“When we looked at a lot of these materials, in the end, we found only a handful of viable defects—about a dozen or so,” Bansil says. “Both the material and type of defect are important here because in principle there are many types of defects that can be created in any material.”

The key finding of the study is that the so-called “antisite” defect in films of the two-dimensional transition metal dichalcogenides carries something called “spin” with it. Spin, a form of intrinsic angular momentum, is a fundamental property of electrons that takes one of two states when measured: up or down, Bansil says.

To get a better sense of what a qubit is and how it can be applied to future computers and sensors, it’s important to understand how data is processed in existing “classical” computers. Classical computers use bits to perform computations. When you’re doing almost anything on a computer, you’re sending it a set of instructions that engages a central processing unit, or CPU. The CPU is made of circuitry that uses electrical signals to direct the whole computer to carry out program instructions that are stored in the system’s memory.

These signals communicate using information that’s encoded, or packaged, into bits. The information is represented numerically in one of two values: 0 or 1, which describe the states of various circuits as being either on or off. All modern electronic devices operate through circuit components that send and receive information by essentially manipulating these 0s and 1s, Bansil says.

Qubits behave quite differently from classical bits, thanks to counterintuitive quantum mechanical properties. What makes a qubit different is that its value is not fixed: it can, and here’s where things get weird, be in a combination of 0 and 1 at the same time. This is because of something called superposition, a core principle of quantum mechanics stating that a quantum system can exist in multiple states at once until it is measured.

Rather than relying on definite 0s and 1s, quantum information systems can use the probability that a qubit will be in one state or the other when measured, or observed, to make calculations.
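For readers who like to see the idea in code, here is a minimal Python sketch of what "probability when measured" means. It is an ordinary classical simulation, not how the researchers model their defects, and the names `measure` and `estimate_p1` are made up for illustration. It assumes a single qubit described by two amplitudes, `alpha` for state 0 and `beta` for state 1, whose squared magnitudes give the measurement probabilities.

```python
import math
import random


def measure(alpha, beta, rng):
    """Simulate one measurement of a qubit with amplitudes alpha (for 0) and beta (for 1).

    Hypothetical helper for illustration: the squared magnitude of each
    amplitude is taken as the probability of observing that outcome.
    """
    p0 = abs(alpha) ** 2
    p1 = abs(beta) ** 2
    if not math.isclose(p0 + p1, 1.0, rel_tol=1e-9):
        raise ValueError("amplitudes must be normalized so probabilities sum to 1")
    # Each measurement returns a single definite value, 0 or 1.
    return 0 if rng.random() < p0 else 1


def estimate_p1(alpha, beta, shots=10_000, seed=0):
    """Repeat the simulated measurement and return the fraction of 1s observed."""
    rng = random.Random(seed)
    ones = sum(measure(alpha, beta, rng) for _ in range(shots))
    return ones / shots


# An equal superposition: 0 and 1 are equally likely when measured.
alpha = beta = 1 / math.sqrt(2)
frequency = estimate_p1(alpha, beta)
print(f"Fraction of measurements that gave 1: {frequency:.3f}")
```

Any single measurement yields a plain 0 or 1. The superposition only shows up in the statistics gathered over many repeated measurements.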

“What is unique about a quantum bit is that it can essentially code two different states at the same time,” Bansil says. “You are in a position to actually in principle store a very large number of possibilities in a very small number of qubits simultaneously.”
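To put rough numbers on that claim, the short Python sketch below counts how many basis states a register of qubits spans. It relies on the standard textbook fact that n qubits are described by 2^n amplitudes. The function names are hypothetical, and the equal-weight state is just one convenient example, not something taken from the study.

```python
def num_basis_states(n_qubits):
    """Number of distinct 0/1 patterns (basis states) that n qubits can represent."""
    if n_qubits < 0:
        raise ValueError("n_qubits must be non-negative")
    return 2 ** n_qubits


def uniform_superposition(n_qubits):
    """Return the amplitudes of an equal-weight superposition over all basis states."""
    dim = num_basis_states(n_qubits)
    amplitude = dim ** -0.5  # chosen so the squared amplitudes sum to 1
    return [amplitude] * dim


for n in (1, 2, 10, 20):
    print(f"{n} qubits span {num_basis_states(n)} basis states")
```

Ten qubits already span 1,024 possibilities and twenty span more than a million, which is the sense in which a small number of qubits can hold a very large number of possibilities at once. Reading out the register still returns only one of them per measurement.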

The challenge for researchers has been how to find qubits that are stable enough to use, given the difficulties in finding the precise atomic conditions under which they can be materially realized.

“The current qubits available—especially those involved in quantum computing—all operate at very low temperatures, making them incredibly fragile,” Bansil says. That’s why the discovery of transition metal dichalcogenides’ defects holds such promise, he adds.
