The new, comprehensive analysis presents a more nuanced view of global data center energy use

If the world is using more and more data, then it must be using more and more energy, right? Not so, says a comprehensive new analysis.

Researchers at Northwestern University, Lawrence Berkeley National Laboratory, and Koomey Analytics have developed the most detailed model to date of global data center energy use. With this model, the researchers found that, although demand for data has increased rapidly, massive efficiency gains by data centers have kept energy use roughly flat over the past decade.

This detailed, comprehensive model provides a more nuanced view of data center energy use and its drivers, enabling the researchers to make strategic policy recommendations for better managing this energy use in the future.

"While the historical efficiency progress made by data centers is remarkable, our findings do not mean that the IT industry and policymakers can rest on their laurels," said Eric Masanet, who led the study. "We think there is enough remaining efficiency potential to last several more years. But ever-growing demand for data means that everyone -- including policymakers, data center operators, equipment manufacturers, and data consumers -- must intensify efforts to avoid a possible sharp rise in energy use later this decade."

The paper will be published on Feb. 28 in the journal Science.

Masanet is an adjunct professor at Northwestern's McCormick School of Engineering and the Mellichamp Chair in Sustainability Science for Emerging Technologies at the University of California, Santa Barbara. He conducted the research with co-author Nuoa Lei, a Ph.D. student at Northwestern.

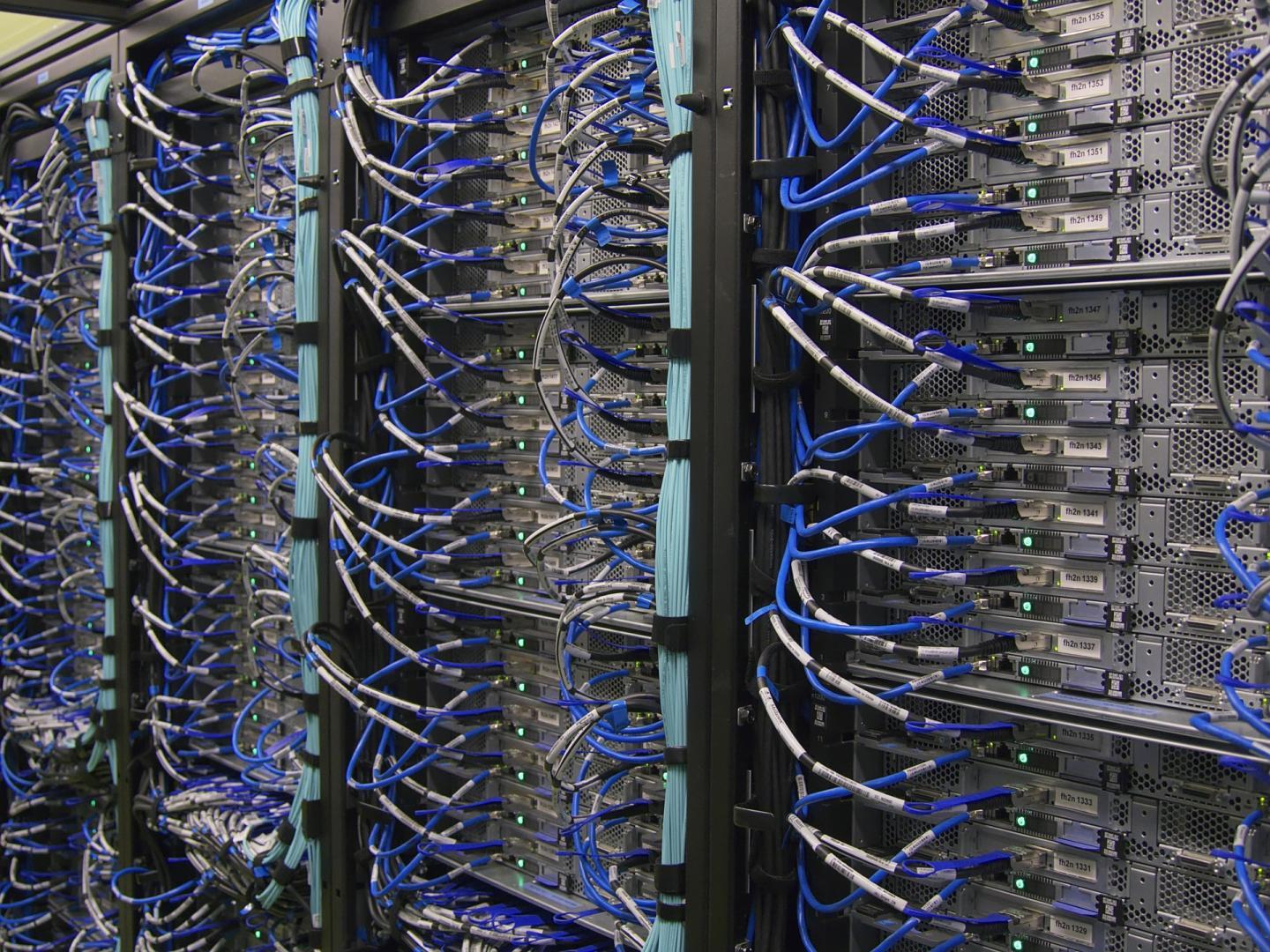

Filled with computing and networking equipment, data centers are central locations that collect, store and process data. As the world relies more and more on data-intensive technologies, the energy use of data centers is a growing concern.

"Considering that data centers are energy-intensive enterprises in a rapidly evolving industry, we do need to analyze them rigorously," said study co-author Arman Shehabi, a research scientist at Lawrence Berkeley National Laboratory. "Less detailed analyses have predicted rapid growth in data center energy use, but without fully considering the historical efficiency progress made by the industry. When we include that missing piece, a different picture of our digital lifestyles emerges."

To paint that more complete picture, the researchers integrated new data from numerous sources, including information on data center equipment stocks, efficiency trends, and market structure. The resulting model enables a detailed analysis of energy use by type of data center equipment (such as servers, storage devices, and cooling systems), by type of data center, including supercomputing centers, and by world region.

The researchers concluded that recent efficiency gains made by data centers have likely been far greater than those observed in other major sectors of the global economy.

"Lack of data has hampered our understanding of global data center energy use trends for many years," said co-author Jonathan Koomey of Koomey Analytics. "Such knowledge gaps make business and policy planning incredibly difficult."

Addressing these knowledge gaps was a major motivation for the research team's work. "We wanted to give the data center industry, policymakers, and the public a more accurate view of data center energy use," said Masanet. "But the reality is that more efforts are needed to better monitor energy use moving forward, which is why we have made our model and datasets publicly available."

By releasing the model, the team hopes to inspire more research into the topic. The researchers also translated their findings into three specific types of policies that can help mitigate future growth in energy use, urging policymakers to act now:

- Extend the life of current efficiency trends by strengthening IT energy standards such as ENERGY STAR, providing financial incentives, and disseminating best energy efficiency practices;

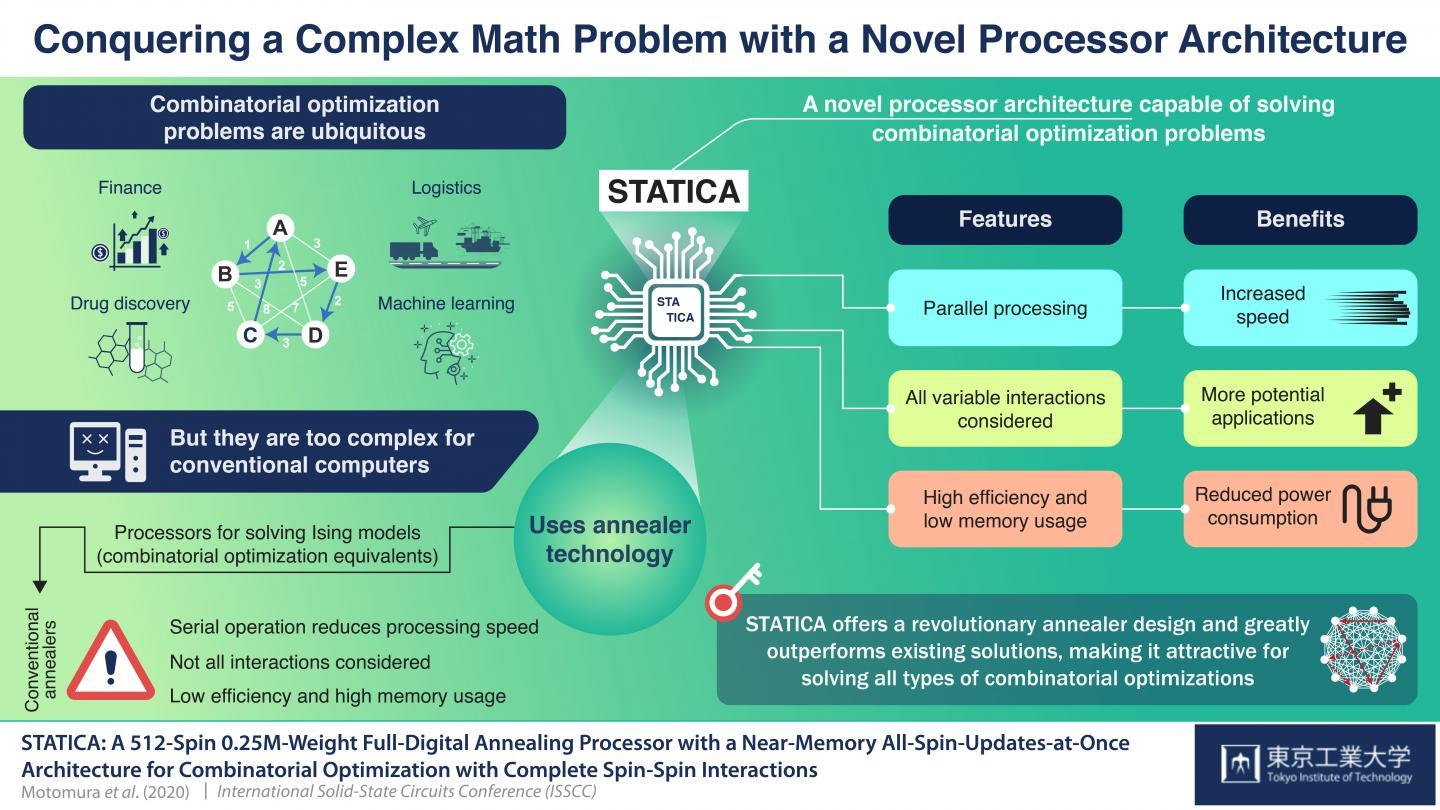

- Increase research and development investments in next-generation supercomputing, storage, and heat removal technologies to mitigate future energy use, while incentivizing renewable energy procurement to mitigate carbon emissions in parallel;

- Invest in data collection, modeling, and monitoring activities to eliminate blind spots and enable more robust data center energy policy decisions.
