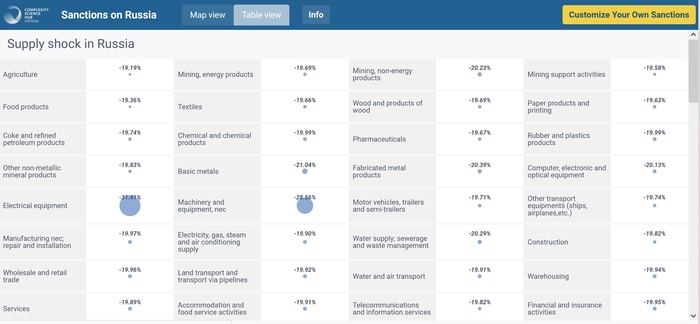

Super-resolution technology is a new supercomputing method used to enhance older meteorological model data so that scientists can better assess Earth's global climate history. Much like upscaling digital photos and videos, super-resolution calculations are an important tool for producing high-resolution historical model assimilation data, according to Dr. Chunxiang Shi, Chief Scientist at the National Meteorological Information Center of the China Meteorological Administration.

"Due to the sparse historical observation data, the China Meteorological Administration land data assimilation system (CLDAS) cannot generate high-quality and high-resolution data," said Dr. Shi. "At the beginning of last year, I learned that super-resolution technology can be used to complete high-resolution reconstruction of videos and pictures. We can also integrate this technology into reconstructing high-resolution historical assimilation data."

Dr. Shi and her team at the National Meteorological Information Center of the China Meteorological Administration (CMA) are also known for CMA’s Land Data Assimilation System (CLDAS) and China's 40-year global atmospheric/land surface reanalysis dataset (CRA-40). They recently published their super-resolution downscaling research, based on CLDAS data, in Advances in Atmospheric Sciences.

Specifically, the team built a deep learning downscaling model called CLDASSD (CLDAS Statistical Downscaling). The researchers tested it on 2m temperature model data for the Beijing-Tianjin-Hebei region, converting large-scale (low-resolution) model output into local-scale (high-resolution) fields that can improve local forecasts. The method successfully reconstructed fine textures in complex mountain areas, where human observation may be impossible. When compared against observational data, CLDASSD had a smaller root mean square error than general interpolation-based downscaling methods across different times of day, seasons, and terrain.
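To make the comparison concrete, the sketch below shows the kind of interpolation-based baseline the article refers to, together with the root mean square error (RMSE) used to score it against observations. It is not the CLDASSD model or the team's code: the function names, the tiny temperature grid, and the station values are all hypothetical, chosen only to illustrate the idea.

```python
import math

def bilinear_downscale(coarse, factor):
    """Refine a 2D grid (list of rows, at least 2x2) by an integer factor
    using bilinear interpolation between the coarse grid points."""
    rows, cols = len(coarse), len(coarse[0])
    out_rows = (rows - 1) * factor + 1
    out_cols = (cols - 1) * factor + 1
    fine = []
    for i in range(out_rows):
        y = i / factor
        y0 = min(int(y), rows - 2)   # upper coarse row of the enclosing cell
        ty = y - y0                  # fractional position within the cell
        row = []
        for j in range(out_cols):
            x = j / factor
            x0 = min(int(x), cols - 2)
            tx = x - x0
            top = (1 - tx) * coarse[y0][x0] + tx * coarse[y0][x0 + 1]
            bottom = (1 - tx) * coarse[y0 + 1][x0] + tx * coarse[y0 + 1][x0 + 1]
            row.append((1 - ty) * top + ty * bottom)
        fine.append(row)
    return fine

def rmse(predicted, observed):
    """Root mean square error between two equal-length sequences."""
    squared = [(p - o) ** 2 for p, o in zip(predicted, observed)]
    return math.sqrt(sum(squared) / len(squared))

# Hypothetical coarse 2m temperature field (degrees Celsius), not real CLDAS data.
coarse_t2m = [[10.0, 12.0],
              [14.0, 16.0]]
fine_t2m = bilinear_downscale(coarse_t2m, factor=2)

# Hypothetical station observations keyed by fine-grid (row, column) index.
stations = {(0, 1): 11.4, (1, 1): 12.5, (2, 0): 14.2}
predicted = [fine_t2m[i][j] for (i, j) in stations]
observed = list(stations.values())
print(f"Interpolation baseline RMSE: {rmse(predicted, observed):.3f}")
```

A learned downscaling model would replace `bilinear_downscale` while the scoring step stays the same, which is how two methods can be compared on equal terms against the same station observations.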

"Natural images and meteorological data have similarities in some respects, some computer vision techniques (Super-resolution, semantic segmentation, etc.) may be applied in the atmosphere," said Dr. Shi. "In the future, we will learn from even better super-resolution technologies to upgrade our model and carry out more experiments using soil moisture, 10m wind, precipitation, etc. elements throughout China to fill the gap in CLDAS."


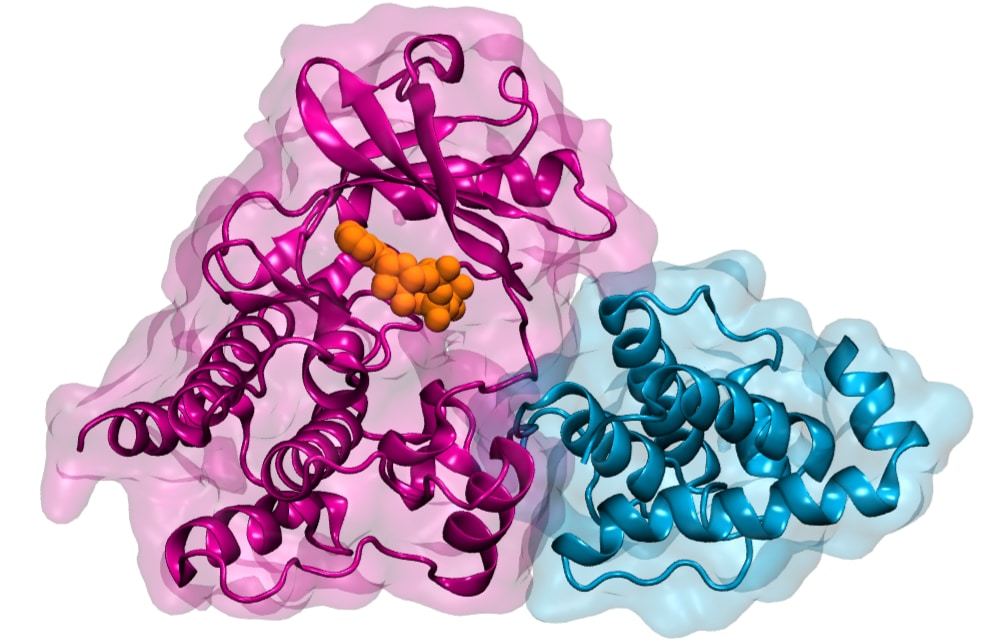

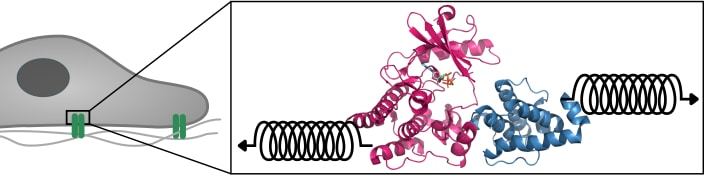

In a further step, they verified the predictions from the simulations and moved beyond their time- and length scales to study the large-scale cellular effects of retained ATP-binding to ILK, for which they joined forces with colleagues in Finland.
