The vastness and mysteries of the Universe have always intrigued humanity. One of the most fascinating aspects is the expansion of the Universe, which causes galaxies to move away from each other. This phenomenon is famously associated with the US astronomer Edwin Hubble. However, recent research offers a new perspective on this expansion. Researchers from the Helmholtz Institute of Radiation and Nuclear Physics at the University of Bonn in Germany, working with scientists from the University of St Andrews, argue that a modified theory of gravity known as Modified Newtonian Dynamics (MOND) could explain the so-called Hubble tension: the disagreement between different measurements of how fast the Universe is expanding. In this article, we explain the Hubble tension, explore the standard model of cosmology, and examine the potential implications of MOND.

Understanding the Hubble Tension

To understand the Hubble tension, we must first understand how the expansion of the Universe relates to the motion of galaxies. As the Universe expands, galaxies move away from each other, and the speed at which they recede is proportional to the distance between them. This relationship, described by Edwin Hubble, is known as Hubble's law. The recession speed of a galaxy therefore equals its distance multiplied by a constant known as the Hubble-Lemaitre constant. This constant is a fundamental parameter in cosmology because it sets the rate at which the Universe expands.
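As a quick illustration of Hubble's law, the Python sketch below simply multiplies a distance by the constant. It assumes the approximate value of 244,000 km/h per megaparsec that this article gives for observations of distant regions; the distances and the function name `recession_speed_km_per_h` are purely illustrative.

```python
# Hubble's law: recession speed = Hubble-Lemaitre constant * distance.
# Assumption: H0 is the approximate "distant Universe" value quoted in this article.
H0_KM_PER_H_PER_MPC = 244_000


def recession_speed_km_per_h(distance_mpc: float, h0: float = H0_KM_PER_H_PER_MPC) -> float:
    """Return the speed (km/h) at which a galaxy `distance_mpc` megaparsecs away recedes."""
    return h0 * distance_mpc


# Illustrative distances only.
for d in (1, 10, 100):
    print(f"{d:>4} Mpc -> {recession_speed_km_per_h(d):,.0f} km/h")
```

Doubling the distance doubles the speed, which is exactly the proportionality Hubble's law describes.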

The Hubble-Lemaitre Constant: A Key to the Universe's Expansion

The Hubble-Lemaitre constant is central to understanding the expansion of the Universe. When its value is determined from observations of very distant regions of the Universe, it comes out at approximately 244,000 kilometers per hour per megaparsec: for every additional megaparsec of distance, galaxies recede about 244,000 km/h faster. A megaparsec is a distance of just over three million light years. However, measurements based on Type Ia supernovae, a class of exploding stars observed in the comparatively nearby Universe, yield a noticeably different value.
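Cosmologists usually quote the constant in kilometers per second per megaparsec rather than per hour. The short conversion below is a sketch that uses only the figures given in this section (the helper name `km_per_h_to_km_per_s` is hypothetical); it shows that 244,000 km/h per megaparsec corresponds to roughly 68 km/s per megaparsec and checks the light-year equivalent of a megaparsec.

```python
SECONDS_PER_HOUR = 3600
LIGHT_YEARS_PER_MPC = 3.26156e6  # one megaparsec expressed in light years


def km_per_h_to_km_per_s(value: float) -> float:
    """Convert a speed-per-megaparsec from km/h to km/s."""
    return value / SECONDS_PER_HOUR


h0_distant_km_s = km_per_h_to_km_per_s(244_000)
print(f"H0 (distant) ~ {h0_distant_km_s:.1f} km/s per Mpc")           # -> 67.8
print(f"1 Mpc ~ {LIGHT_YEARS_PER_MPC / 1e6:.2f} million light years")  # -> 3.26
```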

Type Ia Supernovae: Probing the Expansion of the Universe

Type Ia supernovae are especially useful because they reach a well-known peak brightness, so their apparent brightness reveals how far away they are. Their speed can be inferred from the redshift of their light: the light of an object moving away from us is shifted toward longer, redder wavelengths, and the faster it recedes, the larger the shift. When the speeds of Type Ia supernovae are compared with their distances, a different value for the Hubble-Lemaitre constant emerges: just under 264,000 kilometers per hour per megaparsec. This suggests that the Universe is expanding faster in our cosmic neighborhood than on the largest scales.
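The step of correlating speed with distance can be sketched as a straight-line fit through the origin. The code below is a minimal illustration under stated assumptions: the redshifts are small enough that speed is approximately c times z, the distances are already known from the supernovae's brightness, and the four data points are invented for demonstration rather than taken from any survey. The function names are hypothetical.

```python
# Assumptions (illustration only):
#   * low redshift, so recession speed v ~ c * z;
#   * distances (Mpc) already derived from each supernova's peak brightness;
#   * the sample below is made up, not real survey data.
C_KM_PER_S = 299_792.458  # speed of light in km/s


def speed_from_redshift(z: float) -> float:
    """Low-redshift approximation of the recession speed, in km/s."""
    return C_KM_PER_S * z


def fit_hubble_constant(distances_mpc, speeds_km_s):
    """Least-squares slope of v = H0 * d with the line forced through the origin."""
    num = sum(d * v for d, v in zip(distances_mpc, speeds_km_s))
    den = sum(d * d for d in distances_mpc)
    return num / den


# Hypothetical supernovae: (distance in Mpc, measured redshift).
sample = [(50.0, 0.0122), (120.0, 0.0295), (200.0, 0.0488), (300.0, 0.0731)]
distances = [d for d, _ in sample]
speeds = [speed_from_redshift(z) for _, z in sample]

h0 = fit_hubble_constant(distances, speeds)  # km/s per Mpc
print(f"H0 ~ {h0:.1f} km/s/Mpc ~ {h0 * 3600:,.0f} km/h/Mpc")
```

With these invented points the fit lands near 73 km/s per megaparsec, close to the 264,000 km/h per megaparsec quoted above; real analyses use far larger samples, careful distance calibration and a relativistic treatment of redshift.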

Local "Under-Density" and the Hubble Tension

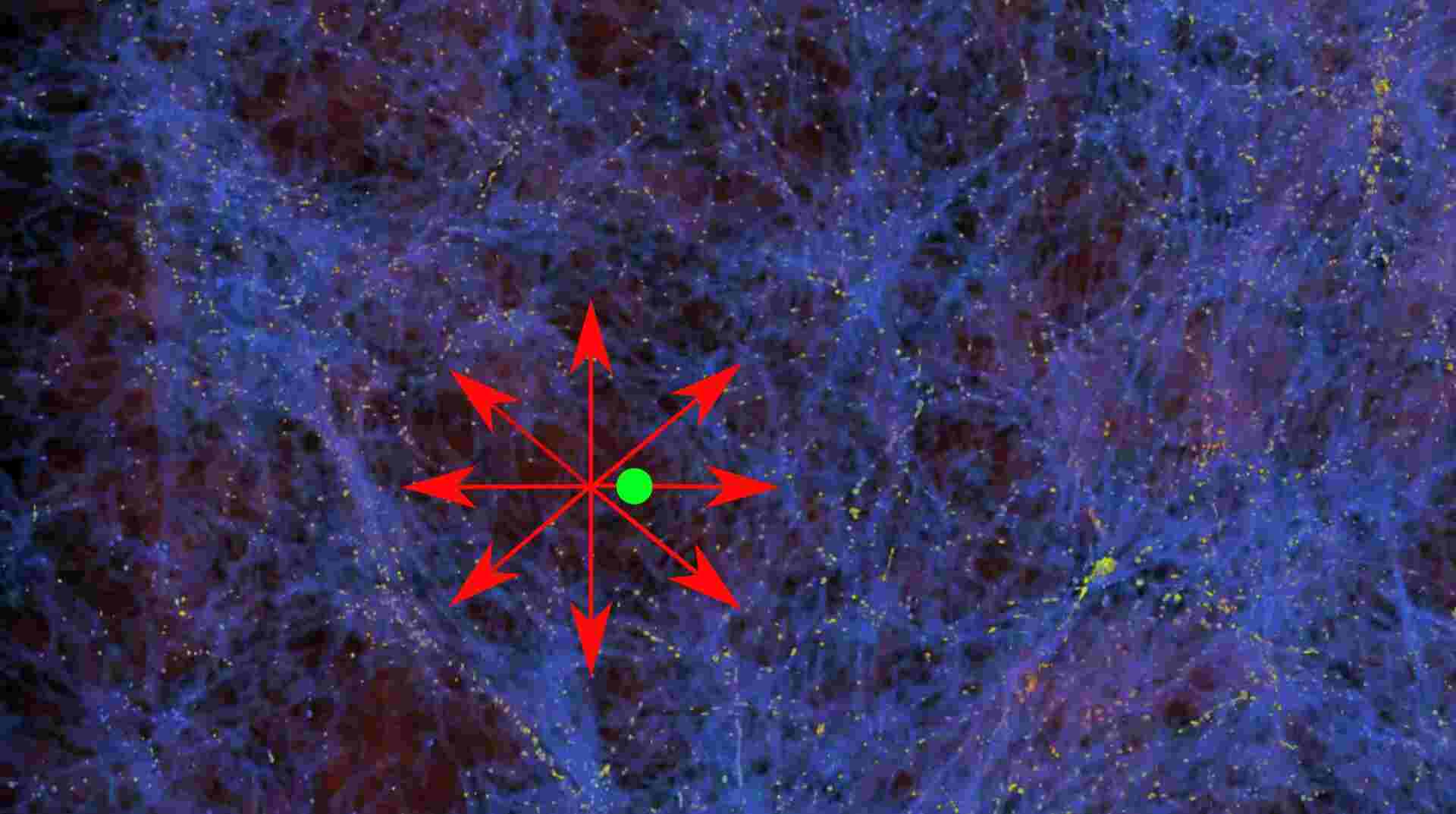

This faster local expansion raises questions about the standard model of cosmology. Prof. Dr. Pavel Kroupa from the Helmholtz Institute of Radiation and Nuclear Physics at the University of Bonn suggests that Earth sits in a region of space with relatively low matter density, much like an air bubble in a cake. Around this bubble, matter density is higher, and its gravity pulls galaxies toward the edges of the cavity, away from us. This would explain why galaxies in our vicinity are receding faster than expected, and hence why local measurements give a higher value of the Hubble-Lemaitre constant.
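How deep would such a bubble have to be? A common back-of-the-envelope estimate from linear perturbation theory in standard gravity (not the method used by the Bonn and St Andrews teams) says that a region with average density contrast δ expands at roughly H_loc ≈ H(1 − fδ/3), where f ≈ Ω_m^0.55 is the growth rate of structure. The sketch assumes Ω_m = 0.3 and plugs in the two values quoted in this article; treat the result as an order-of-magnitude guide only, since linear theory becomes inaccurate when |δ| is large. The function names are hypothetical.

```python
# Rough linear-theory estimate (assumptions, not the researchers' method):
#   * standard gravity and linear perturbation theory (crude for |delta| ~ 0.5);
#   * growth rate f ~ Omega_m ** 0.55, with Omega_m = 0.3;
#   * H_loc / H_global ~ 1 - f * delta / 3, delta = local density contrast.


def growth_rate(omega_m: float) -> float:
    """Approximate linear growth rate of structure."""
    return omega_m ** 0.55


def local_expansion_ratio(delta: float, omega_m: float = 0.3) -> float:
    """H_loc / H_global for a region with mean density contrast `delta`."""
    return 1.0 - growth_rate(omega_m) * delta / 3.0


def required_density_contrast(ratio: float, omega_m: float = 0.3) -> float:
    """Invert the relation: the delta needed for a given H_loc / H_global."""
    return -3.0 * (ratio - 1.0) / growth_rate(omega_m)


ratio = 264_000 / 244_000  # local vs distant values quoted in the article
delta = required_density_contrast(ratio)
print(f"H_loc/H_global = {ratio:.3f} -> delta ~ {delta:.2f}")
```

For the quoted ratio of about 1.08, the estimate comes out near δ ≈ −0.5, meaning the region around us would need roughly half the average cosmic density.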

The standard model of cosmology, which is based on Albert Einstein's theory of gravity, assumes that matter is distributed evenly on large scales. However, recent observations of galaxies located 600 million light years away show that they are moving four times faster than the standard model predicts. This suggests that matter is not distributed as evenly as assumed and that under-densities, or "bubbles", may contribute to the observed deviations in the Universe's expansion. According to Sergij Mazurenko from Kroupa's research group, such irregularities pose a challenge to the standard model of cosmology.

Modified Newtonian Dynamics (MOND): A New Approach to Gravity

To explain these irregularities in the distribution of matter and resolve the Hubble tension, the researchers turned to a modified theory of gravity, Modified Newtonian Dynamics (MOND), proposed by Prof. Dr. Mordehai Milgrom four decades ago. MOND keeps Newton's law of gravity at ordinary accelerations but makes gravity stronger than Newton predicts when accelerations become extremely small, as in the outer regions of galaxies. In supercomputer simulations based on MOND, the research groups from the Universities of Bonn and St Andrews found that large under-densities, or "bubbles", arise in the distribution of matter. These findings suggest that gravity may behave differently from what Einstein's theory predicts.
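The core MOND rule can be written in a few lines. The sketch below is not the researchers' cosmological simulation; it only shows how MOND changes the acceleration around a single mass. It assumes the commonly quoted acceleration scale a0 ≈ 1.2 × 10⁻¹⁰ m/s², the "simple" interpolating function ν(y) = 1/2 + √(1/4 + 1/y), and a hypothetical galaxy of 6 × 10¹⁰ solar masses treated as a point mass.

```python
import math

G = 6.674e-11     # gravitational constant, m^3 kg^-1 s^-2
A0 = 1.2e-10      # MOND acceleration scale, m/s^2 (commonly quoted value)
M_SUN = 1.989e30  # solar mass, kg
KPC = 3.0857e19   # one kiloparsec, m


def newtonian_acceleration(mass_kg: float, r_m: float) -> float:
    """Newtonian gravitational acceleration of a point mass."""
    return G * mass_kg / r_m**2


def mond_acceleration(g_newton: float, a0: float = A0) -> float:
    """MOND acceleration using the 'simple' interpolating function (an assumption)."""
    y = g_newton / a0
    nu = 0.5 + math.sqrt(0.25 + 1.0 / y)
    return nu * g_newton


def circular_speed_km_s(accel: float, r_m: float) -> float:
    """Speed of a circular orbit with centripetal acceleration `accel` at radius `r_m`."""
    return math.sqrt(accel * r_m) / 1000.0


mass = 6e10 * M_SUN  # hypothetical galaxy, treated as a point mass
for r_kpc in (2, 10, 30, 100):
    r = r_kpc * KPC
    g_n = newtonian_acceleration(mass, r)
    print(f"{r_kpc:>4} kpc  Newton {circular_speed_km_s(g_n, r):6.1f} km/s"
          f"  MOND {circular_speed_km_s(mond_acceleration(g_n), r):6.1f} km/s")
```

Close to the galaxy, where accelerations are large, the two columns nearly agree; far out, the Newtonian speed keeps falling while the MOND speed levels off near (G M a0)^(1/4), about 176 km/s for this mass. This flattening is the behavior MOND was originally designed to reproduce in galaxy rotation curves.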

If Milgrom's theory is correct, the Hubble tension could be resolved. In this picture, there would be only one value for the expansion rate of the Universe, and the apparent discrepancy in the Hubble-Lemaitre constant would arise from irregularities in the distribution of matter around us. The MOND simulations thus offer a potential solution to the Hubble tension and open new avenues for exploring the expanding Universe.

Implications and Future Research

MOND challenges our understanding of the Universe's expansion and raises intriguing possibilities for future research. If gravity behaves differently from what Einstein's theory predicts, this would affect many astronomical phenomena, such as the motion of galaxies, the formation of structures in the Universe, and even our understanding of dark matter. Further studies and observations are needed to test MOND and explore its broader consequences for our understanding of the cosmos.

Conclusion

The Hubble tension, a discrepancy between measurements of the Universe's expansion rate, has captivated scientists worldwide. Researchers from the University of Bonn and the University of St Andrews argue that MOND, a modified theory of gravity, can explain the observed irregularities in the Universe's expansion. If our region of space lies inside an under-density, or "bubble", in the distribution of matter, the Hubble tension could be resolved, offering a new perspective on the mysteries of the Universe. This alternative approach challenges the standard model of cosmology and opens the door to further exploration of the fundamental forces that shape our vast cosmos.
