Fluid mechanics researchers from Brown University and the University of Toulouse found that surfactants give the celebratory drink its stable and signature straight rise of bubbles.

Here are some scientific findings worthy of a toast: Researchers from Brown University and the University of Toulouse in France have explained why bubbles in Champagne fizz up in a straight line while bubbles in other carbonated drinks, like beer or soda, don’t.

The findings are based on a series of numerical and physical experiments, including, of course, pouring out glasses of Champagne, beer, sparkling water, and sparkling wine. The results not only explain what gives Champagne its line of bubbles but may hold important implications for understanding bubbly flows in the field of fluid mechanics.

“This is the type of research that I've been working on for years,” said Brown Engineering professor Roberto Zenit, who was one of the paper’s authors. “Most people have never seen an ocean seep or an aeration tank but most of them have had a soda, a beer, or a glass of Champagne. By talking about Champagne and beer, our master plan is to make people understand that fluid mechanics is important in their daily lives.”

The team’s goal was to investigate the stability of bubble chains in carbonated drinks. Part of the signature experience of enjoying these beverages is the tiny or large bubbles that form when the drink is poured, creating a visible chain of bubbles and fizz. The fluid mechanics involved differ depending on the drink and its ingredients.

When it comes to Champagne and sparkling wine, for instance, the gas bubbles that continuously appear rise rapidly to the top in a single-file line and keep doing so for some time. This is known as a stable bubble chain. With other carbonated drinks, like beer, many bubbles veer off to the side, making it look like multiple bubbles are coming up at once. This means the bubble chain isn’t stable.

The chain of bubbles in Champagne and sparkling wine rises in a straight line. In many beers, the bubbles veer off to the side as they rise, making it look like multiple bubbles are rising at once.

The researchers set out to explore what makes bubble chains stable and whether they could recreate that stability, making unstable chains as stable as they are in Champagne or prosecco.

The results of their experiments indicate that the stable bubble chains in Champagne and other sparkling wines are due to ingredients that act like surfactants, which are soap-like compounds. These surfactant-like molecules help reduce the surface tension between the liquid and the gas bubbles, making for a smooth rise to the top.

“The theory is that in Champagne these contaminants that act as surfactants are the good stuff,” said Zenit, senior author of the paper. “These protein molecules that give flavor and uniqueness to the liquid are what makes the bubble chains they produce stable.”

The experiments also showed the stability of bubbles is impacted by the size of the bubbles themselves. They found that the chains with large bubbles have a wake similar to that of bubbles with contaminants, leading to a smooth rise and stable chains.

In beverages, however, bubbles are always small, which makes surfactants the key ingredient in producing straight and stable chains. Beer, for example, also contains surfactant-like molecules, but depending on the type of beer, the bubbles may or may not rise in straight chains. In contrast, bubble chains in carbonated water are always unstable, since no contaminants are helping the bubbles move smoothly through the wake flows left behind by the other bubbles in the chain.

“This wake, this velocity disturbance, causes the bubbles to be knocked out,” Zenit said. “Instead of having one line, the bubbles end up going up in more of a cone.”

The results of the new study go well beyond understanding the science that goes into celebratory toasts, the researchers said. The findings provide a general framework in fluid mechanics for understanding the formation of clusters in bubbly flows, which have economic and societal value.

Technologies that use bubble-induced mixing, like aeration tanks at water treatment facilities, for instance, would benefit greatly from researchers having a clearer understanding of how bubbles cluster, their origins, and how to predict their appearance. In nature, understanding these flows may help better explain ocean seeps in which methane and carbon dioxide emerge from the bottom of the ocean.

The experiments the research team ran were relatively straightforward — and some could even be run in any local pub. To observe the bubble chains, the researchers poured glasses of carbonated beverages including Pellegrino sparkling water, Tecate beer, Charles de Cazanove Champagne, and a Spanish-style brut.

To study the bubble chains and what goes into making them stable, they filled a small rectangular plexiglass container with liquid and inserted a needle at the bottom so they could pump in gas to create different kinds of bubble chains.

In this figure from the paper, the researchers show that when the frequency of gas bubbles in a clean liquid is increased to the rate of bubble chains in Champagne, the chain quickly loses stability. Courtesy of Roberto Zenit.

The researchers then gradually added surfactants or increased bubble size. They found that when they made the bubbles larger, they could make unstable bubble chains become stable, even without surfactants. When they kept a fixed bubble size and only added surfactants, they found they could also go from unstable chains to stable ones.

The two experiments indicate that there are two distinct ways to stabilize a bubble chain: adding surfactants or making the bubbles bigger, the researchers explain in the paper.

The researchers ran numerical simulations to answer some of the questions they couldn’t resolve through the physical experiments, such as how much surfactant goes into the gas bubbles, the weight of the bubbles, and their precise velocity.

They plan to keep looking into the mechanics of stable bubble chains to apply them to different aspects of fluid mechanics, especially in bubbly flows.

“We’re interested in how these bubbles move and their relationship to industrial applications and in nature,” Zenit said.

How to resolve AdBlock issue?

How to resolve AdBlock issue?

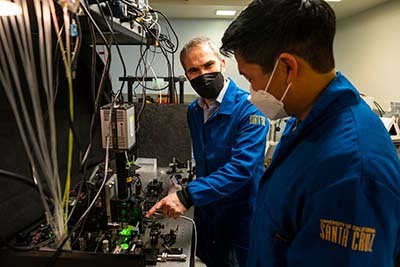

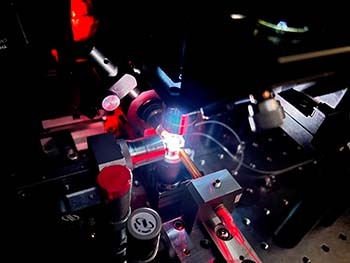
