New research from the University of Georgia shows that artificial intelligence can be used to find planets outside of our solar system. The recent study demonstrated that machine learning can detect exoplanets, a finding that could reshape how scientists detect and identify new planets far from Earth.

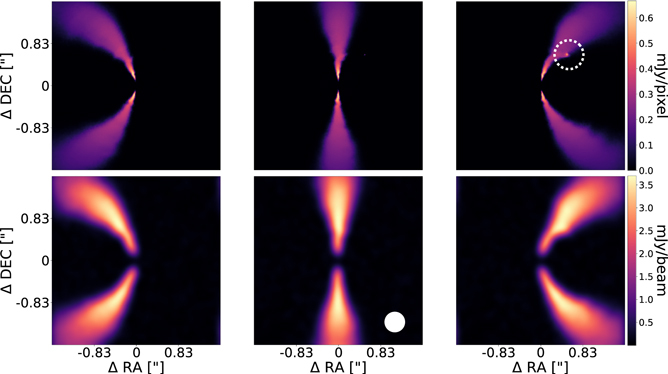

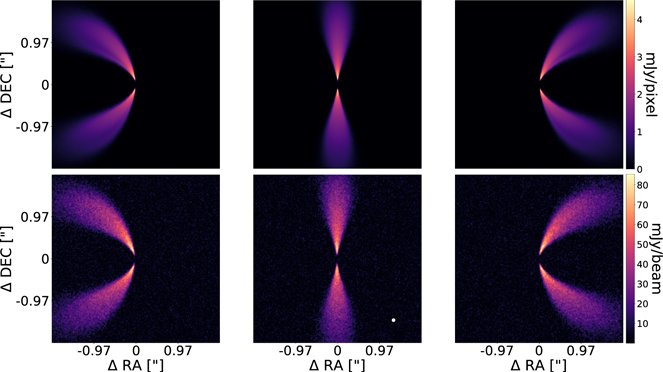

“One of the novel things about this is analyzing environments where planets are still forming,” said Jason Terry, a doctoral student in the UGA Franklin College of Arts and Sciences department of physics and astronomy and lead author on the study. “Machine learning has rarely been applied to the type of data we’re using before, specifically looking at systems that are still actively forming planets.”

The first exoplanet was found in 1992, and though more than 5,000 are known to exist, those have been among the easiest for scientists to find. Exoplanets at the formation stage are difficult to see for two primary reasons: they are far away, often hundreds of light-years from Earth, and the discs where they form are thicker than the distance from the Earth to the sun. Data suggest the planets tend to be in the middle of these discs, leaving a signature of dust and gases kicked up by the planet.

The research showed that artificial intelligence can help scientists overcome these difficulties.

“This is a very exciting proof of concept,” said Cassandra Hall, assistant professor of astrophysics, principal investigator of the Exoplanet and Planet Formation Research Group, and co-author of the study. “The power here is that we used exclusively synthetic telescope data generated by computer simulations to train this AI, and then applied it to real telescope data. This has never been done before in our field, and paves the way for a deluge of discoveries as James Webb Telescope data rolls in.”

The James Webb Space Telescope, launched by NASA in 2021, has inaugurated a new level of infrared astronomy, bringing stunning new images and reams of data for scientists to analyze. It’s just the latest iteration of the agency’s quest to find exoplanets, which are scattered unevenly across the galaxy. The Nancy Grace Roman Space Telescope, a 2.4-meter survey telescope scheduled to launch in 2027 that will study dark energy and search for exoplanets, will be the next major expansion in capability, and in the delivery of information and data, to comb through the universe for life.

The Webb telescope lets scientists view exoplanetary systems in exceptionally bright, high-resolution detail. The forming environments themselves are of great interest because they determine the resulting solar system.

“The potential for good data is exploding, so it’s a very exciting time for the field,” Terry said.

New analytical tools are essential

Next-generation analytical tools are urgently needed to handle this high-quality data, so scientists can spend more time on theoretical interpretation rather than meticulously combing through the data in search of tiny signatures.

“In a sense, we’ve sort of just made a better person,” Terry said. “To a large extent the way we analyze this data is you have dozens, hundreds of images for a specific disc and you just look through and ask ‘is that a wiggle?’ then run a dozen simulations to see if that’s a wiggle and … it’s easy to overlook them – they’re really tiny, and it depends on the cleaning, and so this method is one, really fast, and two, its accuracy gets planets that humans would miss.”

Terry says this is what machine learning can already accomplish: extending human capacity to save time and money, and helping to guide scientific time, investments, and new proposals efficiently.

“There remains, within science and particularly astronomy in general, skepticism about machine learning and of AI, a valid criticism of it being this black box – where you have hundreds of millions of parameters and somehow you get out an answer. But we think we’ve demonstrated pretty strongly in this work that machine learning is up to the task. You can argue about interpretation. But in this case, we have very concrete results that demonstrate the power of this method.”

The research team’s work is designed to lay a concrete foundation for future applications to observational data, demonstrating the method’s effectiveness using simulated observations.
