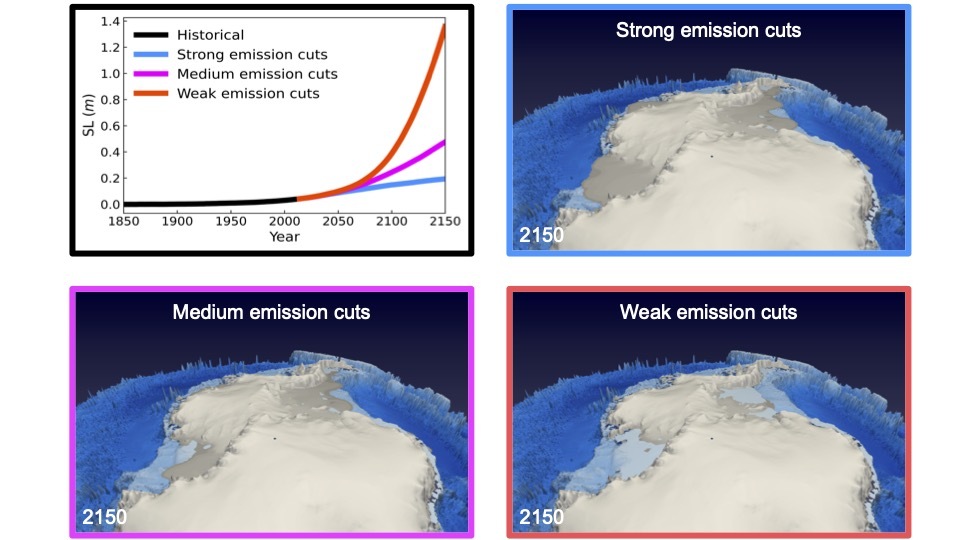

A study by an international team of scientists shows that an irreversible loss of the West Antarctic and Greenland ice sheets, and a corresponding rapid acceleration of sea level rise, may be imminent if global temperature change cannot be stabilized below 1.8°C relative to preindustrial levels.

Coastal populations worldwide are already bracing for rising seas. However, planning countermeasures to prevent inundation and other damage has been extremely difficult, since the latest climate model projections presented in the Sixth Assessment Report of the Intergovernmental Panel on Climate Change (IPCC) do not agree on how quickly the major ice sheets will respond to global warming.

Melting ice sheets are potentially the most significant contributor to sea level change, and historically the hardest to predict because the physics governing their behavior is notoriously complex. “Moreover, computer models that simulate the dynamics of the ice sheets in Greenland and Antarctica often do not account for the fact that ice sheet melting will affect ocean processes, which, in turn, can feed back onto the ice sheet and the atmosphere,” says Jun Young Park, a Ph.D. student at the IBS Center for Climate Physics and Pusan National University, Busan, South Korea and first author of the study.

Using a new supercomputer model, which for the first time captures the coupling between ice sheets, icebergs, ocean, and atmosphere, climate researchers found that a runaway ice sheet and sea level effect can be prevented only if the world reaches net zero carbon emissions before 2060.

“If we miss this emission goal, the ice sheets will disintegrate and melt at an accelerated pace, according to our calculations. If we don’t take any action, retreating ice sheets will continue to increase sea level by at least 100 cm within the next 130 years. This would be on top of other contributions, such as the thermal expansion of ocean water,” says Prof. Axel Timmermann, co-author of the study and Director of the IBS Center for Climate Physics.

Ice sheets respond to atmospheric and oceanic warming in delayed and often unpredictable ways. Previously, scientists have highlighted the importance of subsurface ocean melting as a critical process, which can trigger runaway effects in the major marine-based ice sheets in Antarctica. “However, according to our supercomputer simulations, the effectiveness of these processes may have been overestimated in recent studies,” says Prof. June Yi Lee from the IBS Center for Climate Physics and Pusan National University and co-author of the study. “We see that sea ice and atmospheric circulation changes around Antarctica also play a crucial role in controlling the amount of ice sheet melting with repercussions for global sea level projections,” she adds.

The study highlights the need to develop more complex Earth system models, which capture the different climate components and their interactions. Furthermore, new observational programs are needed to constrain the representation of physical processes in Earth system models, particularly from highly active regions, such as Pine Island Glacier in Antarctica.

“One of the key challenges in simulating ice sheets is that even small-scale processes can play a crucial role in the large-scale response of an ice sheet and for the corresponding sea-level projections. Not only do we have to include the coupling of all components, as we did in our current study, but we also need to simulate the dynamics at the highest possible spatial resolution using some of the fastest supercomputers,” summarizes Axel Timmermann.
