Despite remarkable progress in artificial intelligence (AI), several studies show that AI systems do not improve radiologists' diagnostic performance. Diagnostic errors contribute to 40,000 to 80,000 deaths annually in U.S. hospitals. This gap creates a pressing need: to build next-generation computer-aided diagnosis algorithms that are more interactive, so that the benefits of AI in improving medical diagnosis can be fully realized.

That’s just what Hien Van Nguyen, University of Houston associate professor of electrical and computer engineering, is doing with a new $933,812 grant from the National Cancer Institute. He will focus on lung cancer diagnostics.

“Current AI systems focus on improving stand-alone performances while neglecting team interaction with radiologists,” said Van Nguyen. “This project aims to develop a computational framework for AI to collaborate with human radiologists on medical diagnosis tasks.”

That framework uses a unique combination of eye-gaze tracking, intention reverse engineering, and reinforcement learning to decide when and how an AI system should interact with radiologists.

To maximize time efficiency and minimize distraction from clinical work, Van Nguyen is designing a user-friendly, minimally intrusive interface for radiologist-AI interaction.

The project evaluates the approaches for two clinically important applications: lung nodule detection and pulmonary embolism. Lung cancer is the second most common cancer, and pulmonary embolism is the third most common cause of cardiovascular death.

“Studying how AI can help radiologists reduce these diseases' diagnostic errors will have significant clinical impacts,” said Van Nguyen. “This project will significantly advance the knowledge of the field by addressing important, but largely under-explored questions.”

The questions include when and how AI systems should interact with radiologists and how to model radiologists' visual scanning process.

“Our approaches are creative and original because they represent a substantive departure from the existing algorithms. Instead of continuously providing AI predictions, our system uses a gaze-assisted reinforcement learning agent to determine the optimal time and type of information to present to radiologists,” said Van Nguyen.

“Our project will advance the strategies for designing user interfaces for doctor-AI interaction by combining gaze-sensing and novel AI methodologies.”


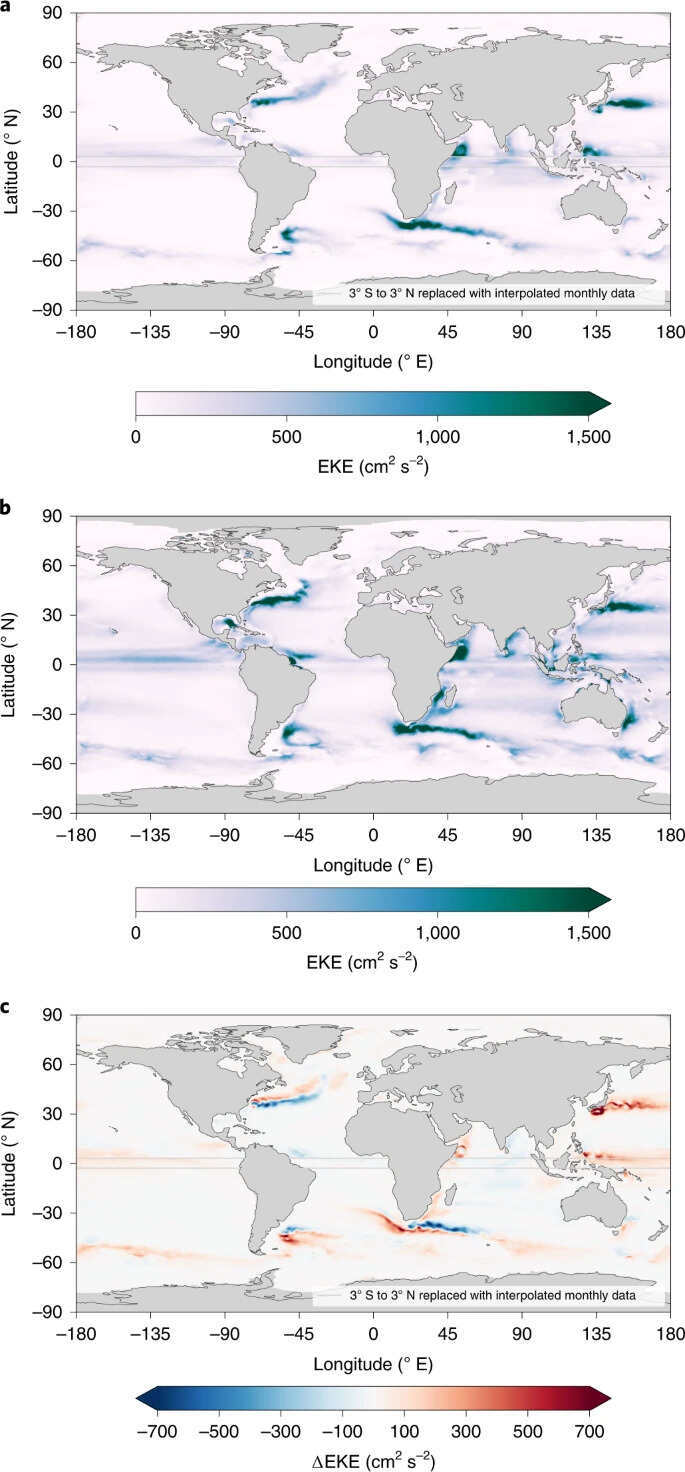

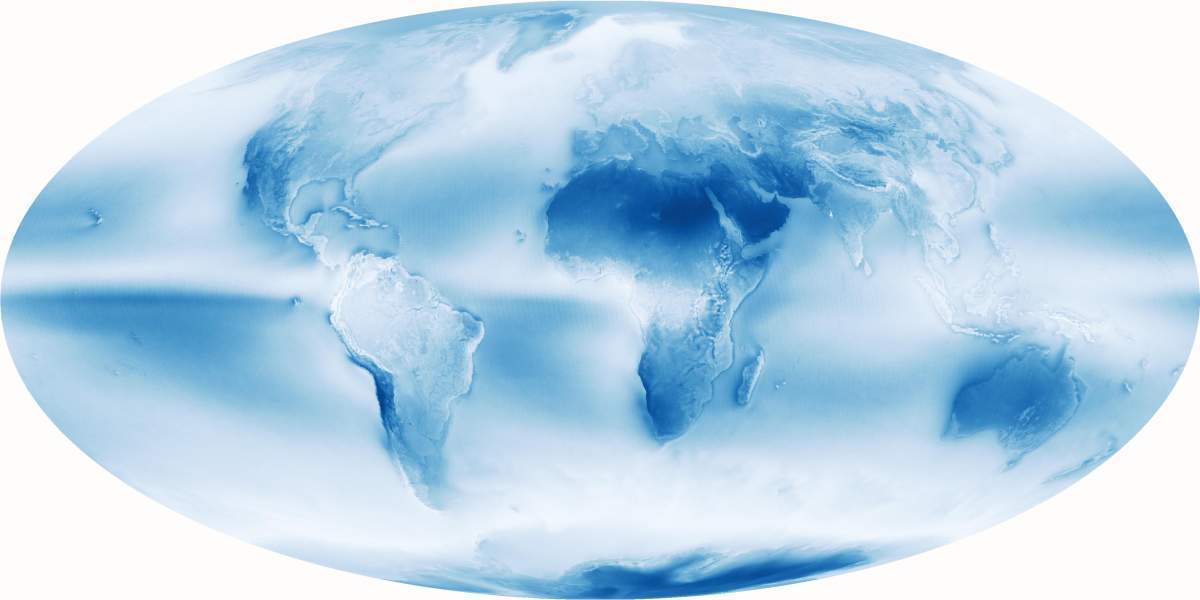

As early as the 1970s, when scientists analyzed data from the first meteorological satellites, they were surprised to find that the two hemispheres reflect the same amount of solar radiation. The reflectivity of solar radiation is known in scientific lingo as “albedo.” To better understand what albedo is, think about driving at night: It is easy to spot the intermittent white lines, which reflect light from the car’s headlights well, but difficult to discern the dark asphalt. The same is true when observing Earth from space: The ratio of the solar energy reflected by each region to the solar energy hitting it is determined by various factors. One of them is the ratio of dark oceans to bright land, which differ in reflectivity, just like asphalt and intermittent white lines. The land area of the Northern Hemisphere is about twice as large as that of the Southern, and indeed, when measured near the surface of the Earth under clear skies, there is more than a 10 percent difference in albedo. Still, both hemispheres appear to be equally bright from space.
