Researchers from DTU Physics describe in an article in Science how, by simple means, they have created a 'carpet' of thousands of quantum-mechanically entangled light pulses.

Quantum mechanics is one of the most successful theories in natural science. Although its predictions are often counterintuitive, no experiment conducted to date has produced a result that the theory could not adequately describe.

Along with colleagues at bigQ (Center for Macroscopic Quantum States - a Danish National Research Foundation Center of Excellence), center leader Prof. Ulrik Lund Andersen is working on understanding and utilizing macroscopic quantum effects:

"The prevailing view among researchers is that quantum mechanics is a universally valid theory and therefore also applicable in the macroscopic day-to-day world we normally live in. This also means that it should be possible to observe quantum phenomena on a large scale, and this is precisely what we strive to do in the Danish National Research Foundation Center of Excellence bigQ," says Ulrik Lund Andersen.

In a new article in the prestigious international journal Science, the researchers describe how they have succeeded in creating entangled, squeezed light at room temperature, a discovery that could pave the way for less expensive and more powerful quantum supercomputers.

Their work concerns one of the most notoriously difficult quantum phenomena to understand: entanglement. It describes how physical objects can be brought into a state in which they are so intricately linked that they can no longer be described individually.

If two objects are entangled, they must be seen as a unified whole regardless of how far apart they are. They will still behave as one unit--and if the objects are measured individually, the results will be correlated to such a degree that they cannot be explained by the classical laws of nature. This is only possible using quantum mechanics.
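For readers who want to see what "correlated beyond the classical laws" can mean in numbers, here is a minimal sketch based on a standard textbook model, not on the researchers' own analysis. It assumes two light beams in a two-mode squeezed vacuum state with squeezing parameter `r`, uses the convention that the vacuum variance is 1/2, and applies the well-known Duan inseparability criterion: for any non-entangled pair, the variance of the position difference plus the variance of the momentum sum is at least 2. All function and variable names are illustrative.

```python
import math


def two_mode_squeezed_covariance(r):
    """Covariance matrix of a two-mode squeezed vacuum, ordered (x1, p1, x2, p2).

    Assumed convention: hbar = 1, so each vacuum quadrature has variance 1/2.
    """
    c = math.cosh(2 * r) / 2
    s = math.sinh(2 * r) / 2
    return [
        [c, 0, s, 0],
        [0, c, 0, -s],
        [s, 0, c, 0],
        [0, -s, 0, c],
    ]


def duan_sum(cov):
    """Return Var(x1 - x2) + Var(p1 + p2) for a given covariance matrix."""
    var_x_diff = cov[0][0] + cov[2][2] - 2 * cov[0][2]
    var_p_sum = cov[1][1] + cov[3][3] + 2 * cov[1][3]
    return var_x_diff + var_p_sum


# Any separable (non-entangled) state satisfies duan_sum >= 2 in this convention.
SEPARABLE_BOUND = 2.0


def is_entangled(r):
    """True if the modelled state violates the separable bound."""
    return duan_sum(two_mode_squeezed_covariance(r)) < SEPARABLE_BOUND


if __name__ == "__main__":
    for r in (0.0, 0.5, 1.0):
        total = duan_sum(two_mode_squeezed_covariance(r))
        print(f"r = {r}: variance sum = {total:.4f}, entangled = {is_entangled(r)}")
```

In this model the variance sum equals 2·exp(−2r), so without squeezing (`r = 0`) the beams sit exactly at the classical limit, and any amount of squeezing pushes the joint fluctuations below what two independent objects could show.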

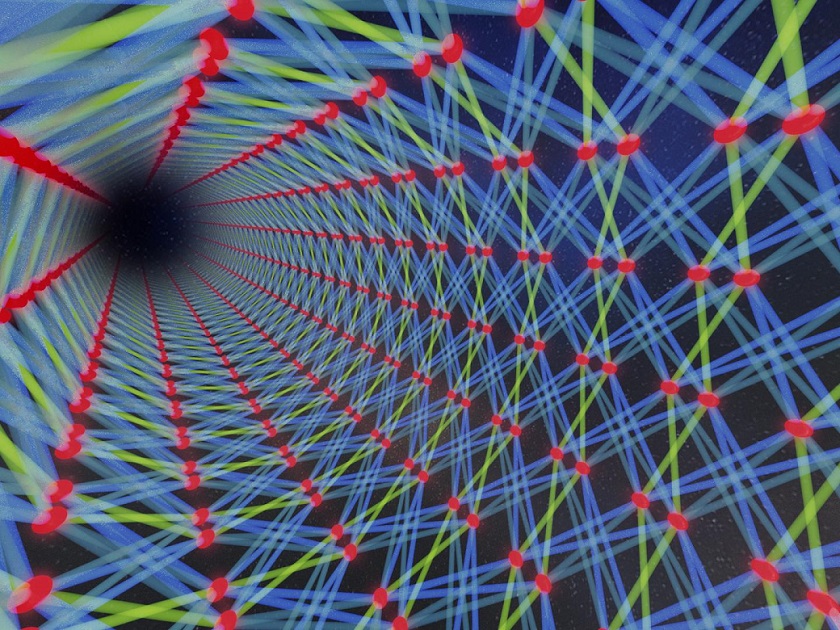

Entanglement is not restricted to pairs of objects. In their efforts to observe quantum phenomena on a macroscopic scale, the researchers at bigQ managed to create a network of 30,000 entangled pulses of light arranged in a two-dimensional lattice distributed in space and time. It is almost like when a myriad of colored threads are woven together into a patterned blanket.

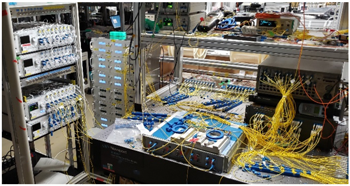

The researchers have produced light beams with special quantum mechanical properties (squeezed states) and woven them together using optical fiber components to form an extremely entangled quantum state with a two-dimensional lattice structure--also called a cluster state.

"As opposed to traditional cluster states, we make use of the temporal degree of freedom to obtain the two-dimensional entangled lattice of 30,000 light pulses. The experimental setup is surprisingly simple. Most of the effort was in developing the idea of the cluster state generation," says Mikkel Vilsbøll Larsen, the lead author of the work.

Creating such an extensive degree of quantum physical entanglement is--in itself--interesting basic research.

The cluster state is also a potential resource for creating an optical quantum computer. This approach is an interesting alternative to the more widespread superconducting technologies, as everything takes place at room temperature.

Also, the long coherence time of the laser light can be utilized--meaning that the light remains a precisely defined wave even over very long distances.

An optical quantum computer will therefore not require costly and advanced refrigeration technology. At the same time, its information-carrying light-based qubits in the laser light will be much more durable than their ultra-cold electronic relatives used in superconductors.

"Through the distribution of the generated cluster state in space and time, an optical quantum computer can also more easily be scaled to contain hundreds of qubits. This makes it a potential candidate for the next generation of larger and more powerful quantum computers," adds Ulrik Lund Andersen.
