Nothing was going right in the Sun! In the early 2000s, the abundances of the chemical elements on its surface were revised downwards, and astrophysicists could no longer reconcile the values predicted by their standard model with these new data. Although they have been called into question, these abundances have held up through several new analyses. They are therefore here to stay, and it is the solar models that will have to evolve, especially since they serve as a reference for the study of stars in general. A team from the University of Geneva (UNIGE) in Switzerland, in collaboration with the University of Liège in Belgium, has developed a new theoretical model that solves part of the problem: by taking into account the rotation of the Sun, which has varied over time, and the magnetic fields it generates, the scientists have shown that it is possible to explain the Sun's chemical structure.

“The Sun is the star that we can characterize best. It thus constitutes a fundamental test for our understanding of stellar physics. We have abundance measurements of its chemical elements, but also measurements of its internal structure, as we do for the Earth, thanks to seismology,” explains Patrick Eggenberger, lecturer and researcher at the UNIGE Department of Astronomy and first author of the study.

These observations must agree with the predictions of the theoretical models that attempt to explain the Sun's evolution. How does the Sun burn the hydrogen in its core? How is the energy produced there transported to the outer layers? And how do the chemical elements move under the effect of rotation and magnetic fields?

The standard model of the Sun

“The standard solar model used until now treats our star in a simplified way, on the one hand with regard to the transport of chemical elements in its deepest layers, and on the other hand with regard to its rotation and its internal magnetic fields, which have been completely neglected up to now,” emphasizes Gaël Buldgen, a researcher at the UNIGE Department of Astronomy and co-author of the study.

Even so, all of this worked satisfactorily until the early 2000s, when an international scientific team drastically revised the solar abundances on the basis of a finer analysis. These new abundances sent ripples through the field of solar modelling. From then on, no model was able to reproduce the data obtained by helioseismology, i.e. the study of the vibrations of the Sun, and in particular the abundance of helium in the Sun's envelope.

New model

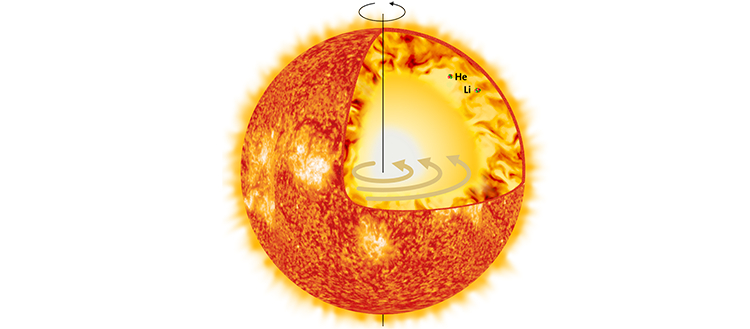

The new model developed by the UNIGE team includes not only the history of the Sun's rotation, which was probably faster in the past, but also the magnetic instabilities that this rotation generates. “We must take into account simultaneously the effects of rotation and magnetic fields on the transport of chemical elements in our stellar models. It is important for the Sun as well as for the general physics of stars, with a direct impact on the chemical evolution of the Universe, since the chemical elements so important for life on Earth are manufactured in the cores of stars,” says Patrick Eggenberger.

The new model succeeds in correctly predicting the concentration of helium in the outer layers of the Sun, as well as that of lithium, which had also resisted modelling until now. “The helium abundance is correctly reproduced by the new model because the internal rotation of the Sun imposed by the magnetic fields generates turbulent mixing, which prevents this element from sinking too quickly towards the center of the star; at the same time, the abundance of lithium observed on the solar surface is also reproduced because this same mixing transports it to the hot regions where it is destroyed,” explains Patrick Eggenberger.

The problem is not fully resolved

Not all the challenges posed by helioseismology are solved by the new model, however: “Thanks to helioseismology, we know with remarkable precision, to within 500 km, where the convective motions of matter begin: 199,500 km below the surface of the Sun. However, the theoretical models of the Sun predict a depth that is off by 10,000 km!” explains Sébastien Salmon, a researcher at UNIGE and co-author of the article. Although the problem persists with the new model, the work opens a new avenue for understanding it: “With the new model of this work, we shed light on the physical processes that can help us resolve this critical disagreement.”
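To put these figures in perspective, here is a minimal sketch of the arithmetic. The depth, precision and offset are the values quoted above; the solar radius of 695,700 km is an assumption added for illustration (the IAU nominal value), not a number taken from the study, and the function names are hypothetical.

```python
# Values quoted in the article, in kilometres.
DEPTH_KM = 199_500          # depth at which convective motions begin
PRECISION_KM = 500          # helioseismic precision on that depth
MODEL_OFFSET_KM = 10_000    # mismatch of the theoretical models

# Assumption, not from the article: IAU nominal solar radius, in kilometres.
SOLAR_RADIUS_KM = 695_700


def convective_base_fraction(depth_km, radius_km=SOLAR_RADIUS_KM):
    """Position of the base of the convective zone as a fraction of the solar radius."""
    return (radius_km - depth_km) / radius_km


def offset_in_units_of_precision(offset_km, precision_km):
    """How many times larger the model mismatch is than the measurement precision."""
    return offset_km / precision_km


fraction = convective_base_fraction(DEPTH_KM)
ratio = offset_in_units_of_precision(MODEL_OFFSET_KM, PRECISION_KM)

print(f"Base of the convective zone: about {fraction:.3f} solar radii from the center")
print(f"Model mismatch: {ratio:.0f} times the measurement precision")
```

Under that assumed radius, convection begins at roughly 71% of the way from the center to the surface, and the mismatch of the models is twenty times larger than the precision of the measurement, which is why the disagreement is described as critical.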

Update for similar stars

“We are going to have to revise the masses, radii and ages obtained for the solar-type stars that we have studied so far,” underlines Gaël Buldgen, detailing the next steps. Indeed, in the vast majority of cases, solar physics is applied to the study of stars that resemble the Sun. Therefore, if the models used to analyze the Sun are modified, the same update must be carried out for other stars similar to ours.

Patrick Eggenberger adds: “This is particularly important if we want to better characterize the host stars of planets, for example within the framework of the PLATO mission.” This observatory of 24 telescopes is scheduled to fly to the Lagrange point L2 (1.5 million kilometers from Earth, on the side opposite the Sun) in 2026 to discover and characterize small planets and to refine the characteristics of their host stars.
