A breakthrough astrophysics code named Octo-Tiger simulates the evolution of self-gravitating, rotating systems of arbitrary geometry. It combines adaptive mesh refinement with a new parallelization method to achieve superior speeds.

This new code for modeling stellar collisions runs faster than the established code used for numerical simulations. The research came from a unique collaboration between experimental computer scientists and astrophysicists in the Louisiana State University Department of Physics & Astronomy, the LSU Center for Computation & Technology, Indiana University Kokomo, and Macquarie University, Australia. The work culminated in over a year of benchmark testing and scientific simulations and was supported by multiple NSF grants, including one specifically designed to break the barrier between computer science and astrophysics.

"Thanks to a significant effort across this collaboration, we now have a reliable computational framework to simulate stellar mergers," said Patrick Motl, professor of physics at Indiana University Kokomo. "By substantially reducing the computational time to complete a simulation, we can begin to ask new questions that could not be addressed when a single-merger simulation was precious and very time-consuming. We can explore more parameter space, examine a simulation at very high spatial resolution or for longer times after a merger, and we can extend the simulations to include more complete physical models by incorporating radiative transfer, for example."

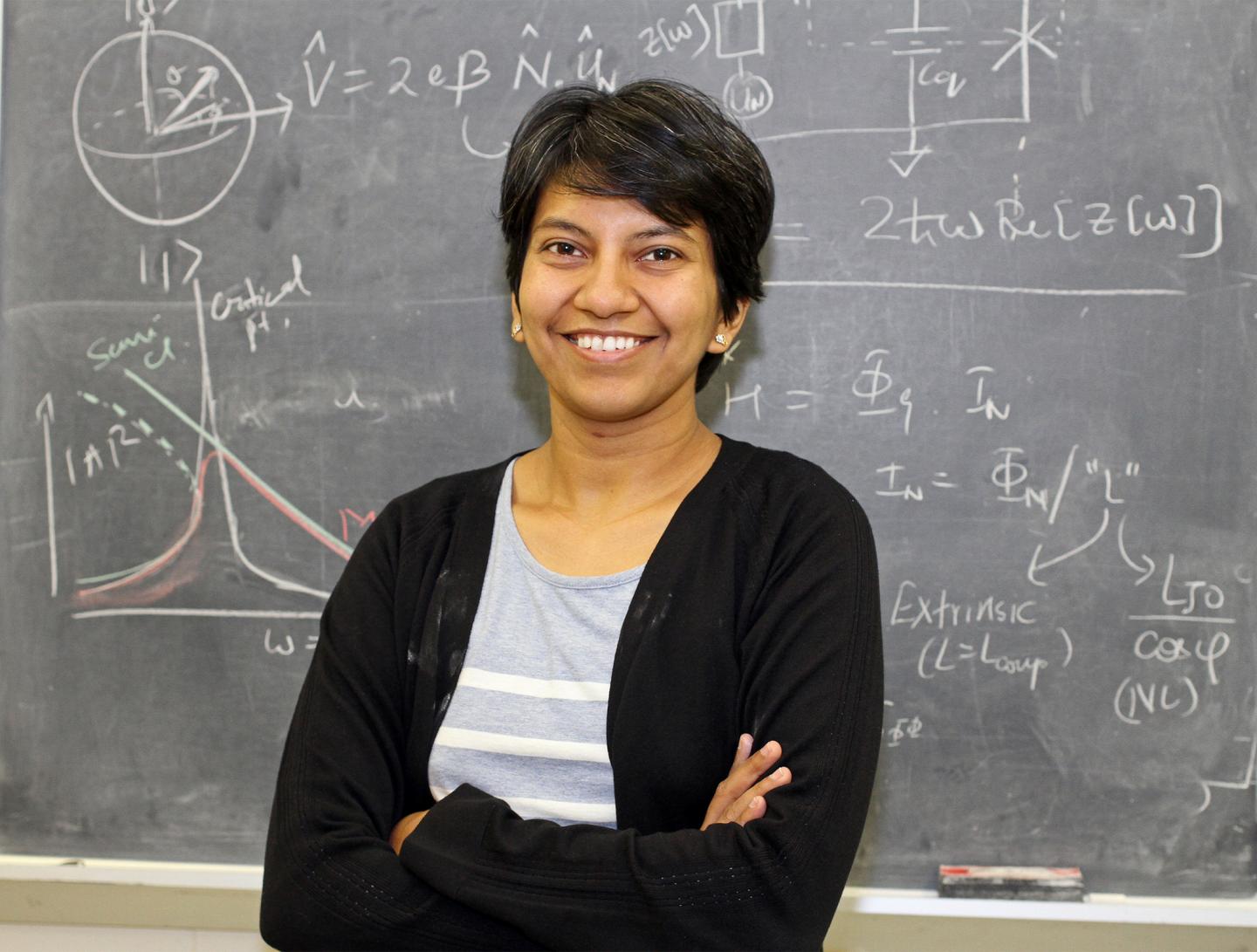

A recently published paper investigates the code's performance and precision through benchmark testing. The authors are Dominic C. Marcello, postdoctoral researcher; Sagiv Shiber, postdoctoral researcher; Juhan Frank, professor; Geoffrey C. Clayton, professor; Patrick Diehl, research scientist; and Hartmut Kaiser, research scientist, all at Louisiana State University, together with collaborators Orsola De Marco, professor at Macquarie University, and Patrick M. Motl, professor at Indiana University Kokomo. They compared their results to analytic solutions, where known, and to other grid-based codes, such as the popular FLASH. They also computed the interaction between two white dwarfs, from the early mass transfer through to the merger, and compared the results with past simulations of similar systems.

"A test on Australia's fastest supercomputer, Gadi, showed that Octo-Tiger, running on a core count over 80,000, displays excellent performance for large models of merging stars," De Marco said. "With Octo-Tiger, we can not only reduce the wait time dramatically, but our models can answer many more of the questions we care to ask."

Octo-Tiger is currently optimized to simulate the merger of well-resolved stars that can be approximated by barotropic structures, such as white dwarfs or main-sequence stars. The gravity solver conserves angular momentum to machine precision, thanks to a correction algorithm. The code is parallelized with HPX, which allows work and communication to overlap and leads to excellent scaling, so large problems can be solved in shorter time frames.
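The idea of overlapping work and communication can be illustrated with a minimal sketch. It is not Octo-Tiger's code and does not use HPX: it assumes Python's standard-library futures as a stand-in for an asynchronous task-based runtime, and the names `fetch_ghost_cells`, `update_interior`, and `step` are hypothetical. A simulated data transfer from neighboring regions is launched as a background task, the part of the update that needs no remote data runs in the meantime, and only the boundary cells wait for the transfer to finish.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def fetch_ghost_cells(neighbor_values, delay=0.05):
    """Hypothetical stand-in for receiving boundary data from a neighbor."""
    time.sleep(delay)  # simulated network latency, not a real transfer
    return list(neighbor_values)


def update_interior(cells):
    """Average each interior cell with its two local neighbors.

    This work needs no remote data, so it can run during the transfer.
    """
    return [(cells[i - 1] + cells[i] + cells[i + 1]) / 3.0
            for i in range(1, len(cells) - 1)]


def step(cells, left_neighbor, right_neighbor):
    """One smoothing step that overlaps communication with computation."""
    with ThreadPoolExecutor(max_workers=2) as pool:
        # Start both simulated transfers as asynchronous tasks.
        left = pool.submit(fetch_ghost_cells, left_neighbor)
        right = pool.submit(fetch_ghost_cells, right_neighbor)

        # Overlap: do the local work while the transfers are in flight.
        interior = update_interior(cells)

        # Only the boundary cells depend on the futures.
        left_ghost = left.result()[-1]
        right_ghost = right.result()[0]

    first = (left_ghost + cells[0] + cells[1]) / 3.0
    last = (cells[-2] + cells[-1] + right_ghost) / 3.0
    return [first] + interior + [last]
```

Calling `step([3.0, 6.0, 9.0], [0.0], [12.0])` returns `[3.0, 6.0, 9.0]`. In a blocking design the interior update would start only after both transfers had completed; here it proceeds while they are pending, which is the general pattern that future-based runtimes make convenient.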

"This paper demonstrates how an asynchronous task-based runtime system can be used as a practical alternative to Message Passing Interface to support an important astrophysical problem," Diehl said.

The research outlines the current and planned areas of development aimed at tackling several physical phenomena connected to observations of transients.

"While our particular research interest is in stellar mergers and their aftermath, there are a variety of problems in computational astrophysics that Octo-Tiger can address with its basic infrastructure for self-gravitating fluids," Motl said.

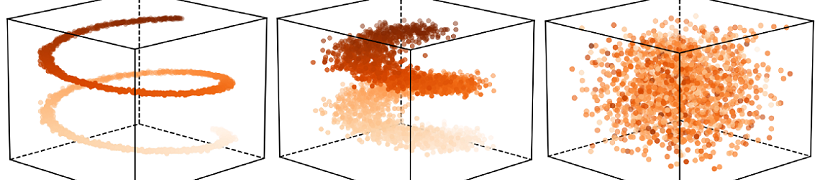

The animation was prepared by Shiber, who says: "Octo-Tiger shows remarkable performance both in the accuracy of the solutions and in scaling to tens of thousands of cores. These results demonstrate Octo-Tiger as an ideal code for modeling mass transfer in binary systems and in simulating stellar mergers."
