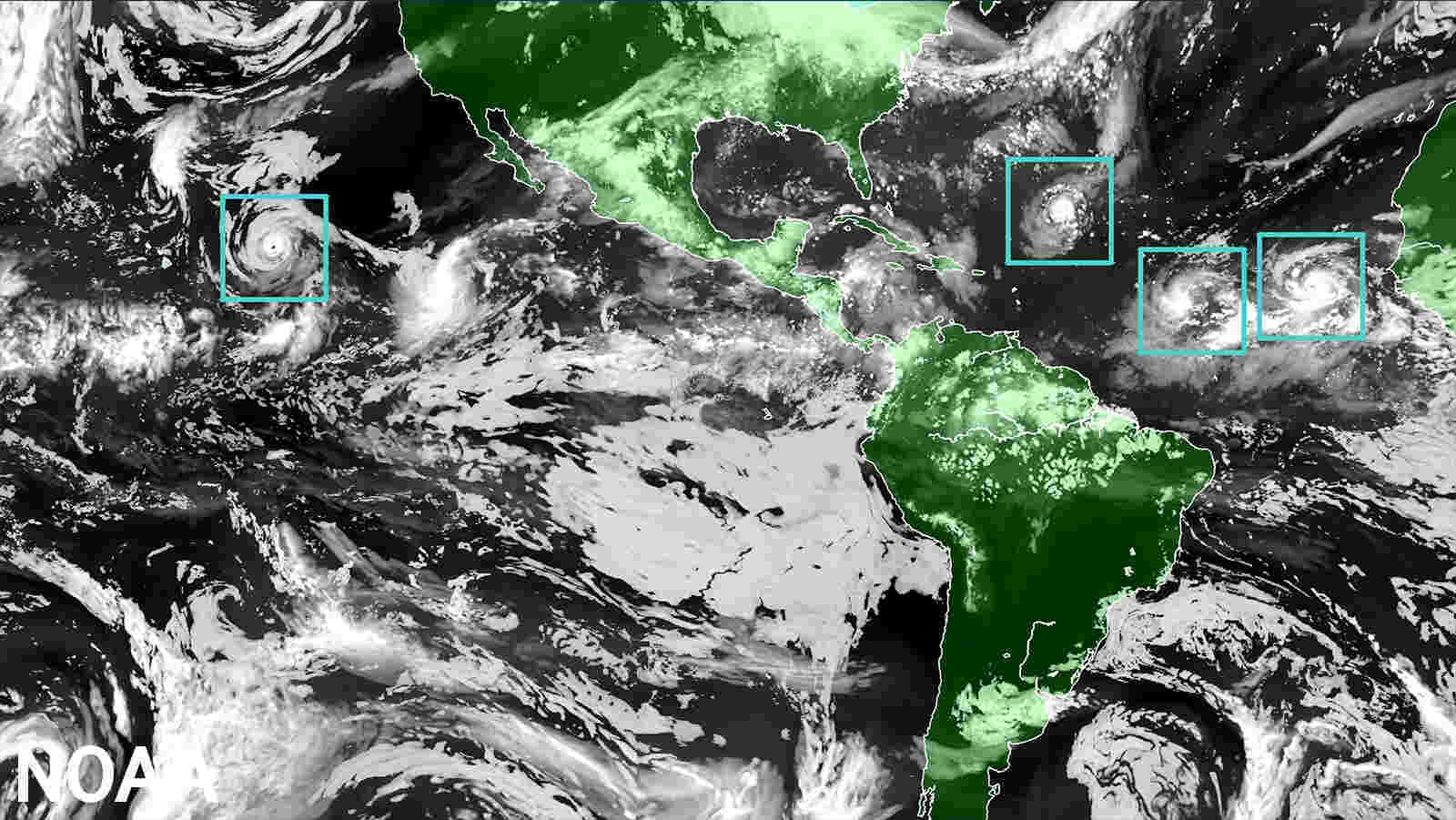

NOAA’s National Hurricane Center — a division of the National Weather Service — has a new model to help produce hurricane forecasts this season. The Hurricane Analysis and Forecast System (HAFS) was put into operation on June 27 and will run alongside existing models for the 2023 season before replacing them as NOAA’s premier hurricane forecasting model.

"The quick deployment of HAFS marks a milestone in NOAA's commitment to advancing our hurricane forecasting capabilities, and ensuring continued improvement of services to the American public," said NOAA Administrator Rick Spinrad, Ph.D. "Development, testing, and evaluations were jointly carried out between scientists at NOAA Research and the National Weather Service, marking a seamless transition from development to operations.”

Experimental runs of HAFS from 2019 to 2022 showed a 10-15% improvement in track predictions compared to NOAA’s existing hurricane models. HAFS is expected to continue increasing forecast accuracy, thereby reducing storm impacts on lives and property.

HAFS is as good as NOAA’s existing hurricane models when forecasting storm intensity — but is better at predicting rapid intensification. HAFS was the first model last year to accurately predict that Hurricane Ian would undergo secondary rapid intensification as the storm moved off the coast of Cuba and barreled toward southwest Florida.

Over the next four years, HAFS will undergo several major upgrades, ultimately leading to more accurate forecasts, warnings, and life-saving information. An objective of the NOAA Hurricane Forecast Improvement Program (HFIP) is, by 2027, to reduce all model forecast errors by nearly half compared to errors seen in 2017.

HAFS provides more accurate, higher-resolution forecast information over both land and ocean and comprises five major components: a high-resolution moving nest; high-resolution physics; multi-scale data assimilation that allows for vortex initialization and vortex cycling; 3-D ocean coupling; and improved assimilation techniques that allow for the assimilation of novel observations. The foundational component is the moving nest, which allows the model to zoom in with a resolution of 1.2 miles on areas of a hurricane that are key to improving wind intensity and rain forecasts.

“With the introduction of the HAFS forecast model into our suite of tropical forecasting tools, our forecasters are better equipped than ever to safeguard lives and property with enhanced accuracy and timely warnings,” said Ken Graham, director of NOAA’s National Weather Service. “HAFS is the result of strong collaborative efforts throughout the science community and marks significant progress in hurricane prediction.”

HAFS, the first regional coupled model to go into operations under the Unified Forecast System (UFS), was developed through community-based collaboration and the streamlining of the operational transition process. Because HAFS uses FV3, the same dynamical core as the U.S. Global Forecast System, it will have a unified starting point when initiated for hurricane prediction and will also integrate with ocean and wave models as underlying inputs. The current standalone regional hurricane models, HWRF and HMON, each have their own starting point for modeling the atmosphere. Leveraging FV3 in HAFS reduces overlapping efforts, making the NOAA modeling portfolio more consistent and efficient.

HAFS is also the first new major forecast model implementation using NOAA’s updated weather and climate supercomputers, which were installed last summer. HAFS would not be possible without the speed and power of these new supercomputers, called the Weather and Climate Operational Supercomputing System 2 (WCOSS2).

NOAA developed HAFS as a requirement of the Weather Research and Forecasting Innovation Act of 2017, which directed the agency to conduct ongoing research and development to improve hurricane prediction and warning under the Hurricane Forecast Improvement Program. Specifically, the Act called for NOAA to improve prediction capability for rapid intensification and storm track. HAFS development was also enabled by fiscal year 2018 and 2019 hurricane and disaster supplemental funding, and was further accelerated with support from the 2022 Disaster Relief Supplemental Appropriations Act.

HAFS was jointly created by NOAA's National Weather Service Environmental Modeling Center, Atlantic Oceanographic & Meteorological Laboratory, and NOAA's Cooperative Institute for Marine & Atmospheric Studies.
