The iron that rusts in water theoretically shouldn’t corrode in contact with an “inert” supercritical fluid of carbon dioxide. But it does.

The reason has eluded materials scientists until now, but a team at Rice University has a theory that could contribute to new strategies to protect the iron from the environment.

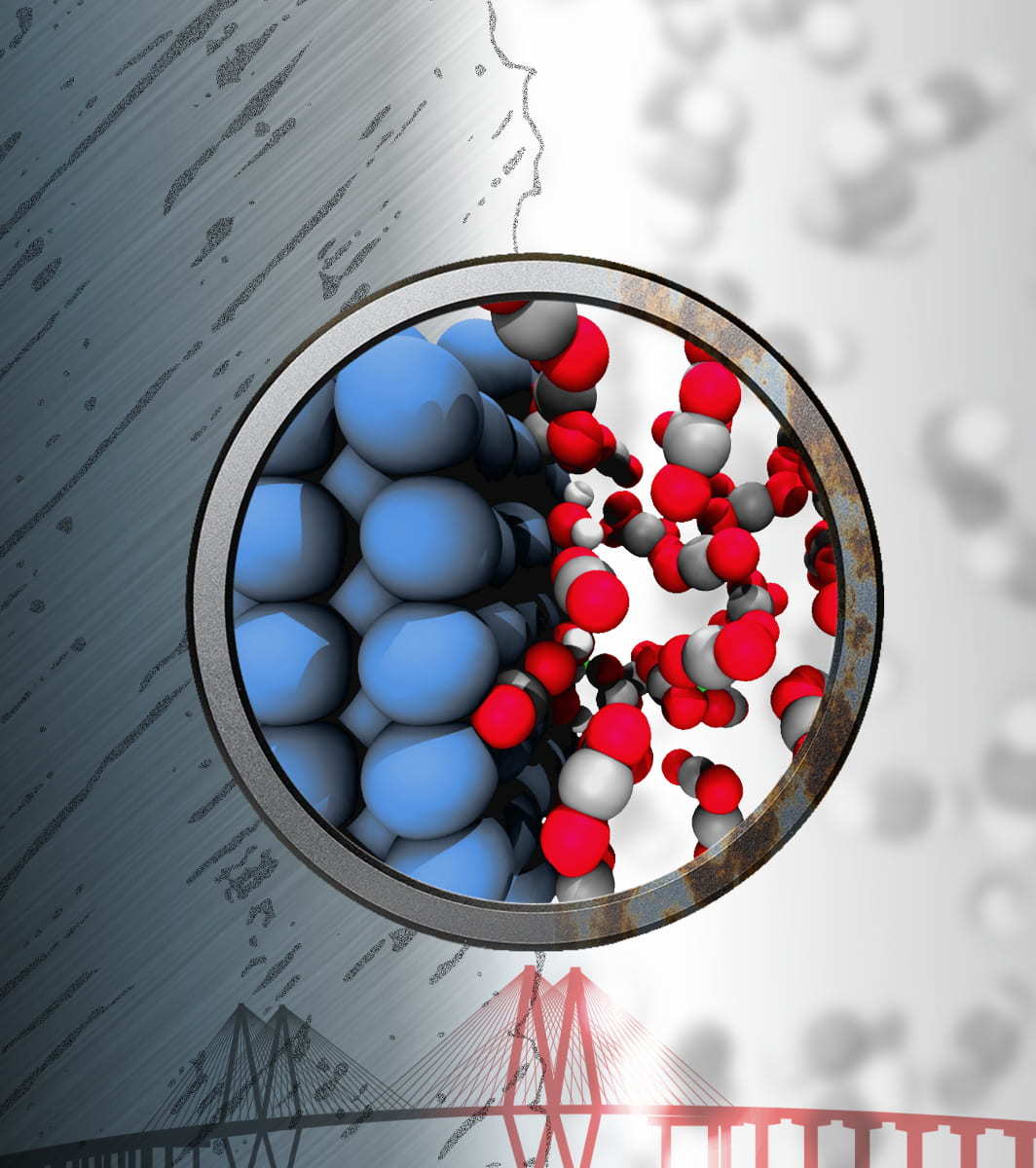

Materials theorist Boris Yakobson and his colleagues at Rice’s George R. Brown School of Engineering found through atom-level simulations that iron itself plays a role in its corrosion when exposed to supercritical CO2 (sCO2) and trace amounts of water by promoting the formation of reactive species in the fluid that come back to attack it.

In their research, published in the Cell Press journal Matter, they conclude that thin hydrophobic layers of 2D materials like graphene or hexagonal boron nitride could be employed as a barrier between iron atoms and the reactive elements of sCO2.

Rice graduate student Qin-Kun Li and research scientist Alex Kutana are co-lead authors of the paper. Rice assistant research professor Evgeni Penev is a co-author.

Supercritical fluids are materials at a temperature and pressure that keep them roughly between phases -- say, not all liquid, but not yet all gas. The properties of sCO2 make it an ideal working fluid because, according to the researchers, it is “essentially inert,” noncorrosive, and low-cost.

“Eliminating corrosion is a constant challenge, and it’s on a lot of people’s minds right now as the government prepares to invest heavily in infrastructure,” said Yakobson, the Karl F. Hasselmann Professor of Materials Science and NanoEngineering and a professor of chemistry. “Iron is a pillar of infrastructure from ancient times, but only now are we able to get an atomistic understanding of how it corrodes.”

The Rice lab’s simulations reveal the devil’s in the details. Previous studies have attributed corrosion to the presence of bulk water and other contaminants in the supercritical fluid, but that isn’t necessarily the case, Yakobson said.

“Water, as the primary impurity in sCO2, provides a hydrogen bond network to trigger interfacial reactions with CO2 and other impurities like nitrous oxide and to form corrosive acid detrimental to iron,” Li said.

The simulations also showed that the iron itself acts as a catalyst, lowering the reaction energy barriers at the interface between iron and sCO2, ultimately leading to the formation of a host of corrosive species: oxygen, hydroxide, carboxylic acid, and nitrous acid.

To the researchers, the study illustrates the power of theoretical modeling to solve complicated chemistry problems, in this case predicting reaction thermodynamics and estimating corrosion rates at the interface between iron and sCO2. They also showed that all bets are off if there’s more than a trace of water in the supercritical fluid, which accelerates corrosion.
