Suggestions that COVID-19 is on the wane have been strongly contradicted by the World Health Organization’s senior pandemics scientist, Dr. Maria Van Kerkhove.

And her criticism of virus complacency has fuelled calls for research and development of anti-viral drugs to stop all coronaviruses at source, in addition to ongoing vaccines and testing for COVID-19 variants.

Dr. Van Kerkhove, a highly regarded infectious disease epidemiologist and World Health Organization (WHO) Head of the Emerging Diseases and Zoonoses Unit, delivered her wake-up call in a BBC TV interview where she insisted that COVID-19 was still evolving and the world must evolve with it:

“It will not end with this latest wave (Omicron) and it will not be the last variant you will hear us (WHO) speaking about – unfortunately,” she told BBC interviewer Sophie Raworth.

Countries with high immunity and vaccination levels were starting to think the pandemic was over, she added, but despite 10 billion vaccine doses delivered globally, more than three billion people were yet to receive one dose, leaving the world highly susceptible to further COVID mutations – a global problem for which a global solution was needed.

She also challenged assumptions that the COVID Omicron variant was mild: “It is still putting people in hospital…and it will not be the last (variant). There is no guarantee that the next one will be less severe. We must keep the pressure up – we cannot give it a free ride.”

This drew a response from the World Nano Foundation (WNF), a not-for-profit organization that promotes many of the innovations – including nanomedicines, AI and super computational drug development platforms, testing, and vaccine development – that have played vital roles in fighting the COVID pandemic.

WNF Chairman Paul Stannard said: “We welcome Dr. Van Kerkhove’s timely intervention. Too many people think we can sit back with COVID now, forgetting lessons learned the hard way.

“Such as there’s always another variant just around the corner, and testing and vaccines are not the complete answer.

“Even if Omicron seems milder than its predecessors – though this may be due to vaccinations and growing herd immunity – who can say that a more fatal COVID mutation will not follow, or that an all-new virus is not waiting to strike?

“Many other pathogens have entered humans in the last 15 years, including SARS, Ebola, Zika virus and Indian Flu variants, so permanent pandemic protection investment is vital to restoring confidence in our way of life and the global markets.

“An even older lesson is Spanish Flu (1918-20): the death toll was relatively contained initially, lulling people already fatigued by WW1 devastation into thinking the worst was over.

“But that virus then mutated into its most deadly strain, killing 50 million people when Earth’s population numbered less than two billion. All of which suggests we must maintain or redouble our efforts against COVID-19 and other potential threats.

“We have already benefitted from greater healthcare investment and research due to the pandemic: experts say the first six months of the emergency delivered sector progress equivalent to the previous 10 years.

“This helped unusually rapid deployment of new and better testing and vaccines that have driven down infection, hospitalization, and deaths, but we hope that the WHO view will now foster a new and potentially more effective development against COVID and other threats – anti-viral drugs.

“Instead of attacking the virus like a vaccine, anti-viral drugs aim to stop it functioning in the human body. Merck and Pfizer say they have re-purposed existing drugs to do just that.

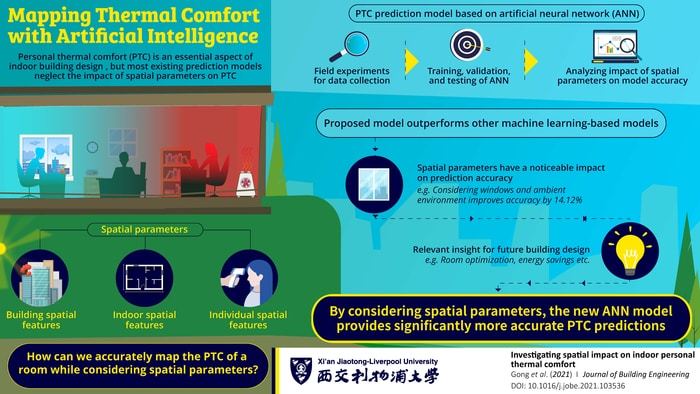

“But a better option is gathering momentum using nanomedicine, AI, and advanced super computational technology to develop all-new drugs more quickly and effectively, potentially delivering breakthroughs against many serious killers, including viruses, cancers, and heart disease.

“WNF believes these can disrupt the traditional pharmaceutical industry as Tesla has done in the auto industry, or SpaceX and Blue Origin have done in space.”

California-based Verseon has developed an AI and computational drug development platform and has six drug candidates, including an anti-viral drug to potentially block all coronaviruses and some flu variants, potentially transforming pandemic protection.

This could be on the market within 18 months after securing a final $60 million investment, a small amount compared to the $1 billion pharma industry norm for a single new drug (source: Biospace), and weighed against 5.6 million COVID deaths globally and an estimated $3 trillion in economic output (source: Statista) lost since the start of the pandemic.

Verseon Head of Discovery Biology Anirban Datta said: “Vaccines and the current anti-viral drugs are retrospective solutions that don’t treat newly emergent strains. We need a different strategy to avoid always being one step behind viral mutations.

“So, we switched target from the virus to the human host. If we stop SARS-CoV-2 (COVID-19) from entering our cells, which, unlike viruses, don’t mutate, then we have a long-term solution.

“Even better, the strategy should work against other coronaviruses and influenza strains that use the same mechanism as SARS-CoV-2 to infect cells – a key point, since it surely won’t be the last pandemic to affect humanity.”
