People with autism spectrum disorder can be classified into four distinct subtypes based on their brain activity and behavior, according to a study from Weill Cornell Medicine investigators in New York City.

The study used machine learning to analyze newly available neuroimaging data from 299 people with autism and 907 neurotypical people. The researchers found patterns of brain connections linked with behavioral traits in people with autism, such as verbal ability, social affect, and repetitive or stereotypic behaviors. They confirmed that the four autism subgroups could be replicated in a separate dataset and showed that differences in regional gene expression and protein-protein interactions explain the brain and behavioral differences.

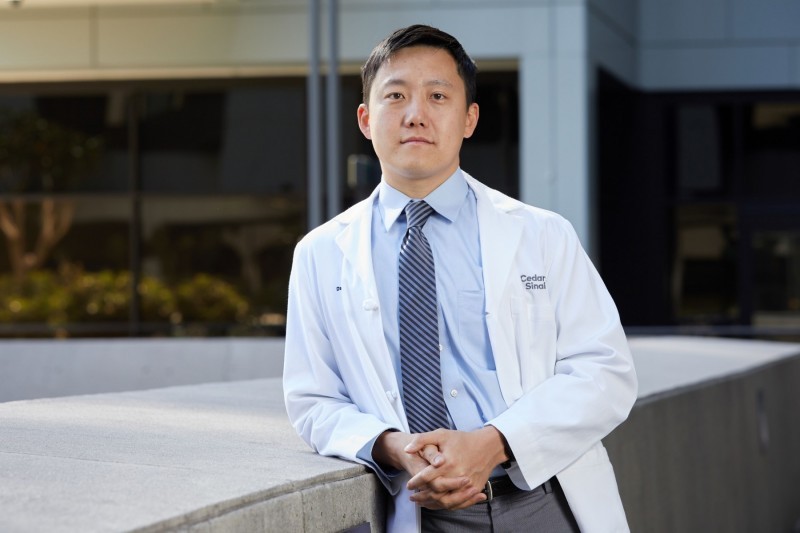

“Like many neuropsychiatric diagnoses, individuals with autism spectrum disorder experience many different types of difficulties with social interaction, communication, and repetitive behaviors. Scientists believe there are probably many different types of autism spectrum disorder that might require different treatments, but there is no consensus on how to define them,” said co-senior author Dr. Conor Liston, an associate professor of psychiatry and of neuroscience in the Feil Family Brain and Mind Research Institute at Weill Cornell Medicine. “Our work highlights a new approach to discovering subtypes of autism that might one day lead to new approaches for diagnosis and treatment.”

A previous study published by Dr. Liston and colleagues in Nature Medicine in 2017 used similar machine-learning methods to identify four biologically distinct subtypes of depression, and subsequent work has shown that those subgroups respond differently to various depression therapies.

“If you put people with depression in the right group, you can assign them the best therapy,” said lead author Dr. Amanda Buch, a postdoctoral associate of neuroscience in psychiatry at Weill Cornell Medicine.

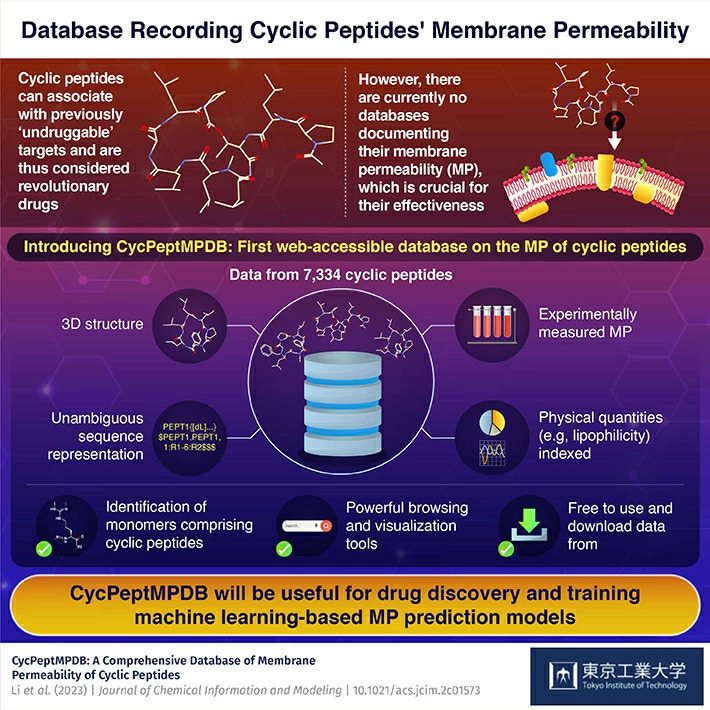

Building on that success, the team set out to determine whether similar subgroups exist among individuals with autism, and whether different gene pathways underlie them. Dr. Buch explained that autism is a highly heritable condition that is associated with hundreds of genes, has diverse presentations, and has limited therapeutic options. To investigate this, she pioneered new analyses for integrating neuroimaging data with gene expression data and proteomics, introducing them to the lab and enabling the team to develop and test hypotheses about how risk variants interact in the autism subgroups.

“One of the barriers to developing therapies for autism is that the diagnostic criteria are broad, and thus apply to a large and phenotypically diverse group of people with different underlying biological mechanisms,” Dr. Buch said. “To personalize therapies for individuals with autism, it will be important to understand and target this biological diversity. It is hard to identify the optimal therapy when everyone is treated as being the same when they are each unique.”

Until recently, collections of functional magnetic resonance imaging data from people with autism were not large enough to support large-scale machine learning studies, Dr. Buch noted. But a dataset created and shared by Dr. Adriana Di Martino, research director of the Autism Center at the Child Mind Institute, and by other colleagues across the country, provided the scale the study needed.

“New methods of machine learning that can deal with thousands of genes, brain activity differences, and multiple behavioral variations made the study possible,” said co-senior author Dr. Logan Grosenick, an assistant professor of neuroscience in psychiatry at Weill Cornell Medicine, who pioneered machine-learning techniques used for biological subtyping in the autism and depression studies.

Those advances allowed the team to identify four clinically distinct groups of people with autism. Two of the groups had above-average verbal intelligence. One of these had severe deficits in social communication but fewer repetitive behaviors, while the other had more repetitive behaviors and less social impairment. The connections between the parts of the brain that process visual information and those that help the brain identify the most salient incoming information were hyperactive in the subgroup with more social impairment. These same connections were weak in the group with more repetitive behaviors.

“It was interesting on a brain circuit level that there were similar brain networks implicated in both of these subtypes, but the connections in these same networks were atypical in opposite directions,” said Dr. Buch, who completed her doctorate at Weill Cornell Graduate School of Medical Sciences in Dr. Liston’s lab and is now working in Dr. Grosenick’s lab.

The other two groups had severe social impairments and repetitive behaviors but had verbal abilities at the opposite ends of the spectrum. Despite some behavioral similarities, the investigators discovered completely distinct brain connection patterns in these two subgroups.

To better understand what was causing the differences, the team analyzed the gene expression that explained the atypical brain connections present in each subgroup and found that many of the genes involved had previously been linked with autism. They also analyzed network interactions between proteins associated with the atypical brain connections and looked for proteins that might serve as hubs. Oxytocin, a protein previously linked with positive social interactions, was a hub protein in the subgroup of individuals with more social impairment but relatively limited repetitive behaviors. Studies of intranasal oxytocin as a therapy for people with autism have produced mixed results, Dr. Buch said. She said it would be interesting to test whether oxytocin therapy is more effective in this subgroup.

“You could have treatment that is working in a subgroup of people with autism, but that benefit washes out in the larger trial because you are not paying attention to subgroups,” Dr. Grosenick said.

The team confirmed their results on a second human dataset, finding the same four subgroups. As a final verification, Dr. Buch used a method she developed to conduct an unbiased text-mining analysis of the biomedical literature, which showed that other studies had independently connected the autism-linked genes with the same behavioral traits associated with the subgroups.

The team will next study these subgroups and potential subgroup-targeted treatments in mice. Collaborations with several other research teams that have large human datasets are also underway, and the team is working to refine its machine-learning techniques further.

“We are trying to make our machine learning more cluster-aware,” Dr. Grosenick said.

In the meantime, Dr. Buch said the team has received encouraging feedback from individuals with autism about the work. One neuroscientist with autism spoke to Dr. Buch after a presentation and said his diagnosis had been confusing because his autism was so different from that of others, but that her data helped explain his experience.

“Being diagnosed with a subtype of autism could have been helpful for him,” Dr. Buch said.