On 30 April 2023, all nominal operations of Aeolus, the first mission to observe Earth’s wind profiles on a global scale, will conclude in preparation for a series of end-of-life activities.

Although a recent upgrade to Aeolus’ original laser meant that in its last months it has been performing as well as ever, diminishing fuel combined with increasing solar activity means the mission must come to an end.

That ESA’s wind mission has made it this far is a great achievement: it has outlived its predicted lifetime of three years by over 18 months.

But it’s not over just yet.

Over the past year, scientists and industry specialists have been designing a thorough roadmap to bring the Aeolus mission to a close. After much consideration and planning, it was decided that the best course of action is to have the satellite carefully re-enter Earth’s atmosphere.

The finishing touches to the end-of-life schedule will be made over the coming weeks and a timeline will be announced in due course.

In the meantime, Aeolus will provide data as usual up to the end of operations on 30 April 2023. While no new operational data will be gathered after 30 April, the mission’s existing data will still be available to users.

"My gratitude goes to all our ESA and industry colleagues who have developed and operated this unique mission,” said Aeolus Mission Manager, Tommaso Parrinello.

“A special thanks goes to the scientific community, whose support has been outstanding and has contributed to one of the most successful missions ever flown by ESA.”

A trailblazing wind mission

Aeolus, ESA’s fifth Earth Explorer, was tasked with an extraordinarily challenging and pioneering feat: to measure global winds from space using a laser.

Its launch in 2018 was an achievement some thought might not be possible, especially after many years of grit and determination to make its experimental technology work. Plenty of headscratchers and setbacks were encountered along the way.

Once in orbit, Aeolus met further trials, being forced into switching to its backup laser less than a year after launch.

The struggles were worth it, as Europe’s wind mission triumphed.

Aeolus data are now used by major weather forecasting services worldwide, including the European Centre for Medium-Range Weather Forecasts (ECMWF), Météo-France, the UK Met Office, Germany’s Deutscher Wetterdienst (DWD), and India’s National Centre for Medium-Range Weather Forecasting (NCMRWF).

Its many successes, including economic benefits valued at over €3.5 billion, mean that an operational follow-on mission called Aeolus-2 will be launched within a decade.

Remarkable improvements in weather forecasts

Aeolus carries an instrument known as ALADIN, which is Europe’s most sophisticated Doppler wind lidar flown in space. A laser fires pulses of ultraviolet light toward Earth’s atmosphere, and a receiver detects the light that is scattered back from air molecules, water molecules, and aerosols such as dust.

Thanks to subtle changes in the properties of the light that is received, we can measure how quickly these particles travel away from Aeolus, which gives the speed of the wind.
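As a rough illustration of the principle described above, the sketch below converts a Doppler frequency shift in backscattered light into a speed along the laser beam, using the standard two-way Doppler relation for lidar (shift = 2 × speed / wavelength). It is not ALADIN's actual processing chain. The function names, the sign convention (positive means motion toward the instrument), and the 355 nm ultraviolet wavelength are assumptions made for this example and are not stated in the article.

```python
# Minimal sketch of the two-way Doppler relation used by wind lidars.
# Assumptions (not from the article): function names, sign convention,
# and a nominal ultraviolet wavelength of 355 nm.

UV_WAVELENGTH_M = 355e-9  # assumed laser wavelength in metres


def doppler_shift_hz(speed_m_s: float, wavelength_m: float = UV_WAVELENGTH_M) -> float:
    """Frequency shift of backscattered light for a scatterer moving along the beam.

    The factor of 2 arises because the light is shifted once on the way to the
    moving particle and again on the way back to the receiver.
    """
    return 2.0 * speed_m_s / wavelength_m


def line_of_sight_speed_m_s(shift_hz: float, wavelength_m: float = UV_WAVELENGTH_M) -> float:
    """Invert the relation: speed along the beam from a measured frequency shift."""
    return shift_hz * wavelength_m / 2.0


if __name__ == "__main__":
    shift = doppler_shift_hz(10.0)  # a 10 m/s wind component along the beam
    print(f"Doppler shift: {shift / 1e6:.1f} MHz")
    print(f"Recovered speed: {line_of_sight_speed_m_s(shift):.2f} m/s")
```

Under these assumptions, a wind component of 10 m/s along the beam corresponds to a shift of only a few tens of megahertz, tiny compared with the frequency of the light itself. That gives a sense of why the article calls the changes "subtle".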

Over its four-and-a-half-year lifetime, orbiting Earth 16 times a day and covering the entire globe once a week, ALADIN has beamed down over seven billion laser pulses.

Supported by the ground segment team, well over 99.5% of the data collected reached users such as weather forecasters within three hours.

The impacts have been remarkable.

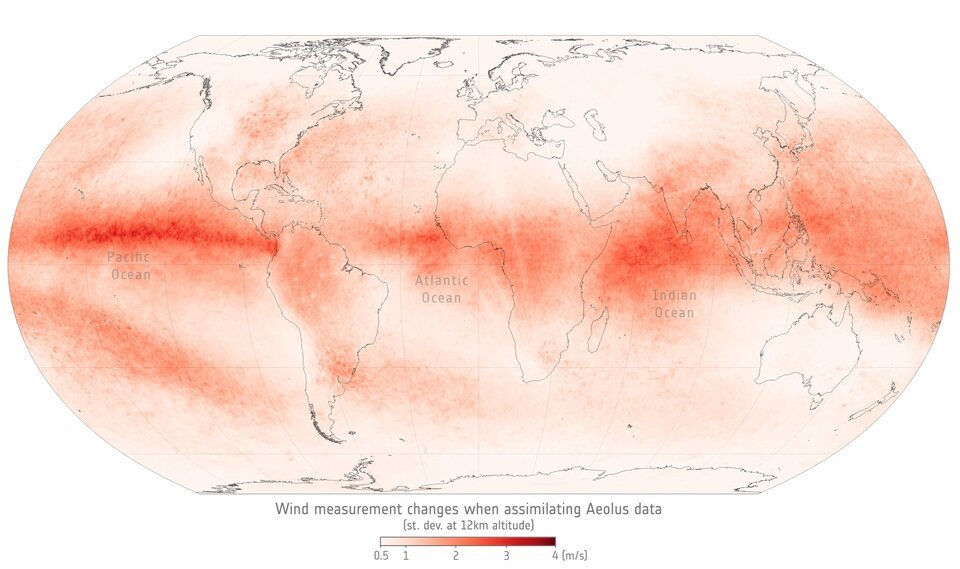

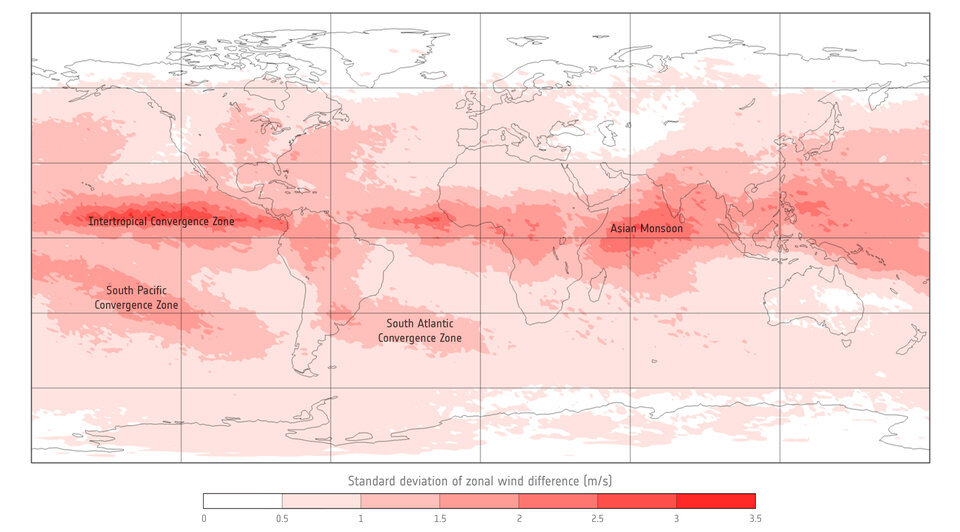

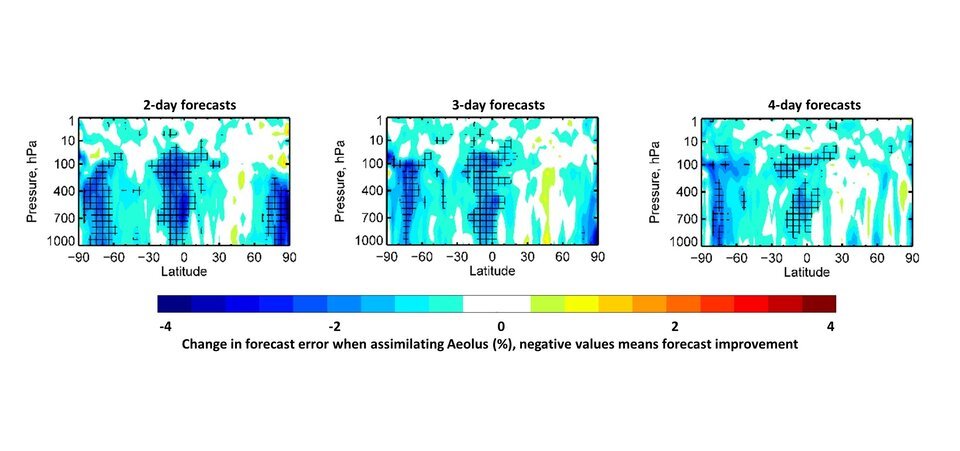

Since ECMWF started assimilating Aeolus data in 2020, the satellite has become one of the highest impact-per-observation instruments in existence.

Much of this is down to Aeolus’ capacity to measure winds where data are scarce. When planes were grounded during the lockdowns imposed due to the COVID-19 pandemic, Aeolus was able to contribute missing data to plug the gap in weather forecasts.

Researchers recently concluded that Aeolus data could also help to improve forecasting of hurricanes in regions of the planet where reconnaissance flights are sparse, particularly over the tropics.

A universal collaboration

The Aeolus mission has been underpinned by a tightly knit, Europe-wide collaboration of over forty experts who make up the Aeolus Data, Innovation, and Science Cluster (DISC).

Years of calibration and validation activities by the DISC, including tens of thousands of kilometers flown in field campaigns from Greenland to Cape Verde, have honed and improved the instrument and the quality of its data.

In recent years, an international collaboration known as the Joint Aeolus Tropical Airborne Campaign (JATAC) has expanded the remit of Aeolus, homing in on the use of Aeolus data to measure the role of aerosols in tropical weather systems.

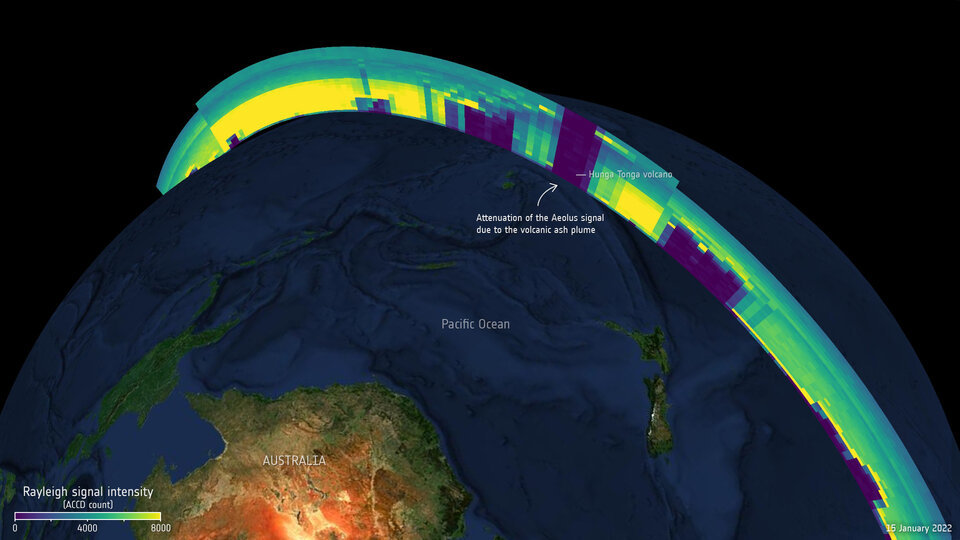

Where aerosols are concerned, Aeolus has managed to provide unique insight into volcanic plumes. The satellite was able to track the huge Hunga Tonga eruption of January 2022 and observed a completely new atmospheric phenomenon following the eruption of Raikoke in 2019.

Aeolus wind data also improve supercomputer modeling of plumes as they spread through Earth’s atmosphere, which benefits air traffic safety.

Other innovative projects have used Aeolus data to understand a range of phenomena from Saharan dust to ocean biochemistry and sea surface winds.

The results will inform future Earth Explorer missions such as EarthCARE, a collaborative mission between ESA and JAXA that will carry a similar lidar instrument to measure atmospheric aerosols and clouds.

"The Aeolus mission has been a triumph of European innovation, collaboration, and technical excellence," says ESA’s Director of Earth Observation Programmes, Simonetta Cheli.

"Aeolus is another example of how ESA’s Earth Explorers perform beyond expectations, and a shining light for our Future EO program. Its impacts will live long beyond its lifetime in space, paving the way for future operational missions such as Aeolus-2."
