A research team from Jena (Germany) and Turin (Italy) has reconstructed the origin of an unusual gravitational wave signal. The signal GW190521 may result from the merger of two enormous black holes that captured each other in their gravitational field and then collided while spinning around each other in a rapid, eccentric motion.

When black holes collide in the universe, the clash shakes up space and time: the amount of energy released during the merger is so great that it causes space-time to oscillate, much like waves on the surface of water. These gravitational waves spread out through the entire universe and can still be measured thousands of light years away, as was the case on May 21, 2019, when the two gravitational wave observatories LIGO (USA) and Virgo (Italy) captured such a signal. Named GW190521 after the date of its discovery, the gravitational wave event has since provoked discussion among experts because it differs markedly from previously measured signals.

The signal had initially been interpreted to mean that the collision involved two black holes moving in near-circular orbits around each other. “Such binary systems can be created by a number of astrophysical processes,” explains Prof. Sebastiano Bernuzzi, a theoretical physicist from the University of Jena, Germany. Most of the black holes discovered by LIGO and Virgo, for example, are of stellar origin. “That means they are the remnants of massive stars in binary star systems,” adds Bernuzzi, who led the current study. Such black holes orbit each other in quasi-circular orbits, just as the original stars did previously.

One black hole captures a second

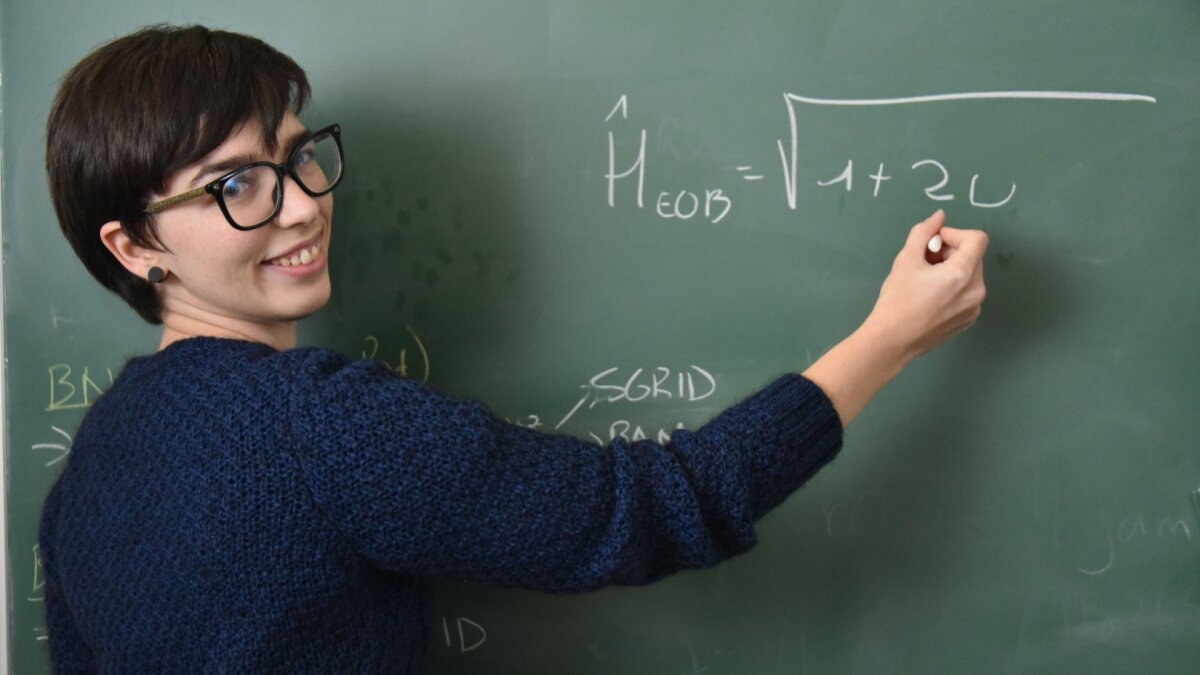

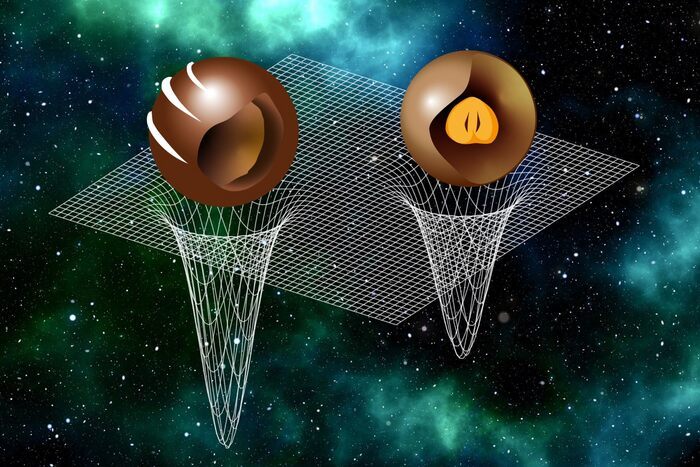

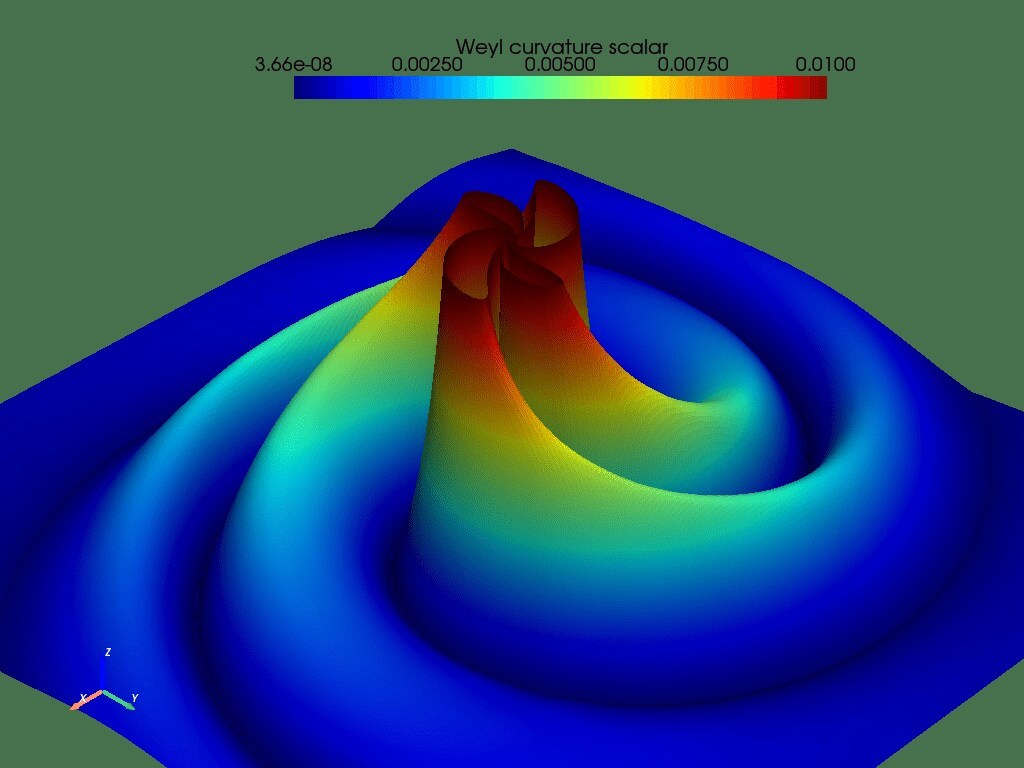

“GW190521 behaves significantly differently, however,” explains Rossella Gamba. The lead author of the publication is doing her doctorate in Jena Research Training Group 2522 and is part of Bernuzzi’s team. “Its morphology and explosion-like structure are very different from previous observations.” Gamba and her colleagues therefore set out to find an alternative explanation for the unusual gravitational wave signal. Using a combination of state-of-the-art analytical methods and numerical simulations on supercomputers, they calculated different models of the cosmic collision. They came to the conclusion that it must have occurred on a strongly eccentric path instead of a quasi-circular one. In this scenario, a black hole initially moves freely in an environment that is relatively densely filled with matter and, as soon as it gets close to another black hole, it can be “captured” by the other’s gravitational field. This also leads to the formation of a binary system, but here the two black holes do not orbit in a circle; instead, they move eccentrically, in tumbling motions around each other.

“Such a scenario explains the observations much better than any other hypothesis presented so far. The probability is 1:4300,” says Matteo Breschi, doctoral student and co-author of the study, who developed the infrastructure for the analysis. And postdoctoral researcher Dr. Gregorio Carullo adds: “Even though we don’t currently know exactly how common such dynamic movements by black holes are, we don’t expect them to be a frequent occurrence.” This makes the current results all the more exciting, he adds. Nevertheless, more research is needed to clarify beyond doubt the processes that created GW190521.

Teamwork in the Research Training Group

For the current project, the teams in Turin and Jena (as part of the German Research Foundation-funded Jena Research Training Group 2522 “Dynamics and Criticality in Quantum and Gravitational Systems”) developed a general relativistic framework for the eccentric merger of black holes and verified the analytical predictions using simulations of Einstein’s equations. For the first time, models of dynamic encounters were used in the analysis of gravitational wave observation data.
