Researchers at Rochester and Erlangen have taken a decisive step toward ultrafast computers.

A long-standing quest for science and technology has been to develop electronics and information processing that operate near the fastest timescales allowed by the laws of nature.

A promising way to achieve this goal is to use laser light to guide the motion of electrons in matter, and then to use this control to build electronic circuit elements. This concept is known as lightwave electronics.

Remarkably, lasers already allow us to generate bursts of electricity on femtosecond timescales (a femtosecond is a millionth of a billionth of a second). Yet our ability to process information on these ultrafast timescales has remained elusive.

Now, researchers at the University of Rochester and the Friedrich-Alexander-Universität Erlangen-Nürnberg (FAU) have taken a decisive step in this direction by demonstrating a logic gate, the building block of computation and information processing, that operates on femtosecond timescales. They accomplished the feat by harnessing and independently controlling, for the first time, the real and virtual charge carriers that make up these ultrafast bursts of electricity.

The researchers’ advances open the door to information processing at the petahertz limit, where one quadrillion computational operations can be performed per second. Because 1 petahertz is 1 million gigahertz, that is almost a million times faster than today’s computers, which operate at gigahertz clock rates.
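The speed comparison above comes down to SI-prefix arithmetic. The short sketch below checks it, assuming a 1 GHz reference clock for the "million times" figure. The exact factor against any particular processor depends on that processor's clock rate. The constant names are illustrative only.

```python
import math

# Standard SI prefixes used in the comparison (illustrative constant names).
GIGAHERTZ = 1e9      # hertz
PETAHERTZ = 1e15     # hertz
FEMTOSECOND = 1e-15  # seconds

# How many gigahertz fit into one petahertz.
phz_over_ghz = PETAHERTZ / GIGAHERTZ

# Duration of one cycle at 1 PHz, compared with "a millionth of a billionth of a second".
period_at_1_phz = 1 / PETAHERTZ
millionth_of_a_billionth = 1e-6 * 1e-9

print(f"1 PHz = {phz_over_ghz:,.0f} GHz")                               # 1 PHz = 1,000,000 GHz
print(f"One cycle at 1 PHz lasts {period_at_1_phz / FEMTOSECOND:.0f} fs")  # ... lasts 1 fs
print(math.isclose(period_at_1_phz, millionth_of_a_billionth))          # True
```

A single cycle at 1 PHz therefore lasts about one femtosecond. This is why femtosecond laser pulses and petahertz operation go hand in hand.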

“This is a great example of how fundamental science can lead to new technologies,” says Ignacio Franco, an associate professor of chemistry and physics at Rochester who, in collaboration with doctoral student Antonio José Garzón-Ramírez ’21 (Ph.D.), performed the theoretical studies that led to this discovery.

Lasers generate ultrafast bursts of electricity

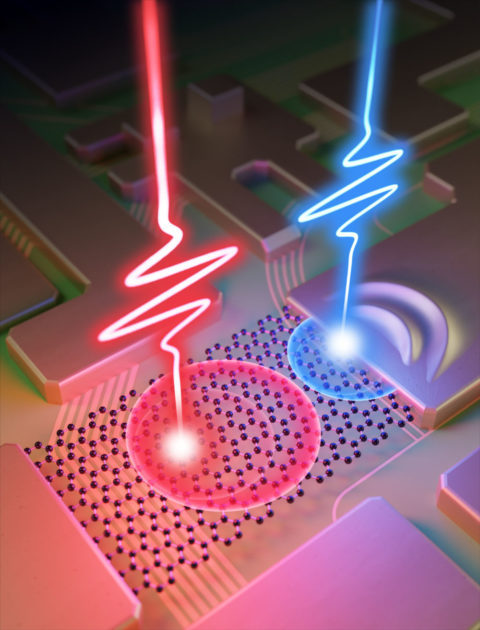

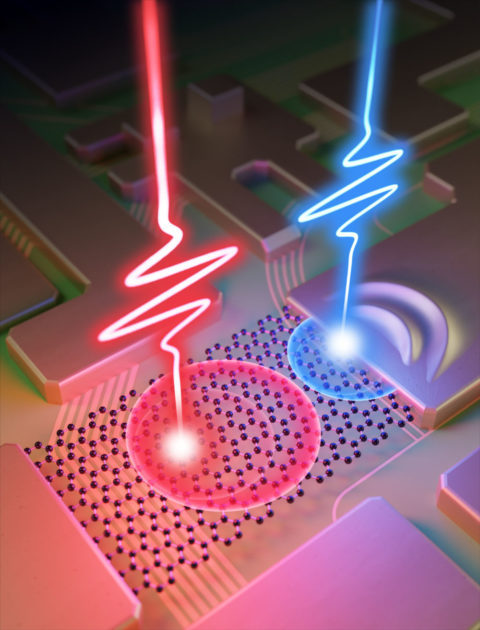

In recent years, scientists have learned how to use laser pulses lasting a few femtoseconds to generate ultrafast bursts of electrical current. One way to do this is to illuminate tiny graphene-based wires connecting two gold electrodes. The ultrashort laser pulse sets the electrons in the graphene in motion, or “excites” them. Importantly, it also sends them in a particular direction, which generates a net electrical current.

Laser pulses can produce electricity far faster than any traditional method—and do so in the absence of applied voltage. Further, the direction and magnitude of the current can be controlled simply by varying the shape of the laser pulse (that is, by changing its phase).

The breakthrough: Harnessing real and virtual charge carriers

The research groups of Franco and FAU’s Peter Hommelhoff have been working for several years to turn light waves into ultrafast current pulses.

In trying to reconcile the experimental measurements at Erlangen with computational simulations at Rochester, the team had a realization: In gold-graphene-gold junctions, it is possible to generate two flavors—“real” and “virtual”—of the particles carrying the charges that compose these bursts of electricity.

- “Real” charge carriers are electrons excited by the light that remain in directional motion even after the laser pulse is turned off.

- “Virtual” charge carriers are electrons that are only set in net directional motion while the laser pulse is on. As such, they are elusive species that only live transiently during illumination.

Because the graphene is connected to gold, both real and virtual charge carriers are absorbed by the metal to produce a net current.
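The difference between the two kinds of carriers can be pictured with a purely qualitative sketch. It assumes a Gaussian pulse envelope with a hypothetical 5 fs width. The virtual-carrier current simply follows the envelope, so it vanishes once the pulse is over. The real-carrier current builds up during the pulse and then stays on. The function names and shapes are illustrative assumptions rather than the model used by the Rochester and Erlangen teams. The sketch also ignores the optical field's oscillations and the eventual absorption of the carriers by the gold.

```python
import math

PULSE_WIDTH_FS = 5.0  # hypothetical pulse width, in femtoseconds


def envelope(t_fs: float) -> float:
    """Hypothetical Gaussian pulse envelope centered at t = 0."""
    return math.exp(-(t_fs / PULSE_WIDTH_FS) ** 2)


def virtual_current(t_fs: float) -> float:
    """Toy virtual-carrier current: directional only while the pulse is on,
    so it tracks the envelope and dies out after the pulse."""
    return envelope(t_fs)


def real_current(t_fs: float) -> float:
    """Toy real-carrier current: ramps up during the pulse (error-function
    step) and then persists after the pulse has ended."""
    return 0.5 * (1.0 + math.erf(t_fs / PULSE_WIDTH_FS))


for t in (-20.0, 0.0, 20.0, 50.0):
    print(f"t = {t:+5.0f} fs  virtual = {virtual_current(t):.3f}  real = {real_current(t):.3f}")
```

Long after the pulse, the virtual-carrier current in this cartoon has died out, while the real-carrier current remains.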

Strikingly, the team discovered that by changing the shape of the laser pulse, they could generate currents in which only the real or only the virtual charge carriers play a role. In other words, they not only generated two flavors of current but also learned how to control them independently. This finding greatly expands the design options available in lightwave electronics.

Logic gates through lasers

Using this augmented control landscape, the team was able to experimentally demonstrate, for the first time, logic gates that operate on a femtosecond timescale.

Logic gates are the basic building blocks of computation. They control how incoming information, which takes the form of 0s and 1s (known as bits), is processed. Two-input gates, like the one demonstrated here, take two input signals and yield a single logic output.

In the researchers’ experiment, the input signals are the shape, or phase, of two synchronized laser pulses. Each pulse is chosen to generate a burst of only real or only virtual charge carriers. Depending on the laser phases used, these two contributions to the current either add up or cancel out. The net electrical signal can then be assigned the logical value 0 or 1, yielding an ultrafast logic gate.
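The add-or-cancel principle can be illustrated with a toy model. The article does not specify the phase encoding, the readout threshold, or which gates were built, so everything below is a hypothetical assumption: the names `ADD`, `OPPOSE`, `contribution` and `gate`, the cos(phase) dependence, and the thresholds. In the sketch, pulse A stands for the real-carrier contribution and pulse B for the virtual-carrier contribution. Each pulse encodes one input bit in its phase. The output is read as 1 when the magnitude of the net current exceeds a threshold.

```python
import math

# Hypothetical phase encodings for one input bit. cos(pi/2) is ~0, so bit 0
# contributes (almost) no net current in either encoding.
ADD = {0: math.pi / 2, 1: 0.0}         # bit 1 drives current "forward"
OPPOSE = {0: math.pi / 2, 1: math.pi}  # bit 1 drives current "backward"


def contribution(phase: float, amplitude: float = 1.0) -> float:
    """Toy assumption: a pulse's net current varies as amplitude * cos(phase)."""
    return amplitude * math.cos(phase)


def gate(a: int, b: int, map_a: dict, map_b: dict, threshold: float) -> int:
    """Pulse A (real carriers) encodes bit a and pulse B (virtual carriers)
    encodes bit b. Their currents add or cancel; output 1 if |net| > threshold."""
    net = contribution(map_a[a]) + contribution(map_b[b])
    return int(abs(net) > threshold)


for a in (0, 1):
    for b in (0, 1):
        print(a, b,
              "OR:", gate(a, b, ADD, ADD, 0.5),
              "AND:", gate(a, b, ADD, ADD, 1.5),
              "XOR:", gate(a, b, ADD, OPPOSE, 0.5))
```

When both contributions point the same way, they add up, and the threshold alone selects OR-like or AND-like behavior. When they point in opposite directions, two 1 inputs cancel, giving XOR-like behavior. These truth tables describe the toy model only. They are not the experiment's reported results.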

“It will probably be a very long time before this technique can be used in a computer chip, but at least we now know that lightwave electronics is practically possible,” says Tobias Boolakee, who led the experimental efforts as a Ph.D. student at FAU.

“Our results pave the way toward ultrafast electronics and information processing,” says Garzón-Ramírez ’21 (Ph.D.), now a postdoctoral researcher at McGill University.

“What is amazing about this logic gate,” Franco says, “is that the operations are performed not in gigahertz, like in regular computers, but in petahertz, which are one million times faster. This is because of the short laser pulses used that occur in a millionth of a billionth of a second.”

From fundamentals to applications

This new, potentially transformative technology arose from fundamental studies of how charge can be driven in nanoscale systems with lasers.

“Through fundamental theory and its connection with the experiments, we clarified the role of virtual and real charge carriers in laser-induced currents, which opened the way to creating ultrafast logic gates,” says Franco.

The study represents more than 15 years of research by Franco. In 2007, as a Ph.D. student at the University of Toronto, he devised a method to generate ultrafast electrical currents in molecular wires exposed to femtosecond laser pulses. This initial proposal was realized experimentally in 2013, and the Franco group explained the detailed mechanism behind those experiments in a 2018 study. Since then, there has been what Franco calls “explosive” experimental and theoretical growth in this area.

“This is an area where theory and experiments challenge each other and, in doing so, unveil new fundamental discoveries and promising technologies,” he says.
