EpiMoRPH is envisioned as a collaborative online “hub” that would make epidemic forecasting vastly more transparent and reliable

Mathematical modeling—which combines math, statistics, computing, and data—is a critical tool for public health professionals, who use it to study how diseases spread, predict the future course of outbreaks, and evaluate strategies for controlling epidemics.

As the COVID-19 pandemic drove public health decision-making nationwide, a wide range of disease models proliferated. Across the country, city, county, and state officials worked with academic modeling teams to develop custom models to predict what would happen in their jurisdictions. Municipalities that did not have the resources to develop models specific to their locations were forced to extrapolate data from other models and make decisions based on less-than-ideal information. Since there was no cyberinfrastructure for executing these models in a standardized way, the confusion caused by the cacophony of inconsistent models very likely eroded public trust in modeling as a powerful tool.

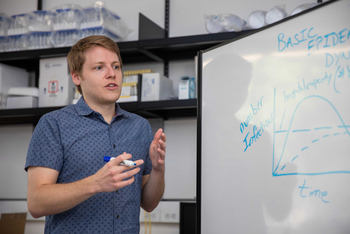

Assistant professor Joe Mihaljevic of Northern Arizona University’s School of Informatics, Computing, and Cyber Systems (SICCS) has been working with public health partners across the state and the country to share computer models mapping the spread of the coronavirus. Mihaljevic, a disease ecologist who applies epidemiological modeling techniques to wildlife and, more recently, to human diseases, was awarded more than $3.5 million by the National Institutes of Health to take modeling to the next level with EpiMoRPH (Epidemiological Modeling Resources for Public Health), which will substantially automate and expedite the development of epidemiological models.

“Throughout the pandemic, we realized we needed models that were at spatial scales relevant to the needs of specific public health partners,” Mihaljevic said. “Across the country, smaller municipalities, like cities, were often forced to base their decisions on models that were developed at larger spatial scales, like county scales or even statewide scales, when what they really needed was a customized model for their location. As we thought about the complex challenges we faced and the things we learned modeling the coronavirus, we posed this question: if a new epidemic or pandemic were to emerge, could we envision a system that would make it much easier for modelers to get up and running and to collaborate across groups? And could we use this to develop locally customized models that are better for decision-making?”

“As we developed the proposal for EpiMoRPH, we tried to define a manageable piece of that answer that we could accomplish in a five-year timeframe, to develop a good proof-of-concept modeling system for what we envision as the ‘next generation of epidemiological modeling’ that increases automation, promotes sharing and collaboration, accelerates discovery and rapidly advances our understanding of epidemics,” he said.

The project will use two viral diseases as case studies, COVID-19 and St. Louis encephalitis, caused by St. Louis encephalitis virus (SLEV), but EpiMoRPH is designed to work with any transmissible pathogen affecting humans, animals, or even plants.

“EpiMoRPH will provide a framework for characterizing meta-population disease models,” Mihaljevic said, “supporting rapid model development and uniform evaluation of models against data benchmarks. Beyond that, however, EpiMoRPH will provide an accessible interface for public health professionals to identify models relevant to their locale and to then use these models to generate municipality-specific forecasts.”

Multi-institutional collaboration to include Public Health Advisory Council

Mihaljevic’s co-investigators on the project are SICCS professor Eck Doerry, who will lead software development and cloud-based computing; SICCS associate professor Crystal Hepp, also with the Translational Genomics Research Institute (TGen), who will lead the procurement and management of surveillance data on viral cases; and Samantha Sabo, associate professor at NAU’s Center for Health Equity Research, who will assist with mobilizing and liaising with public health partners and lead formal assessment efforts.

NAU investigators will work with researchers from several other institutions, including Esma Gel from the University of Nebraska, who will assist with optimization theory and algorithm development; Sanjay Mehrotra from Northwestern University, who will lead the overall work on optimization theory development; and Timothy Lant from Arizona State University, who will assist with mobilizing and coordinating a Public Health Advisory Council.

The team will form a Public Health Advisory Council (PHAC) consisting of 15 local, regional and national stakeholders in public health and epidemiological modeling who will provide critical input and evaluation of the system as it is being developed. Collaborators from the Arizona Department of Health Services, with whom Mihaljevic and his team have worked extensively during the COVID-19 pandemic, will be part of this effort.

“The PHAC will help us better understand the logistical constraints and drive the development of the user interface so that it reflects the level of detail required by the intended users,” Mihaljevic said. “We will work closely with the advisory council to evaluate and refine our technologies, ensuring that our innovations meet the evolving needs of public health partners, while also appealing to the community of epidemiological modelers.”

In addition, many graduate and undergraduate students in informatics and computer science will help develop web-based cyberinfrastructure, code automation scripts, and write technical documentation. Two undergraduate researchers in public health will assist the team’s efforts to conduct formal evaluations of the technology and develop outreach methods with the PHAC.

Could EpiMoRPH help make forecasting epidemics as reliable as forecasting the weather?

“Once EpiMoRPH is built, a typical user could be someone who represents public health in Flagstaff, for instance. During the pandemic, this user might have wanted to understand what they should expect with COVID-19 in terms of hospitalizations in the next 30 days. Because our model at that time was at the scale of Coconino County, we could tell them what was happening at the county level, but not specifically for Flagstaff,” Mihaljevic said.

“And so, once EpiMoRPH is in place, if a model hasn’t been built for Flagstaff, a public health official could enter some characteristics of this particular location, such as population density and geography, and immediately see which models are currently most accurate. The EpiMoRPH system would then use those models to develop a customized forecast for Flagstaff.

“In the ideal scenario, the modelers in the community could contribute models and public health professionals could contribute data, too. Our system would pair the models and the data and run them against each other and try to figure out which models are best for specific locations.

“Eventually, as models become more and more accurate, forecasting outbreaks could become as routine, and as reliable, as forecasting the weather,” Mihaljevic said.

Revolutionizing how modeling is done

“This is a whole new way of thinking about developing models on a mass scale,” co-investigator Doerry said, “so that next time we have a pandemic, we are ready and can produce coherent, intelligible, and consistent models from the very start.

“Our ultimate aim is to revolutionize how modeling is done by defining a uniform conceptual standard with which all new and existing models can be characterized. This will allow for massive automation of model validation and parameter refinement and will support automatically testing models across thousands of different locales to discover which model is best for any given set of local conditions. Finally, we will add an infinitely scalable cloud computing infrastructure that can bring massive computing power to bear on all this heavy lifting. EpiMoRPH is so powerful precisely because it explores what you could achieve if you took cutting-edge infectious pathogen modeling and combined it with the cutting edge in cloud-based big data computation.”

EpiMoRPH to contribute to the national modeling community

With an increased emphasis on disease modeling, the EpiMoRPH platform could potentially be adopted as a national hub. Academic labs and national organizations across the country are racing to make epidemic modeling more accessible, more useful, and more accurate. For instance, the Centers for Disease Control and Prevention (CDC) recently launched its Center for Forecasting and Outbreak Analytics (CFA), which will enhance the nation’s ability to use data, models, and analytics to enable timely, effective decision-making in response to public health threats for CDC and its public health partners. Mihaljevic hopes that EpiMoRPH could make a strong contribution to national efforts toward standardizing and automating epidemic modeling, to create reliable forecasts for local decision-makers.
