Yale researchers build simulations of particles that more accurately model their collective behavior

If you take a bucket of water balloons and jostle one of them, the neighboring balloons will respond as well. This is a scaled-up example of how collections of cells and other deformable particle packings respond to forces. Modeling this phenomenon with supercomputer simulations can shed light on questions about how cancer cells invade healthy tissue or how leaves and flowers grow. But the behavior of cell aggregates is extremely complex, and fully capturing their structure and dynamics has proved tricky.

A team of researchers in the lab of Corey O’Hern, professor of mechanical engineering & materials science, physics, and applied physics, has developed novel supercomputer simulations of deformable particles that more accurately model their collective behavior. The study was led by John Treado, a Ph.D. student, and postdoctoral researcher Dong Wang, both in the O’Hern lab. It was recently published in Physical Review Materials.

Cells, bubbles, droplets, and other small particles that make up soft solids (which include anything from mayonnaise and shaving cream to cells and tissues) are all highly deformable. They vary significantly in how they change shape and how they respond to forces.

“There is a strong connection between the response of the collection of particles to applied forces, particle shape, and deformability,” Treado said. “Particle deformability determines how they’re going to move because they’re compressed tightly with many neighbors who are squishing them on all sides.”

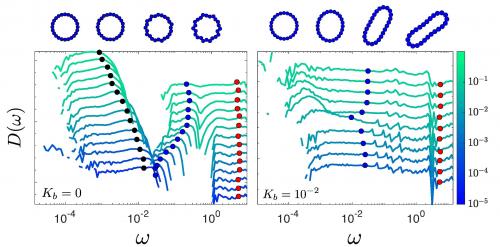

Conventional computer models typically represent soft particles as spheres. When the spheres press against each other, the models represent the spheres’ deformations by having them overlap. This approach works to a certain extent, but crucial information about the particle shapes and interactions is lost or misrepresented.
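For comparison, the overlap approach can be sketched in a few lines of Python. A common choice, assumed here because the article does not say which potential any particular model uses, is a harmonic repulsion that switches on only when two disks overlap. The disks keep their round shape, and "deformation" is stored purely as overlap. The function name `overlap_energy` and the parameter `eps` are hypothetical.

```python
import math

def overlap_energy(p1, p2, r1, r2, eps=1.0):
    """Harmonic soft-disk repulsion: U = eps/2 * (1 - d/sigma)^2 when d < sigma, else 0."""
    sigma = r1 + r2            # contact distance
    d = math.dist(p1, p2)      # center-to-center distance
    if d >= sigma:
        return 0.0             # not touching: no interaction
    return 0.5 * eps * (1.0 - d / sigma) ** 2

# Two unit disks whose centers are 1.8 apart overlap by 0.2.
print(overlap_energy((0.0, 0.0), (1.8, 0.0), 1.0, 1.0))
```

The disks themselves never change shape. Only the amount of overlap, and therefore the energy, changes, which is why information about real particle shapes is lost.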

The O’Hern team, though, developed a supercomputer model that can tune the particles from being floppy, with the ability to easily change shape, to being completely rigid. This model treats each particle as a ring of connected small spheres. In the simulation, forces are applied to the spherical beads, and the model tracks how the connected beads change positions and orientations.
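To make the ring-of-beads idea concrete, here is a minimal Python sketch of a single two-dimensional deformable particle. It is not the O'Hern lab's code. The energy used here (a spring on every bond between neighboring beads plus a penalty for changing the enclosed area) and the names `ka`, `kl`, `a0`, `l0`, and `relax` are illustrative assumptions. In this sketch, raising the stiffnesses `ka` and `kl` makes the ring behave more rigidly and lowering them makes it floppier. Interactions between beads on different particles are left out.

```python
import math

# Minimal 2D "ring of beads" particle (illustrative assumption, not the
# published model): bonds between neighboring beads act as springs with rest
# length l0, and an area term penalizes changes of the enclosed area from a0.

def regular_ring(n, radius):
    """Place n beads evenly on a circle, counterclockwise."""
    return [(radius * math.cos(2 * math.pi * k / n),
             radius * math.sin(2 * math.pi * k / n)) for k in range(n)]

def polygon_area(pts):
    """Shoelace area; positive for counterclockwise bead ordering."""
    n = len(pts)
    return 0.5 * sum(pts[i][0] * pts[(i + 1) % n][1] - pts[(i + 1) % n][0] * pts[i][1]
                     for i in range(n))

def energy(pts, a0, l0, ka, kl):
    """U = ka/2 (A/a0 - 1)^2 + kl/2 * sum_i (l_i/l0 - 1)^2."""
    n = len(pts)
    u = 0.5 * ka * (polygon_area(pts) / a0 - 1.0) ** 2
    for i in range(n):
        (x1, y1), (x2, y2) = pts[i], pts[(i + 1) % n]
        u += 0.5 * kl * (math.hypot(x2 - x1, y2 - y1) / l0 - 1.0) ** 2
    return u

def forces(pts, a0, l0, ka, kl):
    """Force on each bead, F_i = -dU/dr_i, computed analytically."""
    n = len(pts)
    f = [[0.0, 0.0] for _ in range(n)]
    # Area term: dA/dx_i = (y_{i+1} - y_{i-1})/2, dA/dy_i = (x_{i-1} - x_{i+1})/2.
    pref = ka * (polygon_area(pts) / a0 - 1.0) / a0
    for i in range(n):
        xp, yp = pts[(i + 1) % n]
        xm, ym = pts[i - 1]
        f[i][0] -= pref * 0.5 * (yp - ym)
        f[i][1] -= pref * 0.5 * (xm - xp)
    # Spring term: a stretched bond pulls its beads together, a compressed one pushes them apart.
    for i in range(n):
        j = (i + 1) % n
        dx, dy = pts[j][0] - pts[i][0], pts[j][1] - pts[i][1]
        length = math.hypot(dx, dy)
        mag = kl * (length / l0 - 1.0) / l0  # dU/dl for this bond
        f[i][0] += mag * dx / length
        f[i][1] += mag * dy / length
        f[j][0] -= mag * dx / length
        f[j][1] -= mag * dy / length
    return f

def relax(pts, a0, l0, ka, kl, dt=0.01, steps=2000):
    """Overdamped dynamics (plain gradient descent): r_i <- r_i + dt * F_i."""
    pts = [list(p) for p in pts]
    for _ in range(steps):
        for p, fi in zip(pts, forces(pts, a0, l0, ka, kl)):
            p[0] += dt * fi[0]
            p[1] += dt * fi[1]
    return [tuple(p) for p in pts]

# Usage: squeeze a 16-bead ring to 80% of its radius and let it relax.
n = 16
a0 = polygon_area(regular_ring(n, 1.0))  # reference area
l0 = 2 * math.sin(math.pi / n)           # reference bond length (radius 1)
squeezed = regular_ring(n, 0.8)
relaxed = relax(squeezed, a0, l0, ka=1.0, kl=1.0)
print(polygon_area(squeezed) / a0, polygon_area(relaxed) / a0)
```

Under the overdamped update, the squeezed ring moves back toward its reference area. Because the update is an explicit step, `dt` has to shrink as `ka` and `kl` grow; otherwise a stiff, nearly rigid ring makes the iteration unstable.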

The researchers found that allowing for collective shape changes produced material responses that they would not have observed with particles of fixed spherical shape. The results underscore the importance of incorporating shape variability into models of tissues, foams, and other soft solids composed of deformable particles.

“We now need to extend the model to three dimensions, which more closely mimics the real world,” Wang said. “We can also apply the deformable particle model to active biological systems, which can form swarms, schools, and flocks.”

Treado and Wang are also currently using this new supercomputer model to study how tumor cells invade adipose tissue in breast cancer. In most cancers, the tumor cells can change their shapes to crawl through dense tissue, reach blood vessels, and spread to other sites.

“We are now seeking to determine the physical limits of tumor cells’ deformability, and the forces that they must exert to push through a dense tissue,” Treado said. Their work may lead to improvements in the ability to predict whether cancers will metastasize or not.