Researchers at the University of California, Riverside, have used a nanoscale synthetic antiferromagnet to control the interaction between magnons -- research that could lead to faster and more energy-efficient computers.

In ferromagnets, electron spins point in the same direction. To make future computer technologies faster and more energy-efficient, spintronics research employs spin dynamics -- fluctuations of the electron spins -- to process information. Magnons, the quantum-mechanical units of spin fluctuations, interact with each other, leading to nonlinear features of the spin dynamics. Such nonlinearities play a central role in magnetic memory, spin torque oscillators, and many other spintronic applications.

For example, in the emergent field of magnetic neuromorphic networks -- a technology that mimics the brain -- nonlinearities are essential for tuning the response of magnetic neurons. Nonlinear spin dynamics may also become instrumental in another frontier area of research: quantum information.

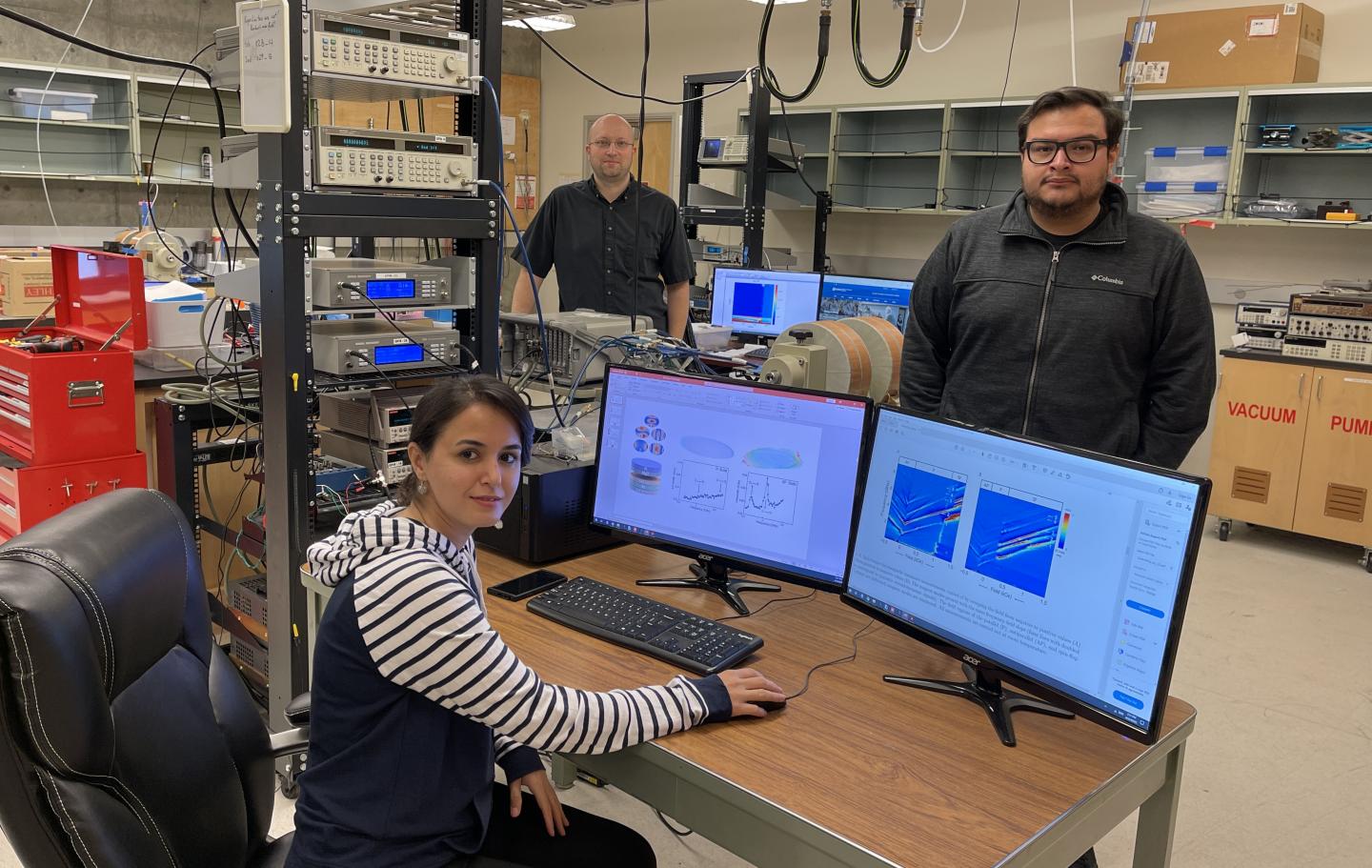

"We anticipate the concepts of quantum information and spintronics to consolidate in hybrid quantum systems," said Igor Barsukov, an assistant professor in the Department of Physics & Astronomy who led the study, which appears in ACS Applied Materials & Interfaces. "We will have to control nonlinear spin dynamics at the quantum level to achieve their functionality."

Barsukov explained that in nanomagnets, which serve as building blocks for many spintronic technologies, magnons show quantized energy levels. Interaction between the magnons follows certain symmetry rules. The research team learned to engineer the magnon interaction and identified two approaches to achieve nonlinearity: breaking the symmetry of the nanomagnet's spin configuration, and modifying the symmetry of the magnons. They chose the second approach.

"Modifying magnon symmetry is the more challenging but also more application-friendly approach," said Arezoo Etesamirad, the first author of the research paper and a graduate student in Barsukov's lab.

In their approach, the researchers subjected a nanomagnet to a magnetic field that showed nonuniformity at characteristic nanometer length scales. This nanoscale nonuniform magnetic field itself had to originate from another nanoscale object.

As the source of such a magnetic field, the researchers used a nanoscale synthetic antiferromagnet, or SAF, consisting of two ferromagnetic layers with antiparallel spin orientation. In its normal state, the SAF generates almost no stray field, the magnetic field that surrounds it. Once it undergoes the so-called spin-flop transition, the spins become canted and the SAF generates a stray field that is nonuniform at the nanoscale, as needed. The researchers switched the SAF between the normal state and the spin-flop state in a controlled manner to toggle the symmetry-breaking field on and off.

"We were able to manipulate the magnon interaction coefficient by at least one order of magnitude," Etesamirad said. "This is a very promising result, which could be used to engineer coherent magnon coupling in quantum information systems, create distinct dissipative states in magnetic neuromorphic networks, and control large excitation regimes in spin-torque devices."
