Supercomputer simulations have revealed Saturn’s magnetic “bubble” to be a dynamic, lopsided structure. The finding overturns the long-held assumption that it is symmetric and shows how modern simulations can expose planetary features that observations alone miss.

The discovery, led by scientists at University College London, was made possible by cutting-edge supercomputer simulations that recreate the complex interaction between the solar wind and planetary magnetic fields.

A Magnetic Bubble Reimagined

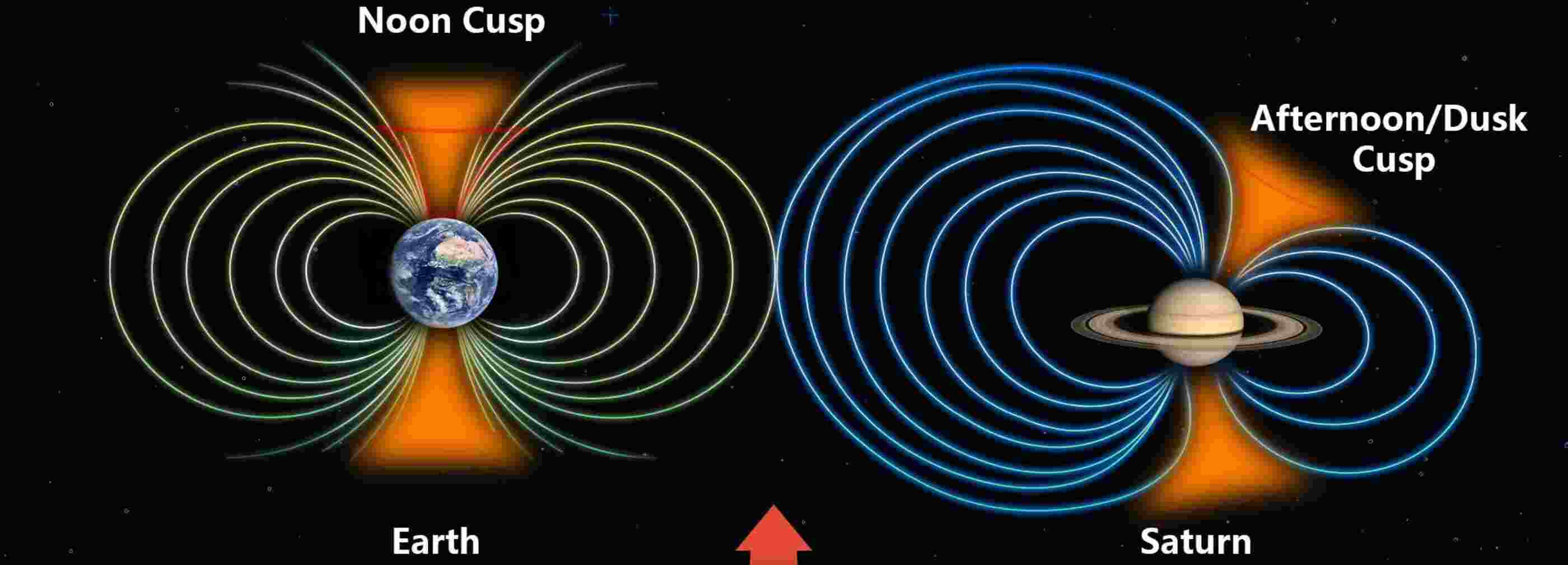

Every magnetized planet is enveloped by a magnetosphere, a protective bubble that deflects charged particles streaming from the Sun. On Earth, this bubble is relatively well understood and largely symmetric.

But Saturn tells a different story.

Using high-resolution computational models, scientists found that Saturn’s magnetosphere is distinctly lopsided, stretched, and distorted in ways that challenge decades of assumptions. Instead of a neat, balanced structure, the simulations reveal a system shaped by competing pressures and flows in space.

This breakthrough rests not only on new data but on the ability of supercomputers to simulate global-scale plasma physics in enough detail to resolve Saturn’s true magnetic shape.

Supercomputing: The Engine Behind the Discovery

To decode Saturn’s magnetic environment, researchers turned to advanced magnetohydrodynamic (MHD) simulations: mathematical models that describe how electrically conducting gases, or plasmas, behave in magnetic fields.

These simulations demand immense computational power.

Supercomputers enabled the team to:

- Model the solar wind interacting with Saturn’s magnetic field in three dimensions.

- Track how plasma flows reshape the magnetosphere over time.

- Capture subtle asymmetries that are invisible to spacecraft observations alone.

The result is a fully dynamic portrait of Saturn’s magnetic bubble, one that evolves continuously under the influence of solar energy and internal planetary processes.

Such simulations bridge a critical gap: spacecraft like Cassini measure the magnetosphere only at points along their paths, but supercomputers connect those snapshots into a picture of the whole, evolving system.

A Planetary System in Motion

The simulations indicate that Saturn’s magnetosphere is compressed, stretched, and skewed by external forces, resulting in a persistent imbalance. This lopsidedness affects how energy and particles circulate around the planet, influencing everything from auroras to radiation belts.

Crucially, the findings suggest that Saturn’s atmosphere and magnetosphere are tightly coupled, feeding energy into one another in a complex feedback loop.

This insight would be nearly impossible without computational modeling at scale. The physics involved spans vast distances and countless interactions, precisely the kind of challenge modern supercomputers are built to solve.

Inspiration at the Edge of Computation

Beyond Saturn itself, the study signals something larger: a new era in which supercomputing becomes a primary tool of discovery in space science.

By simulating entire planetary environments, researchers can now:

- Test theories that cannot be reproduced experimentally.

- Predict space weather conditions across the solar system.

- Compare magnetic worlds, from Earth to distant exoplanets.

In doing so, supercomputers are transforming how we explore space, not by traveling farther, but by thinking deeper.

A New View of the Solar System

Saturn’s newly revealed asymmetry is more than a curiosity; it is a reminder that even familiar worlds still hold profound surprises.

And increasingly, those surprises are being uncovered not just through telescopes or spacecraft, but through the silent, relentless calculations of the world’s most powerful machines.

In the hum of supercomputers, we are beginning to hear the true shape of planets, and the deeper rhythms of the universe itself.