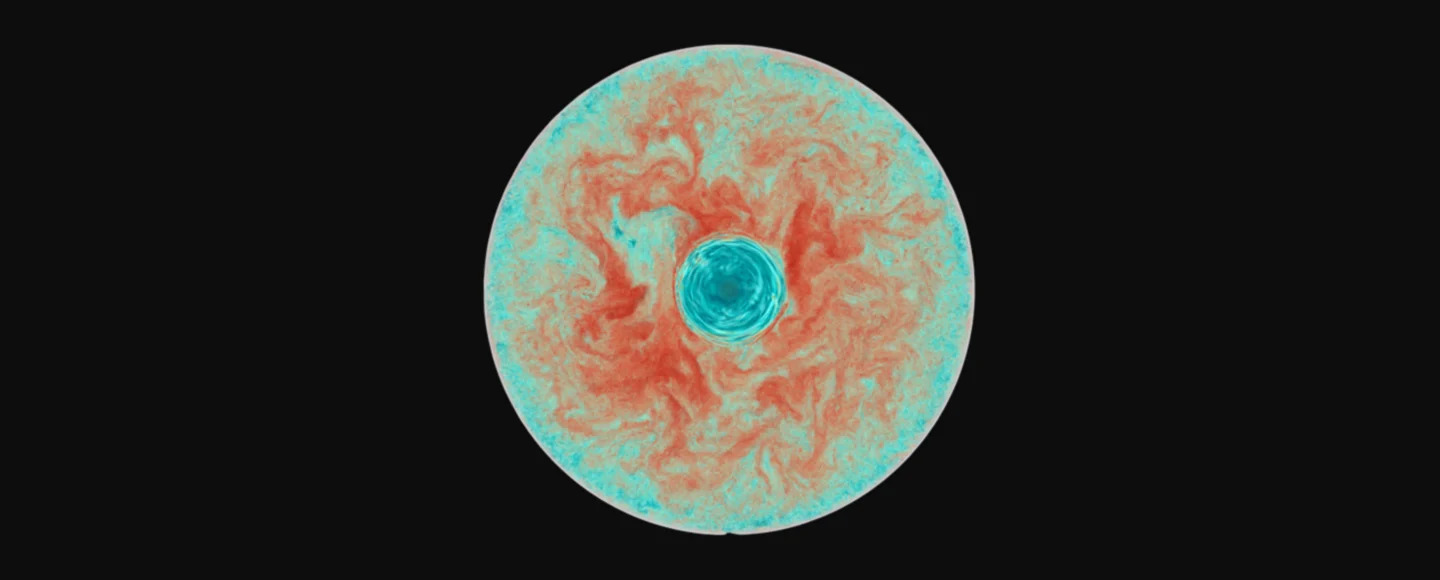

Astrophysicists have long puzzled over a key mystery in the life cycle of red giant stars, the swollen, aging stars that will eventually include our own Sun. For more than fifty years, scientists have documented changes in the surface chemistry of these stars as they evolve; however, the process responsible for these changes has remained unclear. Now, researchers at the University of Victoria’s Astronomy Research Centre report that advanced supercomputer simulations have finally cracked the case: stellar rotation intensifies internal mixing, carrying elements from deep inside red giants up to their surfaces.

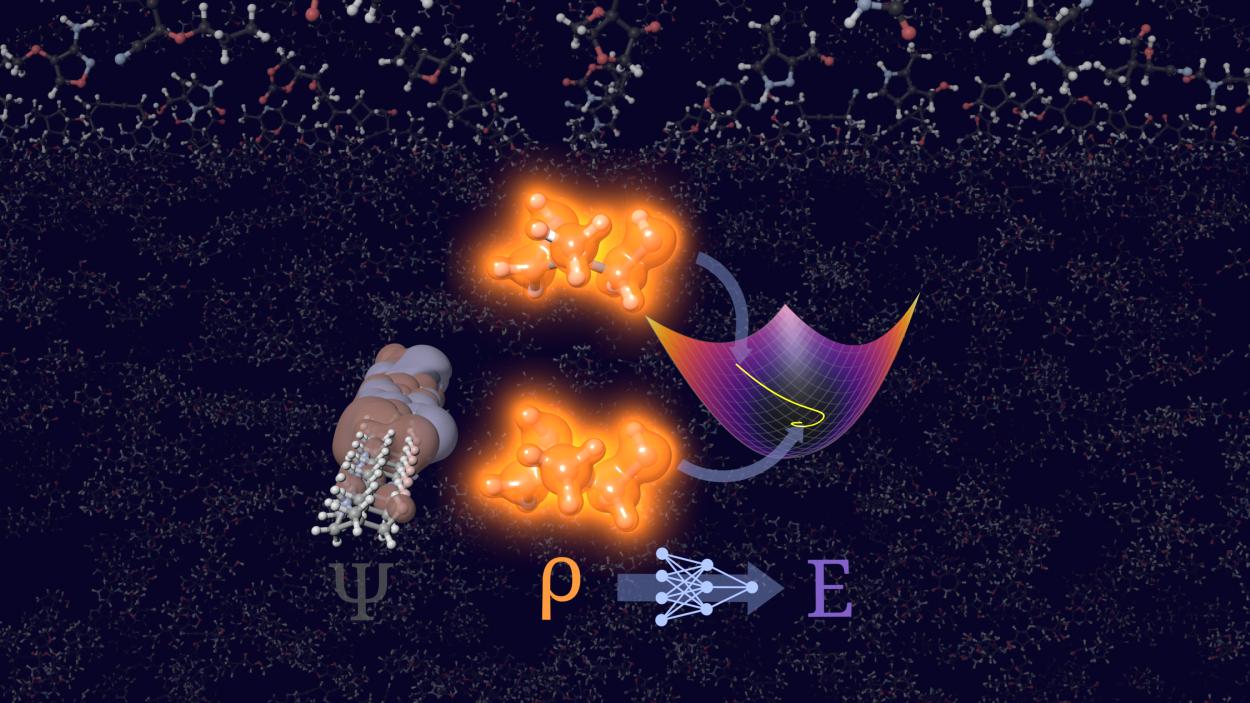

These results are grounded in sophisticated three-dimensional hydrodynamical simulations. Such simulations are feasible only thanks to the immense computational power of modern high-performance computing facilities, including the Texas Advanced Computing Center and the Trillium supercomputer at SciNet in Canada.

According to lead researcher Simon Blouin, rotation dramatically increases the efficiency with which internal waves move material through the stable barrier layer between the core and the outer convection zone. In practical terms, this means that elements like carbon and nitrogen can be transported outward in ways that align with what telescopes have observed for decades, particularly changes in isotopic ratios such as carbon-12 to carbon-13 that had until now lacked a convincing explanation.

But before declaring this celestial riddle fully solved, especially for a scientifically literate audience like that of SC Online, it’s worth digging into what this “solution” really entails, and where skepticism might still be warranted.

Simulation Success, But What About Reality?

The crux of the new work lies in computational hydrodynamics: solving the fluid motion of stellar interiors under the influence of rotation, gravity, turbulence, and thermal gradients. These simulations are not simple; even with hundreds of processors working in parallel, individual runs can consume millions of CPU hours. Their scope and resolution reflect the kind of computational scale once reserved for meteorology and climate models, “big science” simulations where raw computational power often dictates what questions can be asked as much as what answers are found.
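To give a feel for what "millions of CPU hours" means in practice, here is a minimal back-of-the-envelope sketch. It assumes perfect parallel scaling (real codes fall short of this) and treats one "processor" as one core. The figures of 1,000,000 core-hours and 500 cores are hypothetical illustrations, not numbers reported for the UVic simulations.

```python
def wall_clock_days(core_hours: float, cores: int) -> float:
    """Wall-clock days needed to spend `core_hours` on `cores` cores.

    Assumes ideal (linear) parallel scaling, which is an optimistic
    simplification; real runs lose time to communication and I/O.
    """
    if cores <= 0:
        raise ValueError("cores must be a positive integer")
    hours = core_hours / cores   # elapsed hours if all cores run concurrently
    return hours / 24.0          # convert elapsed hours to days


if __name__ == "__main__":
    # Hypothetical budget: one million core-hours on 500 cores.
    days = wall_clock_days(1_000_000, 500)
    print(f"{days:.1f} days of continuous running")
```

Under these assumptions, a single run of that size would occupy the machine for roughly twelve weeks, which is why such studies can only explore a limited set of rotation rates and stellar structures.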

While the results reproduce observed surface anomalies under specific rotation regimes, there are critical caveats inherent to any model of such complexity:

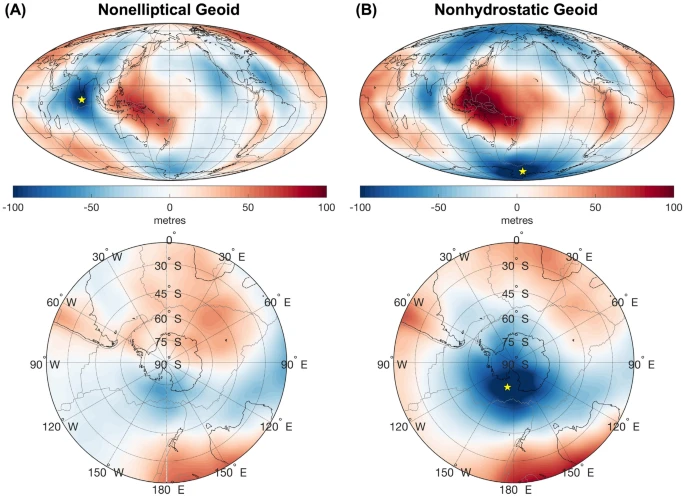

- Parameter Dependence: The simulations assume particular rotation rates and internal structural profiles. Whether those parameters accurately represent all red giants, especially those with different masses or histories, is not firmly established.

- Resolution Limits: Even top-tier HPC clusters must balance between resolution and computational cost. Fine details of mixing processes can be sensitive to grid size and physics approximations, meaning that what appears as a “solution” at one scale might shift at higher fidelity.

- Model Uncertainties: Stellar interiors host a vast array of poorly constrained physical processes, from magnetism to subtle wave interactions, some of which may not be fully captured in current models.

In other words, while the simulations are impressive and represent a significant step forward in computational astrophysics, there remains ample room for cautious interpretation.
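The resolution trade-off noted above can be made concrete with a common rule of thumb. The sketch below assumes a uniform 3D grid and an explicit time-stepping scheme whose time step shrinks in proportion to the cell size (a CFL-type limit). The article does not say which numerical scheme the researchers used, so this is an illustrative scaling argument rather than a description of their code.

```python
def relative_cost(refinement: float, dims: int = 3) -> float:
    """Approximate cost multiplier when each grid dimension is refined.

    `refinement` is the factor by which the number of cells per dimension
    grows (2.0 means cells half as wide). Assumes:
      * cell count scales as refinement ** dims
      * number of time steps scales as refinement (CFL-limited explicit scheme)
    Both are simplifying assumptions for illustration only.
    """
    if refinement <= 0:
        raise ValueError("refinement must be positive")
    cells = refinement ** dims   # more cells to update per time step
    steps = refinement           # smaller cells force smaller time steps
    return cells * steps


if __name__ == "__main__":
    for factor in (1.0, 2.0, 4.0):
        print(f"refine x{factor:g}: cost x{relative_cost(factor):g}")
```

With these assumptions, doubling the resolution in every direction costs about 16 times as much compute, so a convergence check at higher fidelity can easily exceed the budget of the original run.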

The Computational Science Perspective

For the supercomputing community, the UVic work is a testament to both the power and limitations of HPC. Without large-scale simulations (computations spread across hundreds or thousands of processors), the study of how rotating convection and internal gravity waves interact inside a star would remain purely theoretical. Supercomputers act here as numerical laboratories, where hypotheses about internal stellar dynamics can be tested in silico, complementing observations from telescopes with otherwise unreachable insights.

At the same time, this breakthrough highlights that solving a scientific problem rarely equates to closure. Computational results often raise as many questions as they answer: How universal is the rotational mixing mechanism across the diversity of red giant stars? Could different physical processes dominate in other evolutionary phases? And how might uncertainties in initial conditions or physics assumptions influence model outcomes?

These are issues that only further HPC-driven research, informed by both observation and theoretical refinement, can address. In that sense, the latest simulations are less a final answer and more a checkpoint in a long, iterative process of scientific inquiry.

A Future Written in Code

As supercomputing power continues to grow and astrophysical models become ever more detailed, simulations like these will increasingly serve as essential tools for unraveling cosmic mysteries. Whether the problem is mixing inside red giants or galaxy formation at cosmological scales, HPC remains at the frontier of our capacity to think computationally about the universe.

Still, caution is warranted. Matching known observations with a model marks significant progress, but it does not equate to a final answer. In astronomy and other computational sciences, findings are only as robust as the underlying assumptions, and verifying those assumptions across the universe’s full complexity is a task that extends far beyond any individual study.

At present, supercomputer-generated star models present a compelling narrative for how rotation affects red giant surfaces. Whether this narrative endures further examination, evolves with new data, or is ultimately rewritten remains to be seen.
