What if the future of supercomputing didn’t depend on building bigger machines, but on discovering smaller, stranger building blocks?

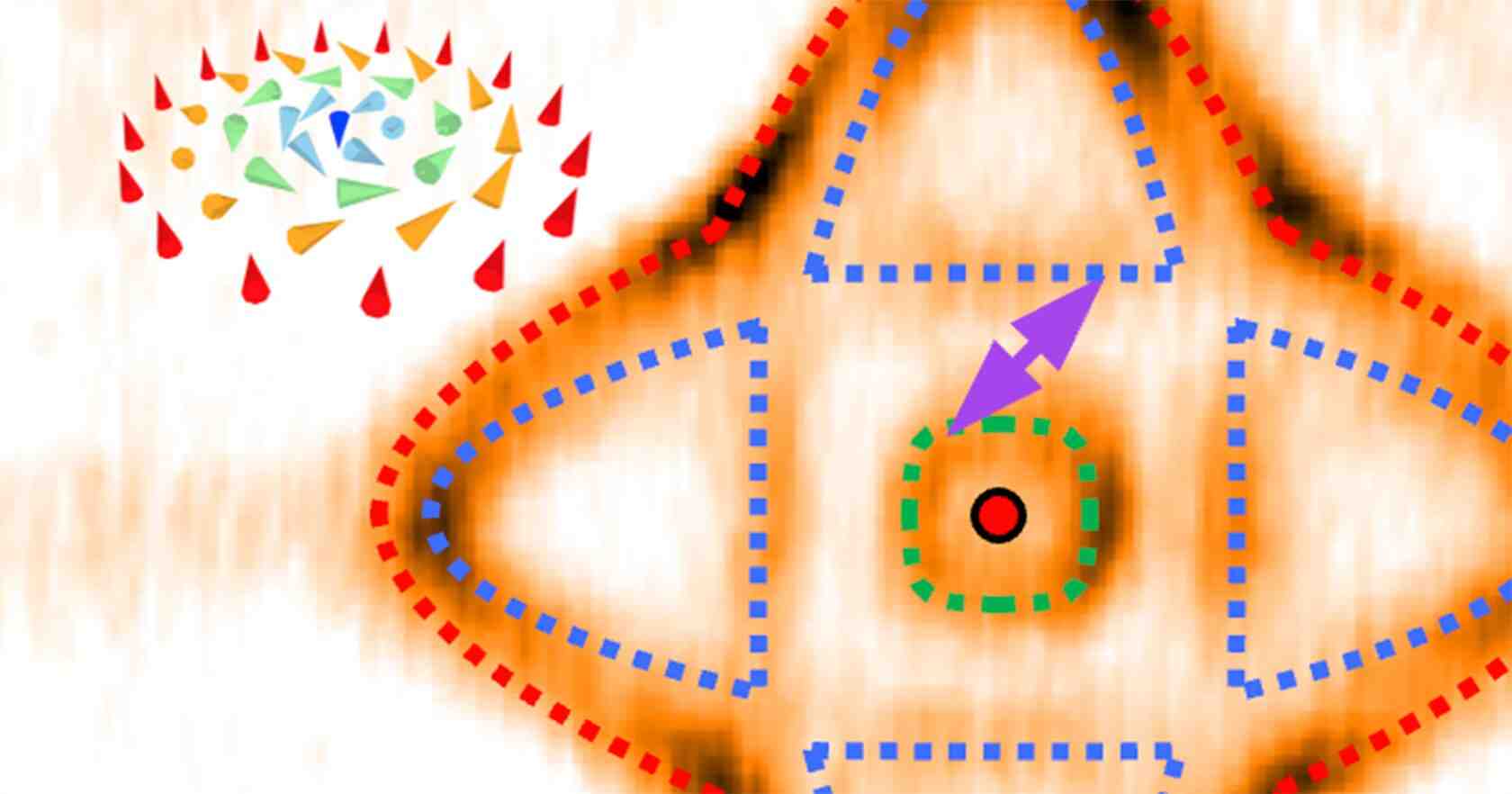

Deep inside exotic materials, researchers have uncovered something that looks almost imaginary: tiny magnetic whirlpools just 2 nanometers wide, tens of thousands of times thinner than a human hair.

These structures, known as skyrmions, were once purely theoretical.

Now, they may hold the key to the next revolution in computing.

A particle that isn’t a particle

Skyrmions are not particles in the traditional sense. They are swirling patterns of magnetism, vortex-like arrangements of atomic spins that behave as if they are stable, self-contained objects.
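
To make the idea of a "swirling pattern" concrete, here is a minimal sketch in Python. It assumes a simple linear spin profile on a small square grid (the grid size, radius, and function names are illustrative choices, not taken from the study) and counts how many times the spins wrap around a sphere, a quantity physicists call the topological charge. That whole-number count is what lets a skyrmion behave like a self-contained object.

```python
import math

def neel_skyrmion(n=41, radius=15.0):
    """Build an n x n grid of unit spins holding one whirl (assumed linear profile)."""
    c = (n - 1) / 2.0
    grid = []
    for i in range(n):
        row = []
        for j in range(n):
            x, y = i - c, j - c
            r = math.hypot(x, y)
            # Spin points down at the core (theta = pi) and up outside the radius.
            theta = math.pi * (1.0 - r / radius) if r < radius else 0.0
            phi = math.atan2(y, x)
            row.append((math.sin(theta) * math.cos(phi),
                        math.sin(theta) * math.sin(phi),
                        math.cos(theta)))
        grid.append(row)
    return grid

def dot(u, v):
    return u[0] * v[0] + u[1] * v[1] + u[2] * v[2]

def solid_angle(a, b, c):
    """Signed solid angle spanned by three unit vectors."""
    cross = (b[1] * c[2] - b[2] * c[1],
             b[2] * c[0] - b[0] * c[2],
             b[0] * c[1] - b[1] * c[0])
    triple = dot(a, cross)
    return 2.0 * math.atan2(triple, 1.0 + dot(a, b) + dot(b, c) + dot(c, a))

def topological_charge(grid):
    """Sum the solid angles of all lattice triangles and divide by 4*pi."""
    total = 0.0
    for i in range(len(grid) - 1):
        for j in range(len(grid[0]) - 1):
            a, b = grid[i][j], grid[i + 1][j]
            c, d = grid[i + 1][j + 1], grid[i][j + 1]
            # Split each square cell into two counterclockwise triangles.
            total += solid_angle(a, b, c) + solid_angle(a, c, d)
    return total / (4.0 * math.pi)

if __name__ == "__main__":
    uniform = [[(0.0, 0.0, 1.0)] * 5 for _ in range(5)]
    print("skyrmion charge:", round(topological_charge(neel_skyrmion()), 6))
    print("uniform magnet charge:", round(topological_charge(uniform), 6))
```

Under this sign convention the whirl comes out with a charge close to -1, while a uniform magnet gives 0. Small distortions of the pattern leave that count unchanged, which is one way to picture why such a texture can act like a stable object.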

For years, scientists suspected they could exist. But understanding how they form and how to control them remained elusive.

That is beginning to change.

Researchers at Tohoku University in Japan have now identified critical mechanisms behind skyrmion formation, revealing that these structures can form in materials once thought unable to host them.

Even more surprisingly, their behavior appears to follow a kind of hidden blueprint encoded in the material’s electronic structure.

The blueprint beneath the surface

At the heart of the discovery is a phenomenon called a Lifshitz transition, a sudden shift in a material’s electronic state.

When this shift occurs, it reshapes the material’s “Fermi surface,” creating overlapping patterns that act like a structural template for skyrmions.

Researchers describe this as a kind of design rule: a way to predict not just whether skyrmions will form, but their size, arrangement, and behavior.

Even the force that stabilizes them turned out to be unexpected.

For years, scientists believed skyrmions were governed by one type of magnetic interaction. Instead, the new study shows they are driven by the RKKY interaction, a subtle effect mediated by electrons moving through the material.
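
For a rough feel of what "mediated by electrons" means, the sketch below evaluates the textbook free-electron form of the RKKY range function in three dimensions, F(x) = (x cos x - sin x) / x^4 with x = 2 k_F r, where k_F is the Fermi wavevector and r is the distance between two magnetic atoms. This is an idealized formula, not the model used in the study, and the overall prefactor and sign convention vary between references; the function names are illustrative.

```python
import math

def rkky_range(x):
    """Textbook 3D free-electron RKKY range function, with x = 2 * k_F * r."""
    return (x * math.cos(x) - math.sin(x)) / x**4

def sign_changes(xs):
    """Count how often the coupling flips sign along a list of distances."""
    vals = [rkky_range(x) for x in xs]
    return sum(1 for a, b in zip(vals, vals[1:]) if a * b < 0)

if __name__ == "__main__":
    xs = [1.0 + 0.1 * k for k in range(111)]  # x from 1.0 to 12.0
    for x in (1.0, 5.0, 8.0):
        print(f"x = {x:4.1f}  F(x) = {rkky_range(x):+.5f}")
    print("sign flips between x = 1 and x = 12:", sign_changes(xs))
```

The point of the exercise is the oscillation: depending on distance, the same interaction favors parallel or antiparallel spins, and its strength fades quickly. Competing preferences of this kind are what allow twisted spin patterns to win out over simple uniform magnetism.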

It is a reminder that in physics, even well-established assumptions can quietly unravel.

Why supercomputing cares

At first glance, these nanoscale whirlpools might seem far removed from the world of high-performance computing.

But they address one of its biggest challenges: memory.

Modern supercomputers, and especially AI-driven data centers, are increasingly limited not by processing power, but by how fast and efficiently they can store and move data. Energy consumption has become a defining constraint.

This is where skyrmions become intriguing.

They are:

- Extremely stable (resistant to disruption)

- Tiny (enabling ultra-high-density storage)

- Energy-efficient (movable with minimal electrical current)

In practical terms, this means future memory devices could store vastly more data while consuming far less power, a combination that could redefine the architecture of supercomputing systems.

Memory that moves like a fluid

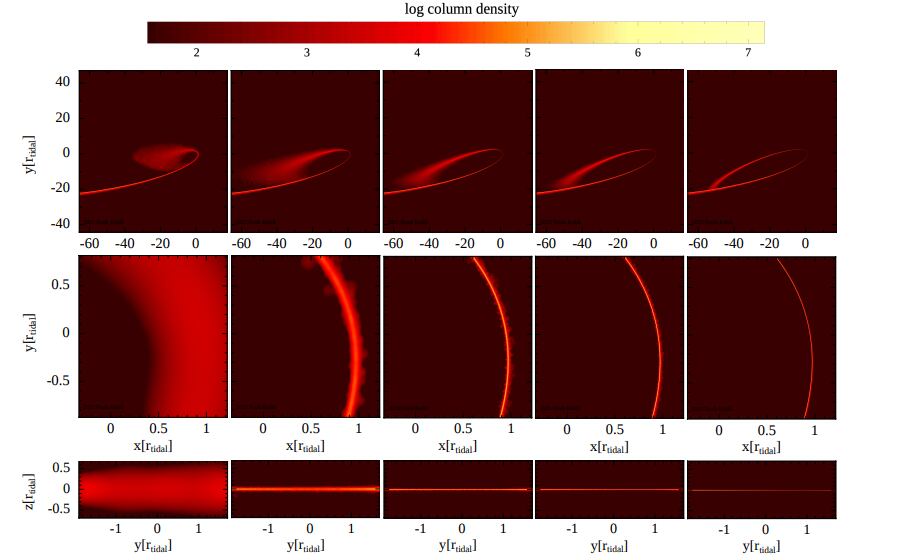

One of the most curious aspects of skyrmions is how they behave.

Unlike traditional bits stored in fixed locations, skyrmions can move, gliding through materials like microscopic beads on a track. This opens the door to entirely new types of memory, where data is not just stored, but dynamically transported.
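
The "beads on a track" picture can be sketched as a toy shift register. The model below is purely illustrative: the class name, the closed-loop track, and the single fixed read/write position are assumptions made for the example, and it ignores all real device physics. A 1 stands for a skyrmion at a site, a 0 for an empty site, and a current pulse shifts every bead along the track by one position.

```python
from collections import deque

class ToyRacetrack:
    """Toy closed-loop track: 1 = skyrmion present, 0 = empty site."""

    def __init__(self, length):
        self.track = deque([0] * length)

    def write(self, bit):
        # Create or remove a skyrmion at the fixed head position (index 0).
        self.track[0] = 1 if bit else 0

    def read(self):
        # Sense whether a skyrmion sits under the head.
        return self.track[0]

    def pulse(self, steps=1):
        # A current pulse moves every skyrmion along the loop together.
        self.track.rotate(steps)

    def store(self, bits):
        for bit in bits:
            self.write(bit)
            self.pulse()

    def load(self, count):
        # Keep pulsing until the first-written bit returns to the head.
        self.pulse(len(self.track) - count)
        out = []
        for _ in range(count):
            out.append(self.read())
            self.pulse()
        return out

if __name__ == "__main__":
    tape = ToyRacetrack(8)
    tape.store([1, 0, 1, 1])
    print(tape.load(4))
```

The design choice worth noticing is that the head never moves: the data comes to it. That is the sense in which such a memory would transport information rather than hold it at fixed addresses.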

It’s a concept that feels almost biological: less like rigid hardware and more like a flowing system.

And it raises new questions:

- Could memory become something that evolves in real time?

- Could computing architectures shift from static layouts to dynamic ones?

- Could supercomputers become not just faster, but fundamentally different?

From theory to technology

There is still a long road ahead.

One major challenge is temperature: many skyrmion systems currently form only at temperatures too low to be practical for everyday devices. Researchers are now working to design materials that function reliably at higher, more accessible temperatures.

But the path forward is clearer than before.

By linking electronic structure to magnetic behavior, scientists are moving from trial-and-error experimentation to intentional design, engineering materials with properties tailored for computation.

A curious future, computed at the nanoscale

What makes this discovery so compelling is not just its potential, but its strangeness.

A swirling pattern of spins, once a mathematical curiosity, now sits at the center of a possible technological shift.

And like many breakthroughs in modern science, it exists at the intersection of disciplines:

- quantum physics

- materials science

- nanotechnology

- and, increasingly, supercomputing

Understanding, simulating, and ultimately harnessing skyrmions requires immense computational power. Their behavior emerges from complex interactions that only advanced modeling and high-performance computing can fully capture.

The smallest frontier

In the race toward more powerful supercomputers, it’s tempting to think in terms of scale: more cores, more data, more energy.

But skyrmions suggest a different path.

One where progress comes not from building bigger systems, but from discovering smaller, smarter ones.

Where the future of computation may hinge on structures so small they were once invisible to science, and so elegant they almost feel like nature’s own code.

And where curiosity, once again, leads the way.
