In the grand cosmic ballet, stars live tumultuous lives, forming in blazing clouds of gas, burning for millions of years, and ultimately exploding as supernovae that reshape entire galaxies. Now, thanks to cutting-edge astronomical surveys and the next generation of supercomputer simulations, scientists are beginning to see where and how these cataclysmic events unfold across the vast tapestry of space, even in places once thought unlikely.

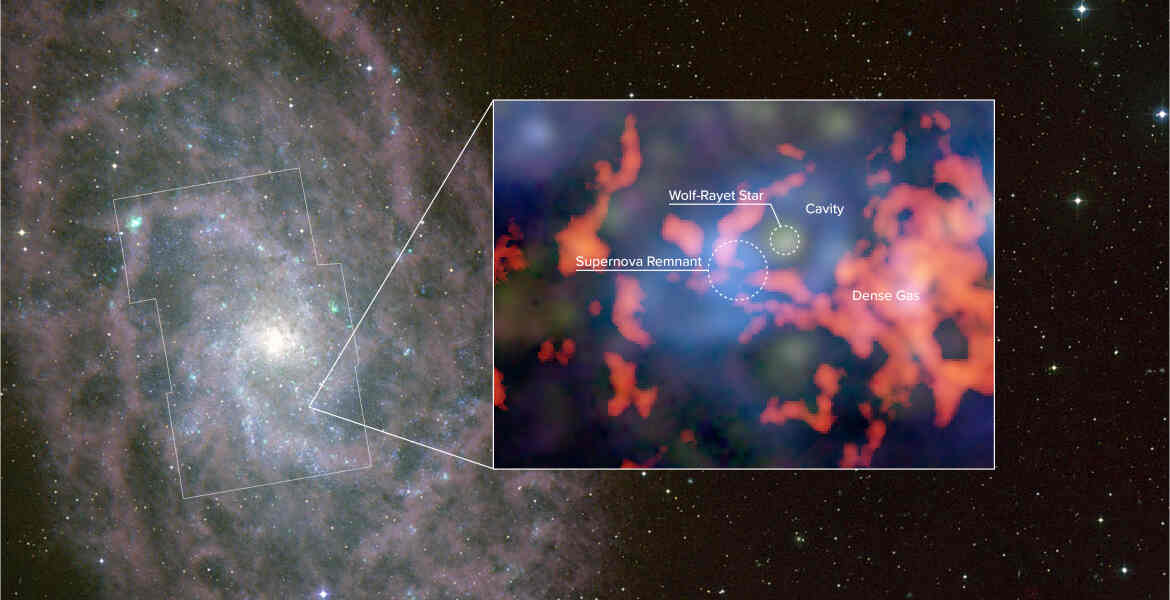

A collaborative team of astronomers has produced the first large-scale census of evolved massive stars, those on the brink of explosive death, across the nearby spiral galaxy M33. By overlaying high-resolution gas maps from the NSF’s Very Large Array and ALMA with catalogs of thousands of red supergiants, Wolf–Rayet stars, and known supernova remnants, researchers uncovered a surprising truth: a majority of future stellar explosions are likely to occur outside the dense clouds where stars are born.

This revelation reshapes our understanding of how galaxies evolve. Supernovae don’t merely spew heavy elements into dense star-forming chambers; many detonate within the more diffuse interstellar medium. In these off the beaten path locales, their shock waves travel farther before dissipating, stirring gas over larger scales and influencing the cosmic ecosystem in ways that traditional models hadn’t fully captured.

Supercomputing: The Engine Behind Cosmic Insight

Bringing this level of detail to astrophysics isn’t possible without supercomputing, the computational backbone of modern galaxy simulations. Observational efforts like the Local Group L-Band Survey provide exquisite maps of gas and stars, but only large-scale cosmological simulations can trace millions to billions of years of galactic evolution, modeling how stars interact with their environments over cosmic time.

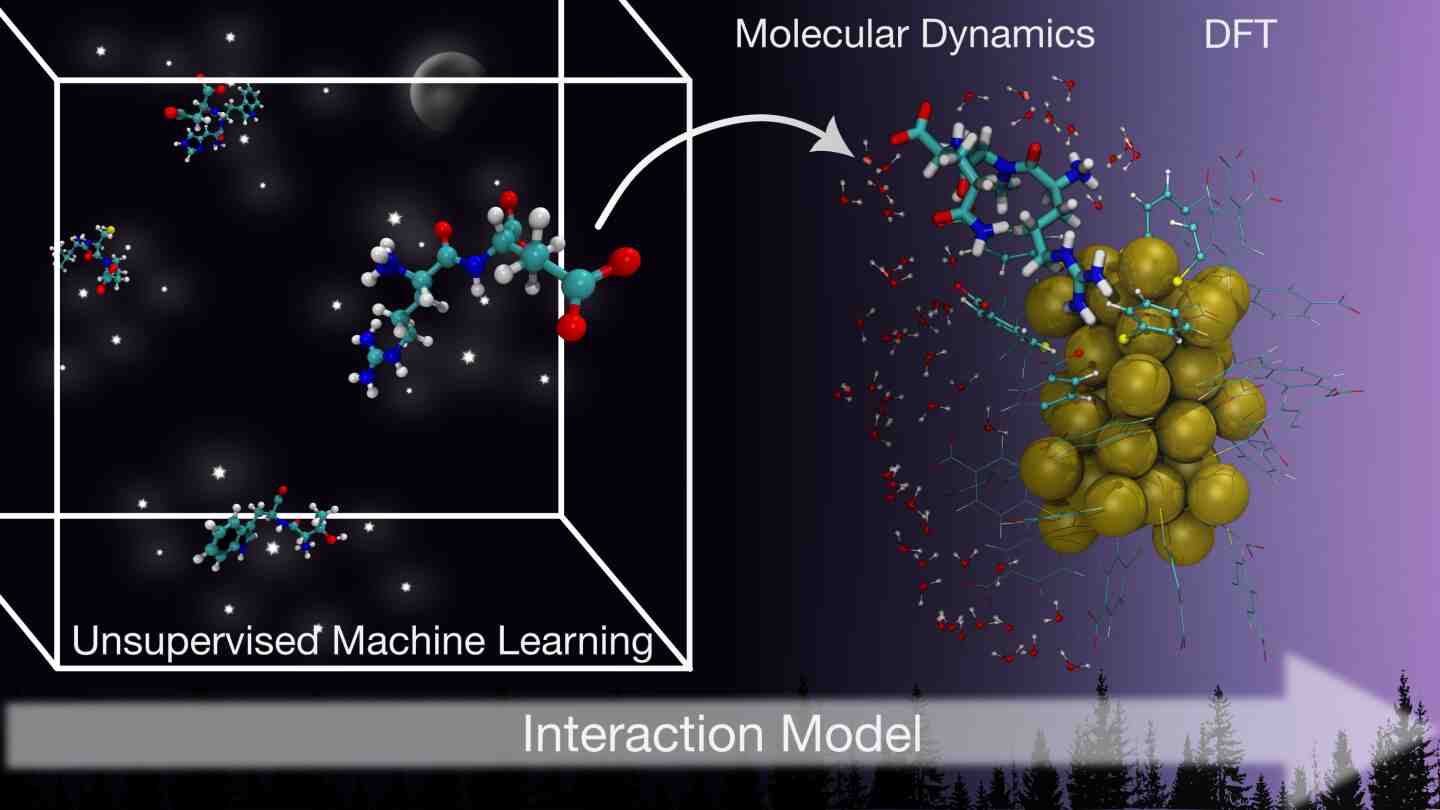

These simulations, ambitious in both scale and physics, run on some of the world’s most powerful supercomputers, incorporating gravity, hydrodynamics, radiative feedback, and turbulent gas flows.

Models such as FIRE, Illustris, TIGRESS, and SILCC integrate complex subgrid physics to approximate processes occurring at scales far smaller than individual simulation cells. The new stellar census from M33 provides a critical benchmark for these simulations, giving astrophysicists real-world data against which to test and refine their codes.

Without high-performance computing, tracking the intricate interplay between massive stars and their gaseous surroundings across an entire galaxy, from cold molecular clouds to tenuous atomic hydrogen, would be unthinkable. Supercomputers enable researchers to explore how stellar winds, supernova blasts, and runaway stars shape the evolution of galaxies over billions of years, bridging the gap between theoretical physics and observable astrophysical phenomena.

Polishing the Future of Galaxy Modeling

The realization that many stars meet their end far from dense clouds is reshaping our view of galactic evolution. This new understanding challenges long-held beliefs about where energy and momentum are distributed throughout galaxies, alters predictions for galactic winds and the spread of elements, and drives simulation models to include more accurate feedback mechanisms. As new data from ALMA and future telescopes like the Next Generation Very Large Array become available, astronomers will continue to refine their insights with supercomputers playing a critical role in making sense of it all.

In this era of astronomical breakthroughs, supercomputing is more than just a tool for simulating the cosmos; it is a key to understanding our own cosmic origins. By combining detailed observations with immense computational power, scientists are piecing together the life cycles of stars and, through them, the evolution of galaxies. This blend of data and simulation marks a pivotal step forward in humanity’s journey to understand the universe.

How to resolve AdBlock issue?

How to resolve AdBlock issue?