Leveraging the capabilities of modern high-performance computing (HPC), astronomers have unraveled a cosmic mystery: the Milky Way and its closest neighboring galaxies are embedded in a sprawling sheet of matter that shapes the movement of surrounding galaxies. This breakthrough, featured in Nature Astronomy, was achieved using advanced simulations powered by cutting-edge supercomputers to model the mass distribution and dynamics of our local universe.

For decades, cosmologists have grappled with an apparent contradiction in galactic motion. While most galaxies in the universe recede from one another in accord with the expansion described by the Hubble–Lemaître law, our immediate neighborhood, the Local Group comprising the Milky Way, the Andromeda Galaxy, and dozens of dwarf galaxies, exhibits surprisingly coherent motion patterns that ordinary mass distributions failed to explain. The Andromeda Galaxy itself moves toward the Milky Way at about 100 km/s, a phenomenon long understood as gravitational interaction within the Local Group. Yet the behavior of other nearby galaxies did not align with theoretical expectations.

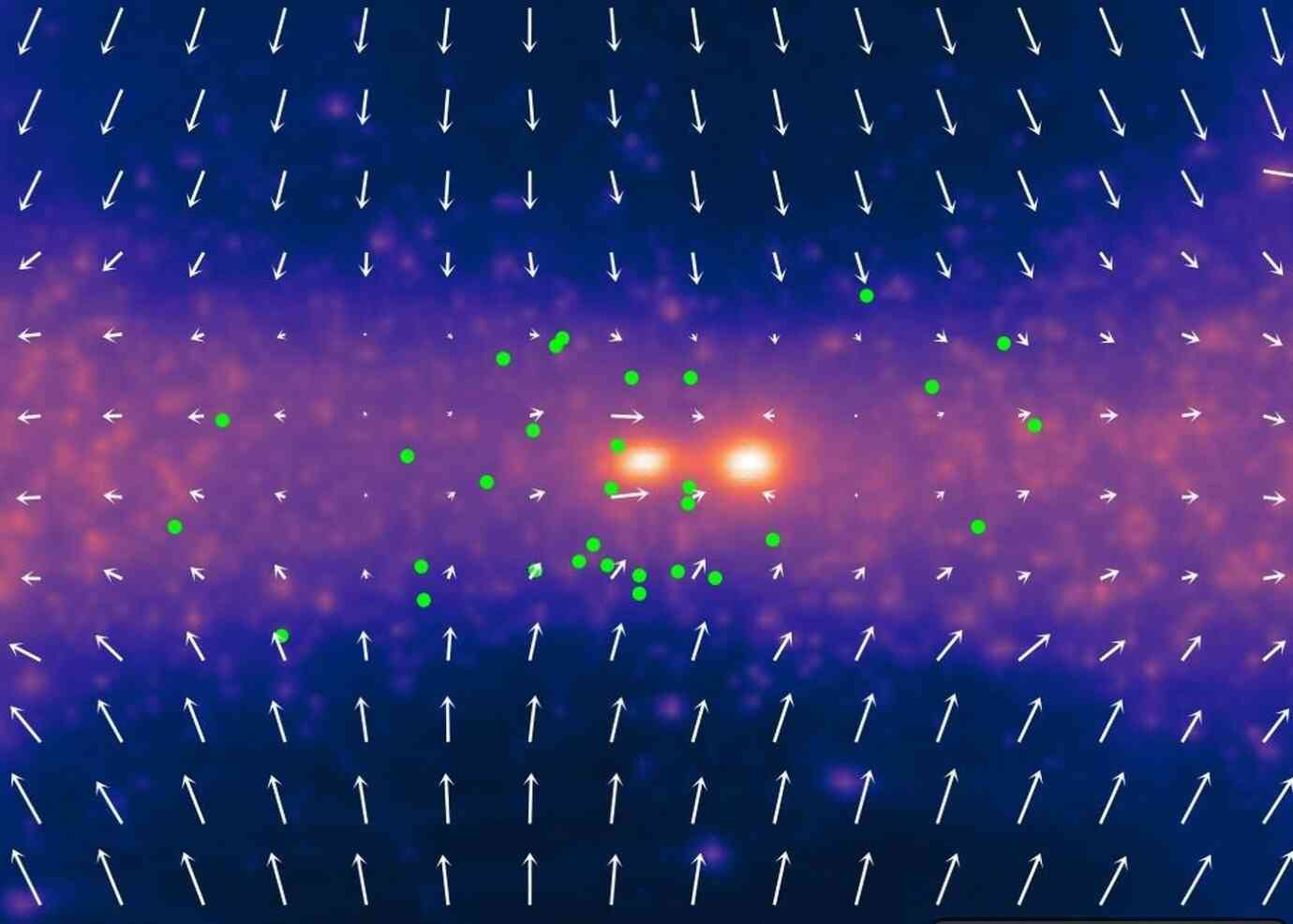

Now, an international team led by doctoral researcher Ewoud Wempe and Professor Amina Helmi at the University of Groningen has shown that the key to this puzzle lies not within the confines of the Local Group alone but in an extended, planar mass structure surrounding it. Using sophisticated cosmological simulations constrained by observational data, including the positions, masses, and velocities of 31 galaxies just beyond the Local Group, the researchers demonstrated that the vast majority of dark matter and visible matter in our vicinity is organized in a flat sheet extending tens of millions of light-years. Above and below this planar structure are vast voids with minimal matter.

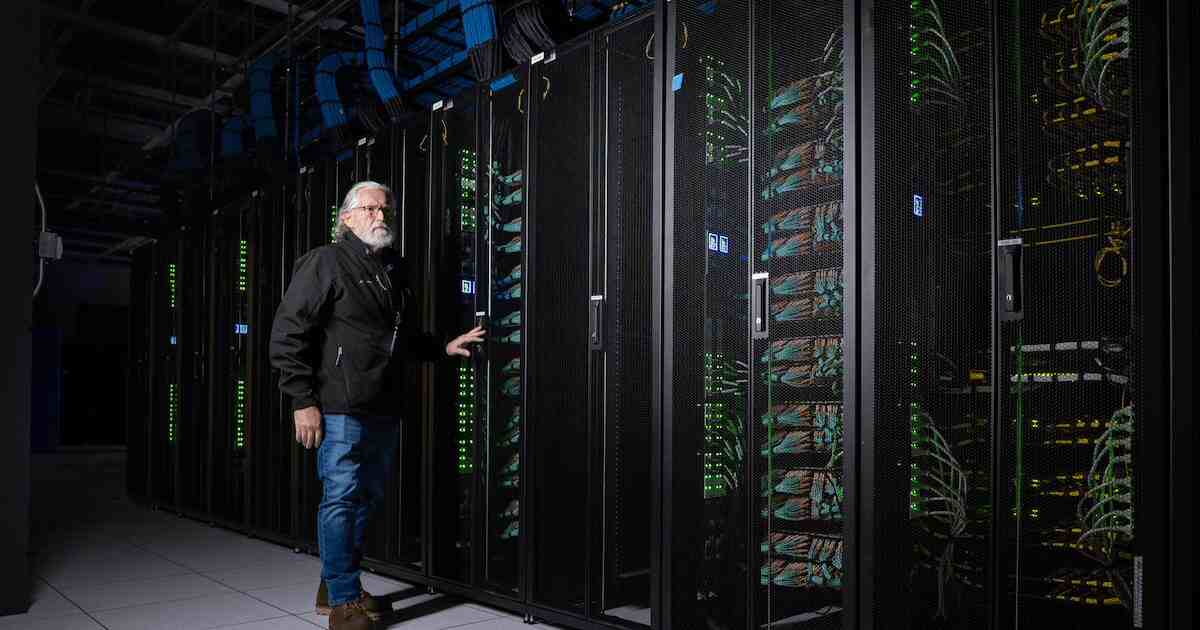

What sets this discovery apart is the critical role of supercomputing in constructing these “virtual twin” universes. The team’s simulations began with initial conditions seeded by early-universe observations and then evolved forward using numerical methods that solve Einstein’s equations of gravity together with fluid dynamics for dark matter and baryonic matter. Such calculations involve millions of interacting elements and demand parallel computation at scale, the exclusive domain of HPC systems. By performing these simulations on powerful supercomputers, astronomers were able to trace the gravitational influence of the large-scale sheet on galaxy motions and verify that this configuration reproduces observed velocities with high fidelity.

According to Helmi, this marks the first time that the distribution and velocity field of dark matter in the region surrounding our galactic neighborhood have been quantitatively constrained in a manner consistent with both ΛCDM cosmology and observed local dynamics. “Astronomers have been trying to solve this problem for decades,” Helmi said. “It is extraordinary that, based purely on the motions of galaxies, we can infer a mass distribution that matches the observed positions and motions of galaxies within and just outside the Local Group.”

For the supercomputing community, this achievement is profoundly inspirational. It highlights how modern HPC infrastructures, with their massive parallelism, high memory bandwidth, and optimized numerical libraries, are enabling scientists to probe cosmic questions that were once deemed intractable. These simulations not only illuminate the hidden architecture of our cosmic neighborhood but also exemplify how simulation-based science complements observation, allowing researchers to explore scenarios that cannot be directly imaged or measured.

Beyond resolving a decades-old enigma in galactic astronomy, this work reinforces the broader scientific view that large-scale structures, from filaments of the cosmic web to planar mass configurations like the one now identified around the Milky Way, are fundamental to understanding the universe’s evolution. Supercomputers are not just tools for speeding up calculations; they are essential engines of discovery that empower scientists to simulate the universe with realism and precision.

As supercomputing technology advances, both in terms of hardware and algorithms, scientists are poised to create increasingly detailed “virtual universes.” These sophisticated simulations will not only put our cosmological models to the test but also inform future telescope and space mission observations, leading to a richer understanding of our cosmic context.

According to the study’s authors, uncovering the influence of the Local Sheet on galactic motion is more than just resolving a persistent mystery; it demonstrates the remarkable discoveries possible when computational power, observational insights, and scientific curiosity are combined on a cosmic scale.

How to resolve AdBlock issue?

How to resolve AdBlock issue?