When gravitational-wave detectors picked up the signal known as GW231123, astronomers were stunned. Two black holes, far too massive and spinning far too fast, merged in a way that standard stellar evolution shouldn’t allow. One scientist summed up the global reaction: "Those black holes should not exist."

Now, researchers supported by the Simons Foundation believe they have an answer. Their new paper in The Astrophysical Journal Letters proposes that these massive black holes were born not from earlier mergers but directly from the collapse of enormous, rapidly rotating stars: no supernova, no explosion, just a straight plunge into darkness. To test this idea, they turned to supercomputing. But does the model truly explain the mystery, or are we simply forcing the data to fit a convenient narrative?

The simulations

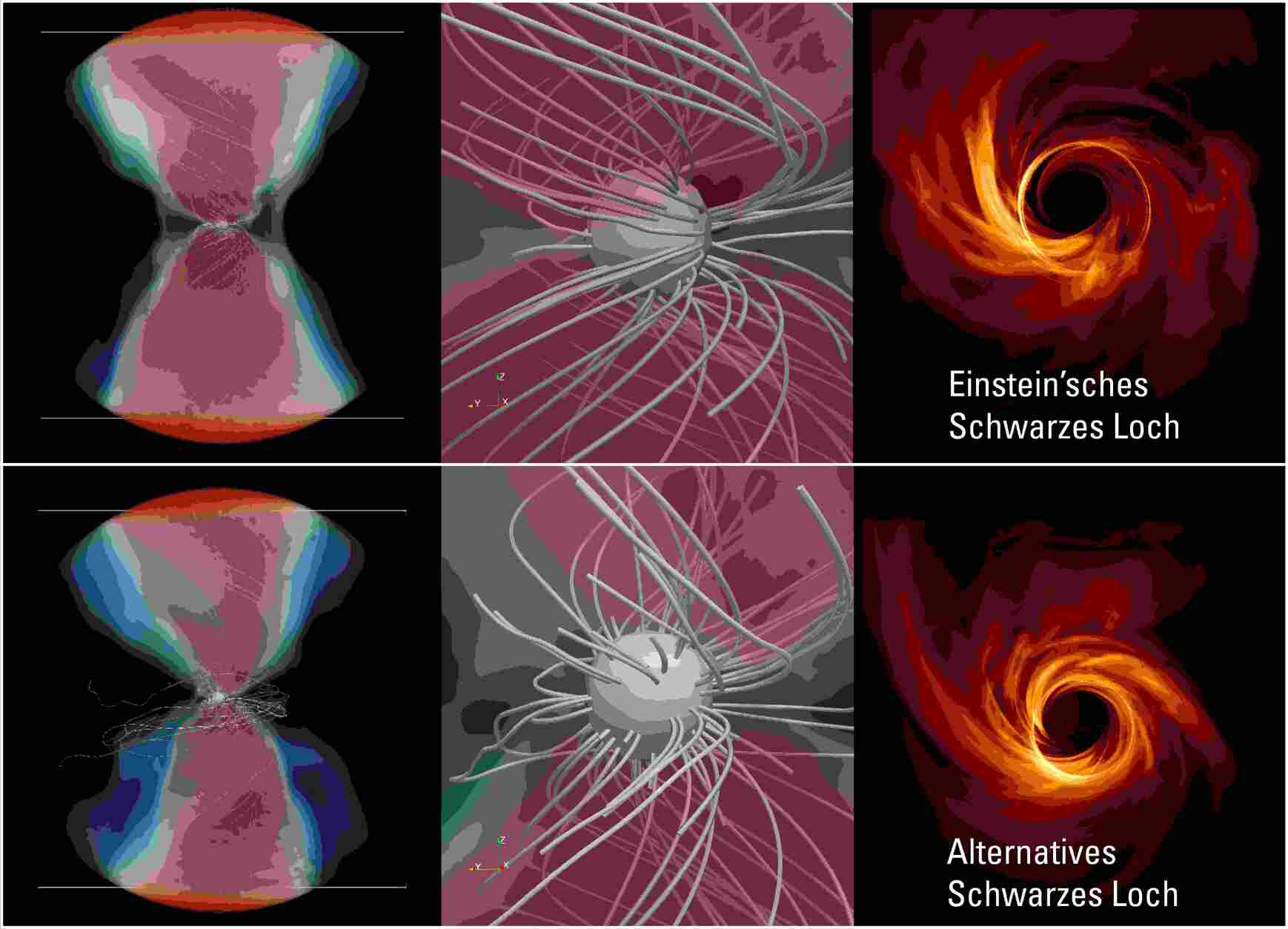

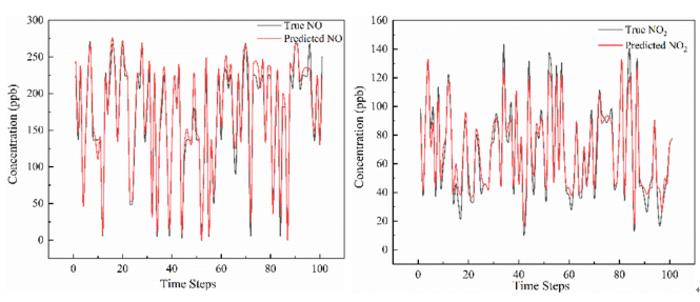

The research team ran state-of-the-art, end-to-end general-relativistic magnetohydrodynamic (GRMHD) simulations, among the most advanced black-hole formation simulations currently available. The models ran on U.S. Department of Energy supercomputers, including systems at Argonne and NERSC.

Unlike previous models that averaged out messy physics or assumed idealized collapse, these simulations:

- Track a massive star from the late burning stage → collapse → black hole birth.

- Include magnetic fields, rotation, and the feedback between jets and accretion disks.

- Model how much mass falls into the black hole and how much is blasted away.

The key claim:

A 250-solar-mass helium star collapses into a black hole of ~40 solar masses, which then accretes or blows off the rest, depending on how magnetic fields choke or feed the flow. With a moderate magnetic field, the simulation produced a final black hole of ~100 solar masses with high spin, strikingly similar to GW231123.

In other words: rotation + magnetic fields + gravitational collapse = black holes that shouldn’t exist, suddenly… exist.
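The mass budget implied by those figures can be worked out with simple arithmetic. The sketch below is only bookkeeping on the approximate numbers quoted above (250, ~40, and ~100 solar masses); it assumes every solar mass ends up either in the black hole or in the ejecta, ignores any mass-energy radiated away, and is not taken from the paper. The function and variable names are illustrative.

```python
# Approximate figures quoted in the article, in solar masses.
STAR_MASS = 250.0      # initial helium star
SEED_BH_MASS = 40.0    # black hole formed at collapse
FINAL_BH_MASS = 100.0  # black hole at the end of the moderate-field run


def mass_budget(star, seed, final):
    """Split the star's mass into accreted and ejected parts.

    Assumption: mass is conserved and ends up either in the
    black hole or in the outflow (no radiated mass-energy).
    """
    accreted = final - seed   # mass added to the black hole after birth
    ejected = star - final    # mass that never ends up in the black hole
    return {
        "accreted": accreted,
        "ejected": ejected,
        "retained_fraction": final / star,
    }


budget = mass_budget(STAR_MASS, SEED_BH_MASS, FINAL_BH_MASS)
print(budget)
```

Under these assumptions, roughly 60 solar masses are accreted after the black hole forms and about 150 are blown away, so the black hole keeps around 40 percent of the original star.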

The “mass gap” problem

There’s a no-man’s-land of black hole masses, from ~70 to ~140 solar masses, called the pair-instability mass gap. In current theory, stars in this mass band explode violently before they can ever form a black hole. So how did GW231123 contain two of them?

The paper posits that rapid rotation quells the explosive instability, directing mass toward the black hole. Magnetic fields then regulate mass accretion and ejection; too weak, and the black hole overgrows, while too strong, and it expels its own fuel. Only a "Goldilocks" magnetic field produces the massive, high-spin black holes observed.
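To make the gap concrete, here is a minimal check that uses the approximate boundaries quoted above (~70 to ~140 solar masses). The boundaries are uncertain in practice, so the defaults are assumptions taken from this article rather than fixed physical constants, and the function name is illustrative.

```python
# Approximate gap boundaries quoted in the article, in solar masses.
GAP_LOW = 70.0
GAP_HIGH = 140.0


def in_mass_gap(mass_msun, low=GAP_LOW, high=GAP_HIGH):
    """Return True if a black hole mass falls inside the assumed gap."""
    return low <= mass_msun <= high


# The ~100 solar-mass remnant from the simulation sits inside the gap,
# while the ~40 solar-mass black hole formed at collapse does not.
print(in_mass_gap(100.0))
print(in_mass_gap(40.0))
```

Passing different `low` and `high` values shows how sensitive the "forbidden" label is to where the boundaries are drawn.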

The work is a tour de force of supercomputing and theoretical astrophysics. But skepticism is warranted.

- Too many knobs to turn. Rotation rate, magnetic field strength, metallicity: tweak any one of these and you get a different outcome. A perfect match may say more about parameter tuning than about nature.

- Assumptions stacked on assumptions. The model assumes these hyper-massive stars existed, paired in binaries, both rotating rapidly and with finely tuned magnetic fields. We have hints that such stars might exist, not proof.

- The simulation freezes spacetime. The GRMHD code evolves matter and magnetic fields but does not dynamically evolve the black hole itself; its mass and spin are adjusted afterward in post-processing. That means the most spectacular claim, reproducing the final mass and spin, comes partially from inference, not direct simulation.

- Explanations arrive after the observation. The merger was first called “impossible”; the theory arrived later. That’s classic scientific back-filling: plausible, but unproven.

Supercomputers are powerful, but they can turn into wish-fulfillment engines if we’re not careful.

What we can say with confidence

- The event is real.

- The black holes are too massive and too fast-spinning for standard models.

- Supercomputing is currently the only practical way to model the collapse in full magnetic and relativistic detail.

Whether this simulation reflects what nature actually does, or simply what our models are capable of doing, remains open.

The bottom line

A breakthrough? Possibly. A closed case? Not even close.

Supercomputers offer candidate explanations, not definitive truths. Confirming this one will take future observations and the discovery of similar black hole mergers.
