'Shell structures' are the first of their kind found in the galaxy

Nearly 3 billion years ago, a dwarf galaxy plunged into the center of the Milky Way and was ripped apart by the gravitational forces of the collision. Astrophysicists announced today that the merger produced a series of telltale shell-like formations of stars in the vicinity of the Virgo constellation, the first such "shell structures" to be found in the Milky Way. The finding offers further evidence of the ancient event and new possible explanations for other phenomena in the galaxy.

Astronomers identified an unusually high density of stars called the Virgo Overdensity about two decades ago. Star surveys revealed that some of these stars are moving toward us while others are moving away, which is also unusual, as a cluster of stars would typically travel in concert. Based on emerging data, astrophysicists at Rensselaer Polytechnic Institute proposed in 2019 that the overdensity was the result of a radial merger, the stellar version of a T-bone crash.

"When we put it together, it was an 'aha' moment," said Heidi Jo Newberg, Rensselaer professor of physics, applied physics, and astronomy, and lead author of The Astrophysical Journal paper detailing the discovery. "This group of stars had a whole bunch of different velocities, which was very strange. But now that we see their motion as a whole, we understand why the velocities are different, and why they are moving the way that they are."  {module INSIDE STORY}

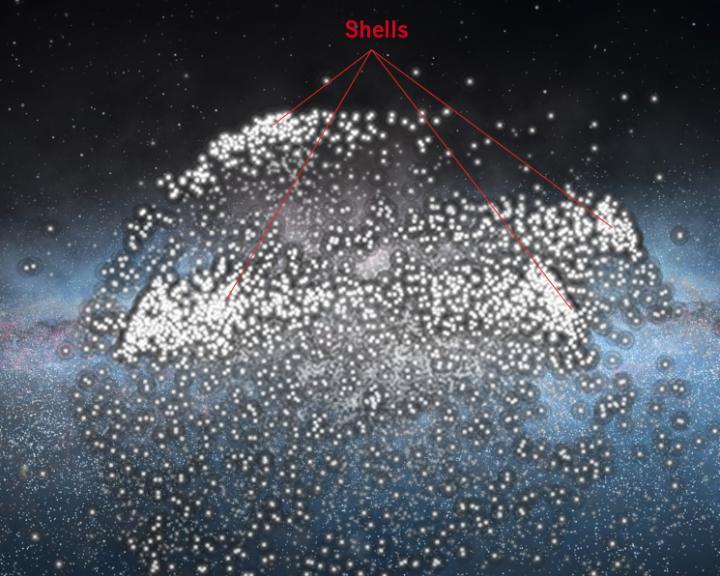

The newly announced shell structures are curved planes of stars, shaped like umbrellas, left behind as the dwarf galaxy was torn apart. The dwarf galaxy literally bounced up and down through the center of the galaxy as it was incorporated into the Milky Way, an event the researchers have named the "Virgo Radial Merger." Another shell structure is created each time the dwarf galaxy's stars pass quickly through the galactic center, slow down as the Milky Way's gravity pulls them back until they stop at their farthest point, and then turn around to crash through the center again. Simulations that match survey data can be used to calculate how many cycles the dwarf galaxy has endured, and therefore when the original collision occurred.

The new paper identifies two shell structures in the Virgo Overdensity and two in the Hercules Aquila Cloud region, based on data from the Sloan Digital Sky Survey, the European Space Agency's Gaia space telescope, and the LAMOST telescope in China. Supercomputer modeling of the shells and the motion of the stars indicates that the dwarf galaxy first passed through the galactic center of the Milky Way 2.7 billion years ago.

Newberg is an expert on the halo of the Milky Way, a spherical cloud of stars that surrounds the spiral arms of the central disk. Most if not all of those stars appear to be "immigrants," stars that formed in smaller galaxies that were later pulled into the Milky Way. As the smaller galaxies coalesce with the Milky Way, their stars are pulled by so-called "tidal forces," the same kind of differential forces that make tides on Earth, and they eventually form a long cord of stars moving in unison within the halo. Such tidal mergers are fairly common and have been the focus of much of Newberg's research over the past two decades.

More violent "radial mergers" are considered far less common. Thomas Donlon II, a Rensselaer graduate student and first author of the paper, said that they were not initially seeking evidence of such an event.

"There are other galaxies, typically more spherical galaxies, that have a very pronounced shell structure, so you know that these things happen, but we've looked in the Milky Way and hadn't seen really obvious gigantic shells," said Donlon, who was lead author on the 2019 paper that first proposed the Virgo Radial Merger. As they modeled the movement of the Virgo Overdensity, they began to consider a radial merger. "And then we realized that it's the same type of merger that causes these big shells. It just looks different because, for one thing, we're inside the Milky Way, so we have a different perspective, and also this is a disk galaxy and we don't have as many examples of shell structures in disk galaxies."

View a video simulation of the formation of the stellar shell structures.

The finding has potential implications for a number of other stellar phenomena, including the Gaia Sausage, a formation of stars believed to have resulted from the merger of a dwarf galaxy between 8 and 11 billion years ago. Previous work supported the idea that the Virgo Radial Merger and the Gaia Sausage resulted from the same event. The much lower age estimate for the Virgo Radial Merger means that either the two are different events, or the Gaia Sausage is much younger than thought and could not have caused the creation of the thick disk of the Milky Way, as previously claimed. A recently discovered spiral pattern in position and velocity data for stars close to the sun, sometimes called the Gaia Snail, and a proposed event called the Splash may also be associated with the Virgo Radial Merger.

"There are lots of potential tie-ins to this finding," Newberg said. "The Virgo Radial Merger opens the door to a greater understanding of other phenomena that we see and don't fully understand, and that could very well have been affected by something having fallen right through the middle of the galaxy less than 3 billion years ago."
