A pioneering team at the University of Aberdeen in Scotland has introduced an AI model named SAGRNet, which can potentially transform environmental and agricultural monitoring worldwide.

Developed by Dr. Lydia Sam, Dr. Anshuman Bhardwaj, and their colleagues, SAGRNet—short for Sampling and Attention-based Graph Convolutional Residual Network—utilizes deep learning to map land cover from satellite imagery with greater accuracy and efficiency. Instead of analyzing individual pixels, the model examines entire landscape features, such as forests, fields, and waterways, providing deeper insights into vegetation types and their contexts.

Initially trained on the diverse terrains of northeast Scotland, encompassing habitats ranging from farmland to urban areas, SAGRNet has demonstrated impressive adaptability. It has performed well in various regions worldwide, including Guangzhou (China), Durban (South Africa), Sydney (Australia), New York City (USA), and Porto Alegre (Brazil). The team has made the model open-source so that decision-makers, researchers, and conservationists can implement it in their local contexts.

“Our system of deep learning algorithms can instantly and accurately recognize different types of land cover, vegetation, or crops in an area,” said Dr. Sam.

Significantly, the model provides detailed information while minimizing computational demands—an essential advantage for timely monitoring of climate impacts, such as wildfires, floods, and droughts.

Dr. Bhardwaj emphasized its versatility: “It can also monitor crop growth, facilitating more accurate harvest predictions and helping make better-informed decisions about land-use sustainability.”

PhD researcher Baoling Gui pointed out how seamlessly SAGRNet integrates into operational pipelines, benefiting various applications from ecological studies to national land-use surveys.

This research, published in the prestigious ISPRS Journal of Photogrammetry and Remote Sensing, was supported by the UK’s BBSRC International Institutional Award, with contributions from international collaborators in Spain and Germany.

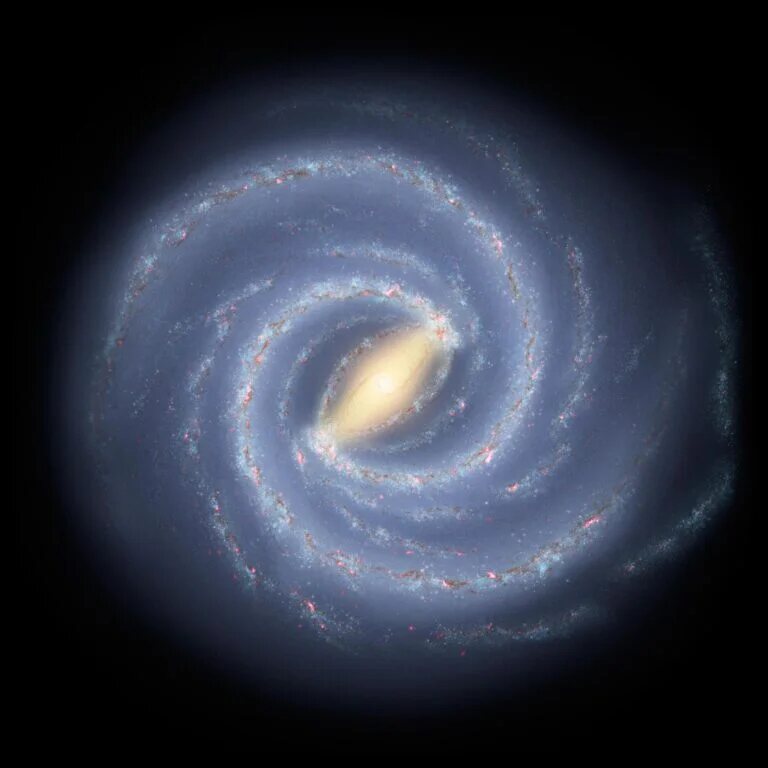

In bold news, the University of Southern California claimed its new “COZMIC” simulation, dubbed “Milky-Way twins,” leverages a powerful supercomputer to uncover hidden truths about dark matter. The announcement paints a picture of groundbreaking progress, but how much of this is genuine scientific advance, and how much is hype?
